Integrate infrastructure and application monitoring for faster troubleshooting

One spot for infra and APM. No place for downtime.

- View CPU and memory for hosts, containers, and VMs within APM to easily detect under-provisioned resources

- Quickly correlate drops in performance with dynamic charts showing host and APM metrics

- Surface kernel-level TCP and DNS metrics (handshake latency, retransmissions, DNS failures) essential to pinpointing root cause

- Visualize relationships and dependencies across infra and apps to detect root cause faster

- Break down silos across teams, tools, and data to ensure higher application uptime

Proactively monitor your entire estate

- See status of all hosts, apps, events, and alert activity to understand overall system health

- Detect health patterns at a glance with a high-density overview of all your entities

- Easily track deviations across throughput, response time, and errors

- Quickly assess current software and uncover outdated software or problematic settings

Quantify the impact radius of every incident

- Analyze impacts of app deployments on hosts with change tracking embedded directly in infra

- Monitor host and config changes to reduce their impact on the health and behavior of your applications

- Filter and compare host performance with software change events to determine root causes fast

- Uncover entity relationships to pinpoint issue sources using automap

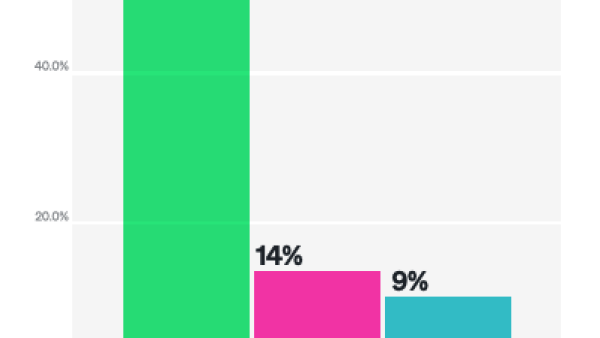

Get 3x the value, with no peak billing

- Only pay for what you use, with our customer-centric, consumption-based pricing

- Never pay additional charges for custom metrics and third-party data

- Pay one-third the cost of competitors and monitor 100% of your stack

- Start viewing all your telemetry in one place, quickly and affordably, with 250+ infra quickstarts



Infrastructure Monitoring FAQs

Infrastructure monitoring offers flexible, dynamic observability of your entire infrastructure, from services running in the cloud or on dedicated hosts to containers running in Kubernetes. Correlate the health and performance of all your hosts to application context, logs, and configuration changes for successful observability.

Operations teams need easier ways to troubleshoot complex systems, prioritize where to focus, and identify root causes faster.

The first five tasks to get you started are:

- Install the infrastructure agent for Linux, macOS, and Windows operating systems.

- Establish cloud integrations for Amazon Web Services (AWS), Azure, and Google Cloud Platform.

- Set up on-host integrations for MySQL, NGINX, Cassandra, and Kafka, along with the Kubernetes and Prometheus integrations.

- Integrate host logs with the infrastructure agent.

- Create custom dashboards to bring all related telemetry together.

Yes. With New Relic infrastructure monitoring, you get deep visibility across your on-premises, cloud, hybrid, and even multi-cloud environments.

New Relic infrastructure monitoring supports integrations with AWS, Microsoft Azure, and Google Cloud Platform.

Our infrastructure agent supports many operating systems and can be deployed anywhere that has network connectivity to New Relic.

Golden metrics and signals are the pieces of information about an entity that we consider most important for that entity. Our infrastructure monitoring experience helps you analyze related entities, logs, alerts, golden signals, network metrics (e.g., TCP handshake latency, retransmissions, DNS failures), processes, and storage in context on one unified platform to identify root causes and resolve issues faster.
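As a rough illustration of what golden signals capture, the sketch below summarizes one window of requests into throughput, response time, and error rate, then flags a value that strays from its recent history. The `Request` type, the function names, and the three-standard-deviation threshold are all assumptions made for this example; none of them come from New Relic's product or API.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class Request:
    """Hypothetical record of one handled request (illustrative only)."""
    duration_ms: float
    is_error: bool


def golden_signals(requests, window_seconds):
    """Summarize one time window into throughput, response time, and error rate."""
    count = len(requests)
    if count == 0:
        return {"throughput_rpm": 0.0, "avg_response_ms": 0.0, "error_rate": 0.0}
    return {
        # Requests per minute, scaled from the window length.
        "throughput_rpm": count * 60.0 / window_seconds,
        "avg_response_ms": mean(r.duration_ms for r in requests),
        # Fraction of requests in the window that failed.
        "error_rate": sum(r.is_error for r in requests) / count,
    }


def deviates(history, current, threshold=3.0):
    """Return True when `current` is more than `threshold` standard
    deviations away from the mean of `history` (an assumed, simple rule)."""
    if len(history) < 2:
        return False  # Not enough data to judge a deviation.
    mu = mean(history)
    sigma = pstdev(history)
    if sigma == 0:
        return current != mu
    return abs(current - mu) / sigma > threshold
```

For example, calling `golden_signals` on each one-minute window and passing the resulting `avg_response_ms` values to `deviates` gives a simple way to spot a sudden slowdown. A production system would likely use more robust baselines than a plain mean and standard deviation.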