Observability is about understanding a system’s performance from the data it generates. It’s a practice that enables engineers to quickly analyze system behavior and take proactive measures to boost performance and reliability. Observability takes the long-established practice of “monitoring” to a higher degree of insight into your systems.

Observability platforms provide a centralized way to collect, store, analyze, and visualize data. These data include metrics, events, logs, and traces (MELT), which provide a connected real-time view of all operational data in a software system. Observability platforms also provide engineers with the flexibility to explore applications and infrastructure by asking critical questions. As a result, engineers gain deeper insight into system behavior, enabling more informed decisions that drive significant improvements in system performance and reliability.

Pillars of observability

Gaining insight into your system’s behavior comes from four fundamental data types, or observability pillars, in an observability platform. Each pillar offers distinct insight into how your systems are functioning:

- Metrics are numerical measurements of something at a given point in time. At minimum, a metric should have a timestamp, a value, and a name. Metrics let you gather and store large amounts of specific information that can be easily aggregated and manipulated for analysis.

- Events are a richer data type that can be defined with many parameters besides time and value. How you define an event and the data it captures depends on what you need to understand about the system.

- Logs offer even more depth of information, as they typically record actions software takes as operations and tasks progress. Where an event might be triggered by a threshold violation, the software tasks executed to reach that threshold could be recorded in logs. Those records can then be searched and parsed in various ways to reveal important information about the system. Logs can contain both structured and unstructured data. Artificial intelligence (AI) tools that analyze log data are evolving to help system engineers more accurately predict system behaviors.

- Traces follow a request or transaction as it moves across different operations. They can reveal how different systems or subsystems interact, whether they’re part of the organization’s infrastructure or connected to a completely different domain.
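To make the four pillars concrete, here is a minimal sketch of how each data type might be shaped, using Python standard-library dataclasses. The class and field names are illustrative assumptions, not any platform’s schema; the `parent_id` link on spans is what lets a trace connect one operation to the operation that called it.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List, Optional


@dataclass
class Metric:
    # The minimum described above: a name, a timestamp, and a value.
    name: str
    timestamp: float  # Unix epoch seconds
    value: float


@dataclass
class Event:
    # Events carry arbitrary attributes beyond time and value.
    name: str
    timestamp: float
    attributes: Dict[str, Any] = field(default_factory=dict)


@dataclass
class LogRecord:
    # A log line; the optional trace_id ties it back to a request.
    timestamp: float
    message: str
    trace_id: Optional[str] = None


@dataclass
class Span:
    # One operation within a trace; parent_id links it to its caller.
    trace_id: str
    span_id: str
    parent_id: Optional[str]
    operation: str
    start: float
    end: float

    @property
    def duration(self) -> float:
        return self.end - self.start


def children_of(spans: List[Span], span_id: str) -> List[Span]:
    """Return the spans called directly by the span with the given id."""
    return [s for s in spans if s.parent_id == span_id]
```

For example, a `GET /checkout` span with a child `SELECT orders` span records that the database call happened inside the checkout request, and a `LogRecord` carrying the same `trace_id` can be shown alongside both.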

In addition to these observability pillars, other data—such as user experience, metadata, and other structured and unstructured content—can help you understand a system’s behavior.


How does observability work?

Once engineers understand what each type of telemetry data offers, they can define how to collect it from the various endpoints and services across a multi-cloud environment. The observability platform then provides the analytics and visualization that engineers need for insight.

Endpoints can include data centers, Internet of Things (IoT) edge hardware, software, and cloud infrastructure components, such as containers, open source tools, and microservices. The observability platform reveals what’s occurring across the entire fleet of services, software, and hardware components, helping engineers resolve issues and optimize systems proactively and efficiently.
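The core idea, many endpoints reporting into one place that can answer fleet-wide questions, can be sketched with a toy in-memory store. This is a hypothetical illustration in plain Python (the `TelemetryStore` class and its methods are invented for the example); a real platform adds durable storage, indexing, and a query language.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, List, Tuple


class TelemetryStore:
    """Toy central store: every endpoint reports (source, metric name, value)."""

    def __init__(self) -> None:
        self._points: Dict[Tuple[str, str], List[float]] = defaultdict(list)

    def ingest(self, source: str, name: str, value: float) -> None:
        self._points[(source, name)].append(value)

    def fleet_view(self, name: str) -> Dict[str, float]:
        """Average of one metric for every source that reported it."""
        return {
            source: mean(values)
            for (source, metric), values in self._points.items()
            if metric == name
        }

    def outliers(self, name: str, limit: float) -> List[str]:
        """Sources whose average for `name` exceeds `limit`, sorted by name."""
        return sorted(s for s, avg in self.fleet_view(name).items() if avg > limit)


store = TelemetryStore()
store.ingest("edge-gateway-1", "cpu_utilization", 0.35)
store.ingest("k8s-node-7", "cpu_utilization", 0.92)
store.ingest("k8s-node-7", "cpu_utilization", 0.96)
print(store.outliers("cpu_utilization", limit=0.9))  # ['k8s-node-7']
```

Because an edge gateway and a Kubernetes node report into the same store, one query covers both, which is the "entire fleet" view described above.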

Why observability matters to modern digital business

Enterprise infrastructures continue to grow more complex from the edge to the data center, spanning IoT devices, open-source and cloud-native microservices running on Kubernetes clusters, and private, public, and hybrid cloud infrastructure. Distributed teams working across these components can develop and deploy solutions with increased speed and efficiency. But simply monitoring these systems and understanding the data they produce is difficult, and costly within the constraints of tight IT budgets.

Organizations today rely on DevOps teams, continuous delivery, and agile development, making the entire software delivery process faster than ever before. Accelerated development and faster release timelines can make it harder to detect issues when they arise.

Observability delivers new insights that help teams run more efficiently, optimize systems faster, and solve problems more quickly, all of which affect the organization’s bottom line.

The business case for implementing observability within your organization is clear. In the 2024 Observability Forecast, we found that 46% said observability improved system uptime and reliability. Even more telling, 58% said they received $5 million+ total value per year from their observability investment. We crunched the numbers: The median return on investment (ROI) for observability across all respondents was 4x (295%). In other words, for every $1 spent, respondents believe they receive $4 of value. In this context, understanding the role of business application monitoring becomes crucial, as it forms a key component of a comprehensive observability strategy.
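The two ROI figures are consistent if ROI is read as net value over cost. That reading is an assumption here, since the report’s exact formula isn’t quoted. With total value $V$ and cost $C$:

```latex
\mathrm{ROI} = \frac{V - C}{C} = 2.95
\quad\Longrightarrow\quad
V = (1 + 2.95)\,C = 3.95\,C \approx 4\,C
```

Under this reading, 295% is the net gain, while "4x" is the total value returned per dollar spent (about $3.95, rounded to $4).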


Observability vs. monitoring: What’s the difference?

Observability and monitoring are not the same thing. Understanding the difference starts with understanding the gaps in traditional monitoring systems.

While traditional monitoring provided adequate insight into legacy infrastructures, observability takes monitoring to the next level, empowering IT and DevOps teams to manage, deliver, and optimize complex systems.

Monitoring

Monitoring presumes you have an idea of what might go wrong, so you can watch specific aspects of the system and be alerted to potential problems, such as limited network bandwidth. That typically means preconfiguring dashboards that gather data from a limited set of touchpoints, along with alerts for anticipated performance issues. However, in more complex systems, it’s difficult to predict what problems you’ll encounter. Cloud-native environments, for example, are dynamic and complex, and DevOps practices introduce new unknowns as software releases accelerate.

Observability

With observability, teams must fully instrument the environment and software to provide rich data that can be analyzed and parsed in many ways, including ways that weren’t anticipated, or even possible to anticipate, beforehand. Observability data comes not only from metrics, events, logs, and traces but also from richer information, such as metadata, user behavior, network topology and mapping, and code-level details.

With rich data and an intelligent observability platform, IT and DevOps teams can flexibly investigate and explore the causes of issues beyond traditional monitoring.

Observability and monitoring

To be clear, observability doesn't eliminate the need for monitoring. Monitoring just becomes one of the techniques used to achieve observability.

Think of it this way: Observability (a noun) describes how well you can understand your complex system. Monitoring (a verb) is an action you take to improve that understanding.

Issues with conventional monitoring

Conventional monitoring can only track known unknowns: the things you know to ask about in advance (for example, “What’s my application’s throughput?”, “What does compute capacity look like?”, or “Alert me when I exceed a certain error budget.”). On its own, that isn’t enough to succeed in the complex world of microservices and distributed systems.
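Those known-unknown checks map naturally onto predefined rules. The sketch below, in plain Python with hypothetical rule names, metric names, and thresholds, shows the limitation: only metrics someone thought to write a rule for are ever evaluated.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Rule:
    name: str
    metric: str
    breached: Callable[[float], bool]


# Hypothetical thresholds, chosen in advance: the essence of monitoring.
RULES = [
    Rule("low throughput", "requests_per_sec", lambda v: v < 50),
    Rule("compute capacity", "cpu_utilization", lambda v: v > 0.9),
    Rule("error budget exhausted", "error_budget_consumed", lambda v: v >= 1.0),
]


def evaluate(rules: List[Rule], snapshot: Dict[str, float]) -> List[str]:
    """Return the names of rules whose metric is present and breached."""
    return [
        r.name for r in rules
        if r.metric in snapshot and r.breached(snapshot[r.metric])
    ]


snapshot = {"requests_per_sec": 120.0, "cpu_utilization": 0.95, "queue_depth": 10_000}
print(evaluate(RULES, snapshot))  # ['compute capacity']
```

The runaway `queue_depth` never triggers anything, because no rule mentions it.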

Observability is the key

Observability gives you the flexibility to understand patterns you hadn’t even thought about before, the unknown unknowns. It’s the power to not just know that something is wrong but to also understand why.
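With rich event data, you can slice by any attribute at query time, including attributes nobody planned an alert for. A minimal sketch in plain Python; the `error_rate_by` helper and the attribute names are hypothetical.

```python
from collections import Counter
from typing import Any, Dict, Iterable


def error_rate_by(events: Iterable[Dict[str, Any]], attribute: str) -> Dict[Any, float]:
    """Group events by any attribute chosen at query time and compute error rates."""
    totals: Counter = Counter()
    errors: Counter = Counter()
    for event in events:
        key = event.get(attribute, "<missing>")
        totals[key] += 1
        if event.get("error"):
            errors[key] += 1
    return {key: errors[key] / totals[key] for key in totals}


events = [
    {"region": "us-east", "app_version": "2.3.0", "error": False},
    {"region": "us-east", "app_version": "2.3.1", "error": True},
    {"region": "eu-west", "app_version": "2.3.1", "error": True},
    {"region": "eu-west", "app_version": "2.3.0", "error": False},
]
print(error_rate_by(events, "region"))       # {'us-east': 0.5, 'eu-west': 0.5}
print(error_rate_by(events, "app_version"))  # {'2.3.0': 0.0, '2.3.1': 1.0}
```

Grouping by region reveals nothing, but the same data grouped by version points straight at the `2.3.1` release, and nobody had to anticipate that question in advance.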

What are the components of observability best practices?

Observability in modern systems has four fundamental pieces: metrics, events, logs, and traces, often referred to as MELT. But these alone will not provide you with the insights you need to build and operate better software systems. The following areas of focus can help you get the most out of observability:

Open instrumentation

Open instrumentation means gathering telemetry data without being locked into a specific vendor’s agents or data formats. Instrumentation code (often packaged as agents) tracks and measures data flowing through your software application. Examples include vendor-agnostic, open-source observability frameworks and tools such as OpenTelemetry and Prometheus.
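The idea of separating instrumentation from the backend can be sketched in standard-library Python. The `Exporter`, `InMemoryExporter`, and `Instrumentation` names are hypothetical and are not the OpenTelemetry API; in OpenTelemetry, the analogous roles are played by a tracer and a pluggable span exporter. Swapping the exporter changes where data goes without touching the instrumented application code.

```python
import time
from contextlib import contextmanager
from typing import Any, Dict, Iterator, List, Optional, Protocol


class Exporter(Protocol):
    """Anything that can receive a finished telemetry record."""

    def export(self, record: Dict[str, Any]) -> None: ...


class InMemoryExporter:
    """Keeps records in a list; a real backend would ship them over the network."""

    def __init__(self) -> None:
        self.records: List[Dict[str, Any]] = []

    def export(self, record: Dict[str, Any]) -> None:
        self.records.append(record)


class Instrumentation:
    """Vendor-neutral timing wrapper: application code only sees this class."""

    def __init__(self, exporter: Exporter) -> None:
        self.exporter = exporter

    @contextmanager
    def span(self, name: str, **attributes: Any) -> Iterator[None]:
        start = time.perf_counter()
        error: Optional[str] = None
        try:
            yield
        except Exception as exc:
            error = type(exc).__name__  # record the failure, then re-raise it
            raise
        finally:
            self.exporter.export(
                {"name": name, "duration_s": time.perf_counter() - start,
                 "error": error, **attributes}
            )


exporter = InMemoryExporter()
instrumentation = Instrumentation(exporter)
with instrumentation.span("charge_card", customer_tier="gold"):
    time.sleep(0.01)  # stand-in for real work
print(exporter.records[0]["name"], exporter.records[0]["error"])  # charge_card None
```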

AIOps tools

To keep your modern infrastructure available, you need to accelerate incident response. AIOps solutions use machine learning (ML) models to automate IT operations processes such as correlating, aggregating, and prioritizing incident data. These tools help you reduce false alarms, detect issues proactively, and lower mean time to resolution (MTTR).
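AIOps products use ML models for this; the sketch below swaps in a much simpler rule-based technique, purely to show what correlating, aggregating, and prioritizing incident data means in practice. Alerts for the same service and symptom that arrive within an assumed 5-minute window of each other are folded into one incident, and the largest incidents are listed first. The `Alert` type and `correlate` function are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Alert:
    timestamp: float  # seconds
    service: str
    symptom: str


def correlate(alerts: List[Alert], window_s: float = 300.0) -> List[List[Alert]]:
    """Fold alerts with the same (service, symptom) into one incident while each
    arrives within window_s of the previous one; return incidents largest first."""
    incidents: List[List[Alert]] = []
    open_groups: Dict[Tuple[str, str], List[Alert]] = {}
    for alert in sorted(alerts, key=lambda a: a.timestamp):
        key = (alert.service, alert.symptom)
        group = open_groups.get(key)
        if group is not None and alert.timestamp - group[-1].timestamp <= window_s:
            group.append(alert)  # aggregate into the existing incident
        else:
            group = [alert]  # start a new incident
            open_groups[key] = group
            incidents.append(group)
    # Prioritize: incidents with the most correlated alerts come first.
    return sorted(incidents, key=len, reverse=True)


alerts = [
    Alert(0, "checkout", "http_5xx"),
    Alert(60, "checkout", "http_5xx"),
    Alert(30, "search", "high_latency"),
    Alert(120, "checkout", "http_5xx"),
    Alert(1000, "checkout", "http_5xx"),
]
for incident in correlate(alerts):
    print(incident[0].service, incident[0].symptom, len(incident))
```

Five raw alerts become three incidents, with the burst of checkout errors at the top of the queue.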

Benefits of an observability tool

Improve customer experience

Observability tools empower engineers and developers to create better customer experiences despite the increasing complexity of the digital enterprise.

With observability, you can:

- Collect, explore, alert on, and correlate all telemetry data types

- Understand user behavior

- Deliver a better digital experience that delights your users

- Increase conversion, retention, and brand loyalty

- Decrease downtime and improve MTTR

Observability also makes it easier to drive operating efficiencies and fuel innovation and growth. For example, a team can use an observability platform to understand critical incidents that occurred and proactively prevent them from recurring.

Improve team efficiency and innovation

When a new build is pushed out, teams can see how the application is performing and then drill down into why an error rate spikes or application latency rises, all the way to the particular node that has the problem.
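That drill-down can be sketched as a before/after comparison of per-node error rates around a deploy. The request records, node names, helper functions, and the 5-percentage-point threshold are all hypothetical; a platform would run this kind of comparison over far more data and dimensions.

```python
from typing import Dict, List, Tuple

Request = Dict[str, object]  # e.g. {"node": "node-a", "error": False}


def error_rate(requests: List[Request]) -> float:
    return sum(bool(r["error"]) for r in requests) / len(requests) if requests else 0.0


def regressions_by_node(
    before: List[Request], after: List[Request], threshold: float = 0.05
) -> Dict[str, Tuple[float, float]]:
    """Return {node: (rate_before, rate_after)} for nodes whose error rate
    rose by more than `threshold` after the deploy."""
    flagged = {}
    for node in sorted({str(r["node"]) for r in after}):
        rate_before = error_rate([r for r in before if r["node"] == node])
        rate_after = error_rate([r for r in after if r["node"] == node])
        if rate_after - rate_before > threshold:
            flagged[node] = (rate_before, rate_after)
    return flagged


before = [{"node": "node-a", "error": False}] * 10 + [{"node": "node-b", "error": False}] * 10
after = (
    [{"node": "node-a", "error": False}] * 10
    + [{"node": "node-b", "error": True}] * 3
    + [{"node": "node-b", "error": False}] * 7
)
print(regressions_by_node(before, after))  # {'node-b': (0.0, 0.3)}
```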

There are many other benefits, but here are a few we’ve heard from our customers:

- A single source of truth for operational data.

- Verified uptime and performance.

- An understanding of the real-time fluctuations of digital business performance.

- Better cross-team collaboration to troubleshoot and resolve issues faster.

- A culture of innovation.

- Greater operating efficiency to produce high-quality software at scale, accelerating time to market.

- Specific details to make better data-driven business decisions and optimize investments.

Challenges of observability

While not a paradigm shift, observability requires thinking beyond traditional IT solutions and can present challenges to organizations.

Thinking beyond traditional monitoring

Delivering hardware and software products and services today means thinking carefully about the customer experience and all the systems that give customers the experience business developers want them to have. Observability requires organizations—from business units to IT and DevOps teams—to rethink how to gain insight into their complex infrastructure. That means developing a strategy beyond traditional monitoring and integrating observability everywhere.

Redesigning your data

If your data is siloed or purely structured, you’ll likely have to rethink it and consider new sources, such as customer behavior, metadata, and other unstructured data. Additionally, with modern multi-cloud deployments, data can stream in quickly and with great complexity and variety as cloud instances and containers spin up and down in seconds.
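Turning unstructured log text into structured fields is often a first step. Below is a sketch using Python’s `re` module; the log format, field names, and `parse_log_line` helper are assumptions for illustration, since real log formats vary widely.

```python
import re
from typing import Any, Dict

# Assumed format: "<timestamp> <LEVEL> [<service>] <free-text message>"
LOG_PATTERN = re.compile(
    r"(?P<timestamp>\S+) (?P<level>[A-Z]+) \[(?P<service>[^\]]+)\] (?P<message>.*)"
)
KEY_VALUE = re.compile(r"(\w+)=(\S+)")


def parse_log_line(line: str) -> Dict[str, Any]:
    """Extract structured fields from one log line; keep the raw text if it doesn't match."""
    match = LOG_PATTERN.match(line)
    if match is None:
        return {"message": line, "parsed": False}
    record: Dict[str, Any] = match.groupdict()
    record["parsed"] = True
    # Pull key=value pairs out of the free-text message into their own fields.
    record["fields"] = dict(KEY_VALUE.findall(record["message"]))
    return record


line = "2024-05-01T12:00:00Z ERROR [checkout] payment failed order_id=123 amount=49.99"
print(parse_log_line(line)["fields"])  # {'order_id': '123', 'amount': '49.99'}
```

Lines that don’t match are kept rather than dropped, so nothing is lost while the parser is refined.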

Designing in instrumentation

With DevOps, teams are distributed and can deliver software faster. But they need to design in the necessary instrumentation, which takes additional design effort to deliver the right telemetry data for observability.

Why businesses are adopting observability

The 2024 Observability Forecast found that 41% of the 1,700 respondents cited an increased focus on security, governance, risk, and compliance as the top strategy or trend driving the need for observability.

Other top drivers included the adoption of AI technologies (41%), the integration of business apps into workflows (35%), the development of cloud-native application architectures (31%), an increased focus on customer experience management (29%), and migration to a multi-cloud environment (28%).

The report also found that most (83%) of respondents indicated that their organizations had employed at least two best practices, but only 16% had employed five or more, such as the following:

- Software deployment uses continuous integration and continuous delivery (CI/CD) practices (40%)

- Infrastructure that’s provisioned and orchestrated using automation tooling (37%)

- Ability to query data on the fly (35%)

- Portions of incident response are automated (34%)

- Telemetry (metrics, events, logs, and traces) is unified in a single pane for consumption across teams (35%)

- Telemetry data includes business context to quantify the business impact of events and incidents (34%)

- Users broadly have access to telemetry data and visualizations (32%)

- Instrumentation is automated (25%)

- Telemetry is captured across the full tech stack (25%)

What to look for in observability tools

Observability tools encompass a range of capabilities that monitor and analyze data from a wide cross-section of infrastructure components and software. As you consider the observability tools you need, keep these critical aspects in mind:

- Integration: Whether you choose open-source or commercially available observability tools, they’ll need careful integration with your entire stack, from languages and frameworks to hardware and software.

- Ease of use: If tools aren’t easy to implement, they won’t get applied, and you’ll miss the advantages of the capabilities they offer.

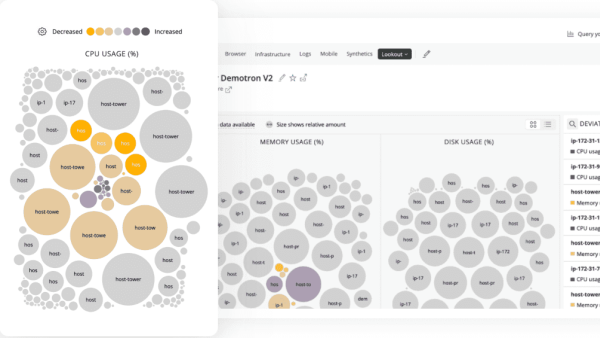

- Timely information: Real-time data presented in rich and intuitive dashboards with intelligent analysis and insight should be the goal of your observability tools.

- Insight, not just information: Visualization of data and analysis should be more than just graphs. Dashboards should present the context of the data so you can understand the problems clearly.

- Integrate AI: ML tools should be built in to help automate troubleshooting and provide predictive analytics.

- A single source of truth: There are too many observability tools to manage individually. An observability platform should present the insight you need when you need it.

- Worth the money: There’s always an investment, whether it’s the human effort to integrate and tune open-source tools or the capital expenditure to implement commercial ones. The ROI, for both human and capital expense, must be worth it for the business.
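As a small taste of predictive analytics, the sketch below fits an ordinary least-squares line to recent disk-usage samples and extrapolates when the disk would fill. This is a plain statistical trend, far simpler than the ML models an observability platform would use, and the `hours_until_full` function and its inputs are hypothetical.

```python
from typing import Optional, Sequence


def hours_until_full(
    hours: Sequence[float], usage_pct: Sequence[float], capacity: float = 100.0
) -> Optional[float]:
    """Fit usage = slope * hour + intercept by least squares and extrapolate how
    many hours after the last sample usage reaches `capacity`.
    Assumes samples are in time order. Returns None if usage is flat or falling."""
    n = len(hours)
    if n < 2 or n != len(usage_pct):
        raise ValueError("need at least two paired samples")
    mean_x = sum(hours) / n
    mean_y = sum(usage_pct) / n
    sxx = sum((x - mean_x) ** 2 for x in hours)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(hours, usage_pct))
    if sxx == 0:
        raise ValueError("samples need distinct timestamps")
    slope = sxy / sxx
    if slope <= 0:
        return None
    intercept = mean_y - slope * mean_x
    return (capacity - intercept) / slope - hours[-1]


print(hours_until_full([0, 1, 2, 3], [50, 52, 54, 56]))  # 22.0
```

Usage grows 2 points per hour from 50%, so the disk reaches 100% at hour 25, which is 22 hours after the last sample.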

The New Relic Intelligent Observability Platform comprises 775+ quickstart integrations and more than 30 capabilities with AI-powered insights built in. The platform offers complete visibility across your entire stack and unlimited scalability, so you can future-proof your operations. New Relic’s all-in-one platform provides a single source of truth and eliminates data, tool, and team silos.

New Relic was named a leader in the 2024 Gartner Magic Quadrant for Observability Platforms for the 12th consecutive time, emphasizing our ongoing commitment to delivering the best observability tools and capabilities for customers.

Most common observability use cases

Site reliability engineering (SRE) and ITOps teams use observability to keep complex systems running reliably: reducing downtime, accelerating incident detection, and improving mean time to resolution (MTTR). But observability delivers benefits across the entire software development lifecycle, not just in operations.

Software engineering teams gain real-time visibility into system health, performance, and error patterns by analyzing outputs like events, metrics, logs, and traces. This enables faster root cause analysis and more confident deployments.

Improve software performance

For DevOps teams specifically, observability addresses the performance challenges that come with accelerating release cycles, giving teams the insight they need to identify bottlenecks, validate changes, and maintain application reliability at scale.

Read how one of South America’s largest software development organizations used observability to solve development challenges.

Simplify observability and improve web performance

As infrastructures grow more complex and companies add monitoring and other tools to keep up, juggling multiple observability dashboards can lengthen the time it takes to synthesize the data they show. A single source of truth with integrated tools can shorten the time engineers need to understand problems and help improve mean time to detection (MTTD), MTTR, and software performance.
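Both metrics are averages over incidents. Here is a sketch using Python’s `datetime`; definitions vary between teams, and this one assumes MTTD runs from when an incident started to when it was detected, and MTTR from detection to resolution. The `Incident` type and helper names are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List


@dataclass
class Incident:
    started: datetime
    detected: datetime
    resolved: datetime


def _mean(deltas: List[timedelta]) -> timedelta:
    return sum(deltas, timedelta()) / len(deltas)


def mttd(incidents: List[Incident]) -> timedelta:
    """Mean time to detection: start of incident to detection."""
    return _mean([i.detected - i.started for i in incidents])


def mttr(incidents: List[Incident]) -> timedelta:
    """Mean time to resolution, measured here from detection to resolution."""
    return _mean([i.resolved - i.detected for i in incidents])


t0 = datetime(2024, 1, 1, 12, 0)
history = [
    Incident(t0, t0 + timedelta(minutes=5), t0 + timedelta(minutes=35)),
    Incident(t0, t0 + timedelta(minutes=15), t0 + timedelta(minutes=45)),
]
print(mttd(history), mttr(history))  # 0:10:00 0:30:00
```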

Read how one company improved their core web metrics by consolidating multiple observability tools into a single platform.

Small teams and observability

Small teams can reap significant benefits from observability tools, particularly when faced with limited resources.

In the context of small cross-functional teams, where every member often wears multiple hats, the ability to monitor and analyze the performance of their systems is invaluable.

Observability tools provide a comprehensive view into the health and behavior of your applications and infrastructure, so your team can quickly identify and address issues. This is especially crucial because small teams may not have the luxury of dedicated personnel for each component of their stack.

By automating data collection and providing real-time insights, observability tools allow team members to focus their efforts more efficiently and reduce the time spent on reviewing and debugging individual servers.

To see this in action, read how one of our customers significantly improved efficiency with New Relic.

Observability tools empower small teams to maximize their productivity, streamline troubleshooting, and ultimately deliver a more reliable and responsive user experience without straining their limited resources.

Observability and DevOps

Deployment frequency has increased dramatically with microservices. Too much is changing to realistically expect teams to predefine every possible failure mode in their environments. Change comes not only from application code but also from the infrastructure that supports it and from shifts in consumer behavior and demand.

Observability gives DevOps teams the flexibility they need to test their systems in production, ask questions, and investigate issues that they couldn’t originally predict.

Observability helps DevOps teams:

- Establish clear service-level objectives (SLOs) and put instrumentation in place so the whole team can work toward measurable success.

- Rally around team dashboards, orchestrate responses, and measure the effects of every change to enhance DevOps practices.

- Review progress, analyze application dependencies and infrastructure resources, and find ways to continually improve the experience for the users of their software.
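An SLO becomes measurable once it’s paired with an error budget: the share of requests allowed to fail in a given window. A minimal sketch, assuming a request-based availability SLO; the `error_budget_report` function and the numbers are hypothetical.

```python
from typing import Any, Dict


def error_budget_report(slo_target: float, total_requests: int, failed_requests: int) -> Dict[str, Any]:
    """Summarize a request-based availability SLO over one window."""
    if not 0 < slo_target < 1 or total_requests <= 0:
        raise ValueError("slo_target must be in (0, 1) and total_requests > 0")
    allowed_failures = (1 - slo_target) * total_requests
    return {
        "availability": 1 - failed_requests / total_requests,
        "allowed_failures": allowed_failures,
        # Fraction of the error budget used; above 1.0 means the SLO is missed.
        "budget_consumed": failed_requests / allowed_failures,
        "slo_met": failed_requests <= allowed_failures,
    }


report = error_budget_report(slo_target=0.999, total_requests=1_000_000, failed_requests=400)
print(round(report["budget_consumed"], 2))  # 0.4
```

With a 99.9% target over a million requests, the budget is about 1,000 failures, so 400 failures use roughly 40% of it and leave room for the next release.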

TL;DR on observability

Observability provides a proactive approach to troubleshooting and optimizing software systems effectively. It offers a real-time and interconnected perspective on all operational data within a software system, enabling on-the-fly inquiries about applications and infrastructure.

In the modern era of complex systems developed by distributed teams, observability is essential. Observability goes beyond traditional monitoring by allowing engineers to understand not only what is wrong but also why.

It encompasses open instrumentation, correlation, context analysis, programmability, and AIOps tools to make sense of telemetry data. Observability tools enhance customer experience, reduce downtime, improve team efficiency, and foster a culture of innovation across all teams.


Modern observability empowers software engineers and developers with a data-driven approach across the entire software lifecycle. It brings all telemetry—events, metrics, logs, and traces—into a unified data platform with powerful full-stack analysis tools that enable them to plan, build, deploy, and run great software to deliver great digital experiences that fuel innovation and growth.

Dive into the 2024 Observability Forecast to see insights and best practices uncovered in the research.

The best way to learn more about observability is to get hands-on experience with a modern, unified observability platform. Get started with New Relic.

The views expressed on this blog are those of the author and do not necessarily reflect the views of New Relic. Any solutions offered by the author are environment-specific and not part of the commercial solutions or support offered by New Relic. Please join us exclusively at the Explorers Hub (discuss.newrelic.com) for questions and support related to this blog post. This blog may contain links to content on third-party sites. By providing such links, New Relic does not adopt, guarantee, approve or endorse the information, views or products available on such sites.