Learn how to get Kubernetes observability by reading the Kubernetes monitoring guide.

What is Kubernetes?

You’ve likely heard the term Kubernetes in connection with containerization – but what is Kubernetes, and how does it reduce the manual effort involved in running containers? Originally developed by Google, Kubernetes is an open-source container orchestration platform designed to automate the deployment, scaling, and management of containerized applications.

In fact, Kubernetes has established itself as the de facto standard for container orchestration and is the flagship project of the Cloud Native Computing Foundation (CNCF), backed by key players like Google, Amazon Web Services (AWS), Microsoft, IBM, Intel, Cisco, and Red Hat.

Although it’s commonly associated with the cloud, Kubernetes (sometimes referred to as K8s) operates in a variety of environments, from cloud-based to traditional servers to hybrid cloud infrastructure.

Container-based microservices architectures have profoundly changed the way development and operations teams test and deploy modern software. Containers help companies modernize by making it easier to scale and deploy applications, but containers have also introduced new challenges and more complexity by creating an entirely new infrastructure ecosystem.

Large and small software companies alike are now deploying thousands of container instances daily, and that’s a complexity of scale they have to manage. So how do they do it?

Kubernetes makes it easy to deploy and operate applications in a microservice architecture. It does so by creating an abstraction layer on top of a group of hosts, so that development teams can deploy their applications and let Kubernetes manage the following activities:

- Controlling resource consumption by application or team

- Evenly spreading application load across a host infrastructure

- Automatically load balancing requests across the different instances of an application

- Monitoring resource consumption and resource limits to automatically stop applications from consuming too many resources, and restarting those applications afterward

- Moving an application instance from one host to another if there is a shortage of resources in a host, or if the host dies

- Automatically leveraging additional resources made available when a new host is added to the cluster

- Easily performing canary deployments and rollbacks

In this article, we’ll take a deeper dive into the realm of Kubernetes – what it is, why organizations are using it, and best practices for using this innovative, time-saving technology to its fullest potential.

What is Kubernetes used for and why use it?

Sure, you know what Kubernetes is, but what does Kubernetes do, specifically? Kubernetes automates various aspects of containerized applications, giving developers a scalable, simplified way to manage a network of complex applications across clustered servers.

Kubernetes streamlines container operations, distributing traffic for greater availability. Depending on how much traffic your site or server receives, Kubernetes allows applications to adapt to changes automatically, directing the right amount of workload resources on an as-needed basis. This helps reduce site downtime due to traffic spikes or network outages.

Kubernetes also aids in service discovery, helping clustered applications communicate with each other without prior knowledge of IP addresses or endpoint configuration. Additionally, Kubernetes consistently monitors the health of your containers, automatically detecting and replacing containers that have failed or restarting stalled containers to keep operations up and running smoothly.

So, why use Kubernetes?

As more and more organizations move to microservice and cloud native architectures that make use of containers, they’re looking for strong, proven platforms. Practitioners are using Kubernetes for four main reasons:

1. Kubernetes helps you move faster. Indeed, Kubernetes allows you to deliver a self-service platform-as-a-service (PaaS) that creates a hardware abstraction layer for development teams. Your development teams can quickly and efficiently request the resources they need. If they need more resources to handle additional load, they can get those just as quickly, since resources all come from an infrastructure shared across all your teams.

No more filling out forms to request new machines to run your application! Just provision and go, and take advantage of the tooling developed around Kubernetes for automating packaging, deployment, and testing. (We'll talk more about Helm in an upcoming section.)

2. Kubernetes is cost-efficient. Kubernetes and containers allow for much better resource utilization than hypervisors and VMs do. Because containers are so lightweight, they require less CPU and memory resources to run.

3. Kubernetes is cloud-agnostic. Kubernetes runs on Amazon Web Services (AWS), Microsoft Azure, and the Google Cloud Platform (GCP), and you can also run it on-premises. You can move workloads without having to redesign your applications or completely rethink your infrastructure—which lets you standardize on a platform and avoid vendor lock-in.

In fact, companies like Kublr, Cloud Foundry, and Rancher provide tooling to help you deploy and manage your Kubernetes cluster on-premises or on whatever cloud provider you want.

4. Cloud providers will manage Kubernetes for you. As noted earlier, Kubernetes is currently the clear standard for container orchestration tools. It should come as no surprise that major cloud providers offer plenty of Kubernetes-as-a-service options. Amazon EKS, Google Kubernetes Engine (GKE), Azure Kubernetes Service (AKS), Red Hat OpenShift, and IBM Cloud Kubernetes Service all provide full Kubernetes platform management, so you can focus on what matters most to you: shipping applications that delight your users.

So, how does Kubernetes work?

Within the Kubernetes hierarchy, there are clusters, nodes, and pods. These components work together to support containerized applications. A cluster is composed of various nodes responsible for running applications. Each node represents either a physical (on-premises) or virtual (cloud-based) machine that helps applications to function. Each node runs one or more pods – the smallest deployable units in Kubernetes. Each pod holds one or more containers. Think of pods as “containers for containers” that provide the necessary resources for containers to run smoothly, including information related to networking, storage, and scheduling.
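
To make the hierarchy concrete, here is a minimal Python sketch of the cluster, node, pod, and container relationship. The class names, the example image names, and the `container_count` helper are illustrative assumptions, not part of any Kubernetes API.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Container:
    name: str
    image: str  # hypothetical image reference, for illustration only


@dataclass
class Pod:
    name: str
    containers: List[Container] = field(default_factory=list)


@dataclass
class Node:
    name: str
    pods: List[Pod] = field(default_factory=list)


@dataclass
class Cluster:
    name: str
    nodes: List[Node] = field(default_factory=list)

    def container_count(self) -> int:
        """Count every container by walking cluster -> node -> pod."""
        return sum(len(pod.containers) for node in self.nodes for pod in node.pods)


def example_cluster() -> Cluster:
    """Build a two-node cluster: one pod with two containers, one pod with one."""
    web = Pod("web", [Container("app", "example/web:1.0"),
                      Container("log-shipper", "example/logs:1.0")])
    db = Pod("db", [Container("postgres", "example/db:1.0")])
    return Cluster("demo", [Node("node-a", [web]), Node("node-b", [db])])


if __name__ == "__main__":
    print(example_cluster().container_count())
```

The nesting mirrors the description above: a pod groups containers, a node hosts pods, and a cluster groups nodes.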

That’s a slightly simplified explanation of how Kubernetes works. On a deeper level, the central component of Kubernetes is the cluster. A cluster is made up of many virtual or physical machines that each serve a specialized function either as a master or as a node. Each node hosts groups of one or more containers (which contain your applications), and the master communicates with nodes about when to create or destroy containers. At the same time, it tells nodes how to re-route traffic based on new container alignments.

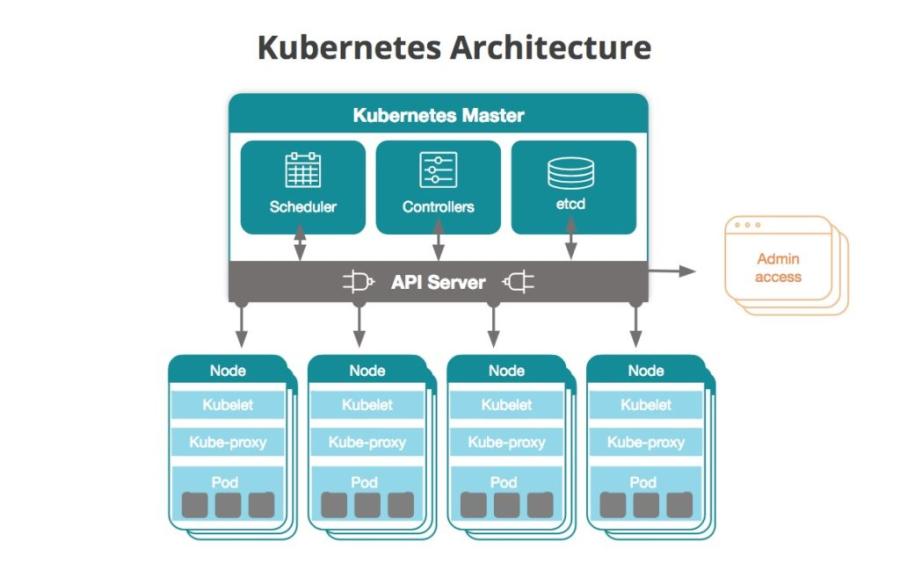

The following diagram depicts a general outline of a Kubernetes cluster:

The Kubernetes master

The Kubernetes master is the access point (or the control plane) from which administrators and other users interact with the cluster to manage the scheduling and deployment of containers. A cluster will always have at least one master, but may have more depending on the cluster’s replication pattern.

The master stores the state and configuration data for the entire cluster in etcd, a persistent and distributed key-value data store. Nodes do not read etcd directly; they learn how to maintain the configurations of the containers they’re running through the API server, which is the component that reads from and writes to etcd. You can run etcd on the Kubernetes master, or in standalone configurations.

Masters communicate with the rest of the cluster through the kube-apiserver, the main access point to the control plane. For example, the kube-apiserver makes sure that configurations in etcd match with configurations of containers deployed in the cluster.

The kube-controller-manager handles control loops that manage the state of the cluster through the Kubernetes API server. Deployments, replicas, and nodes all have controls handled by this service. For example, the node controller is responsible for registering a node and monitoring its health throughout its lifecycle.
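
A control loop of this kind repeatedly compares the desired state with the observed state and acts on the difference. The following is a simplified sketch of that idea, not the controller manager's actual code; the `reconcile` function and the workload names are hypothetical.

```python
from typing import Dict, List


def reconcile(desired: Dict[str, int], actual: Dict[str, int]) -> List[str]:
    """Return the actions needed to move `actual` replica counts toward `desired`.

    Both arguments map a workload name to a number of running pods.
    """
    actions = []
    for name in sorted(set(desired) | set(actual)):
        want = desired.get(name, 0)
        have = actual.get(name, 0)
        if have < want:
            actions.append(f"create {want - have} pod(s) for {name}")
        elif have > want:
            actions.append(f"delete {have - want} pod(s) for {name}")
        # When have == want there is nothing to do for this workload.
    return actions


if __name__ == "__main__":
    for action in reconcile({"web": 3, "api": 2}, {"web": 1, "api": 2, "old": 1}):
        print(action)
```

A real controller runs this comparison continuously and reacts to change events from the API server; the sketch shows a single pass.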

Node workloads in the cluster are tracked and managed by the kube-scheduler. This service keeps track of the capacity and resources of nodes and assigns work to nodes based on their availability.
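
As a rough illustration of capacity-based placement, the sketch below filters out nodes that cannot fit a request and then prefers the node with the most free CPU. This is a deliberate simplification: the real kube-scheduler weighs many more factors, and the `pick_node` function and node names are assumptions made for the example.

```python
from typing import Dict, Optional


def pick_node(free_cpu_millicores: Dict[str, int], requested: int) -> Optional[str]:
    """Pick a node for a pod that requests `requested` millicores of CPU."""
    # Filter step: keep only nodes with enough free CPU for the request.
    feasible = {node: free for node, free in free_cpu_millicores.items()
                if free >= requested}
    if not feasible:
        return None  # No node can fit the pod; it would stay pending.
    # Score step: prefer the node with the most free CPU, break ties by name.
    return min(feasible, key=lambda node: (-feasible[node], node))


if __name__ == "__main__":
    print(pick_node({"node-a": 500, "node-b": 1500, "node-c": 200}, requested=400))
```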

The cloud-controller-manager is a service running in Kubernetes that helps keep it cloud-agnostic. The cloud-controller-manager serves as an abstraction layer between the APIs and tools of a cloud provider (for example, storage volumes or load balancers) and their representational counterparts in Kubernetes.

Nodes

All nodes in a Kubernetes cluster must be configured with a container runtime, such as containerd or CRI-O (Docker Engine was the usual choice in older clusters). The container runtime starts and manages the containers as Kubernetes deploys them to nodes in the cluster. Your applications (web servers, databases, API servers, etc.) run inside the containers.

Each Kubernetes node runs an agent process called a kubelet that is responsible for managing the state of the node: starting, stopping, and maintaining application containers based on instructions from the control plane. The kubelet collects performance and health information from the node, pods, and containers that it runs. It shares that information with the control plane to help it make scheduling decisions.

The kube-proxy is a network proxy that runs on nodes in the cluster. It also works as a load balancer for services running on a node.

The basic scheduling unit is a pod, which consists of one or more containers that are guaranteed to be co-located on the same host machine and can share resources. Each pod is assigned a unique IP address within the cluster, allowing the application to use ports without conflict.

You describe the desired state of the containers in a pod through a YAML or JSON object called a Pod Spec. These objects are passed to the kubelet through the API server.
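
As an illustration, a minimal pod description can be built as a plain Python dictionary and serialized to JSON. The `pod_manifest` helper, the pod name, and the image tag are assumptions chosen for the example; the field names (`apiVersion`, `kind`, `metadata`, `spec.containers`) follow the standard Pod object layout.

```python
import json


def pod_manifest(name: str, image: str, port: int) -> dict:
    """Build a single-container Pod object as a dictionary."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name, "labels": {"app": name}},
        "spec": {
            "containers": [
                {
                    "name": name,
                    "image": image,
                    "ports": [{"containerPort": port}],
                }
            ]
        },
    }


if __name__ == "__main__":
    # Example values only; substitute your own name, image, and port.
    print(json.dumps(pod_manifest("web", "nginx:1.25", 80), indent=2))
```

The printed JSON could be saved to a file and submitted to the API server, for example with `kubectl apply -f`.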

A pod can define one or more volumes, such as a local disk or network disk, and expose them to the containers in the pod, which allows different containers to share storage space. For example, volumes can be used when one container downloads content and another container uploads that content somewhere else.

Since pods and the containers inside them are often ephemeral, Kubernetes offers a type of load balancer, called a service, to simplify sending requests to a group of pods. A service targets a logical set of pods selected based on labels (explained below). By default, services can be accessed only from within the cluster, but you can enable public access to them as well if you want them to receive requests from outside the cluster.
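
The sketch below shows what a service object might look like when built in Python. The `service_manifest` helper and its `public` flag are hypothetical conveniences; `ClusterIP` (reachable only inside the cluster) and `LoadBalancer` (one way to accept outside traffic) are standard service types.

```python
import json
from typing import Dict


def service_manifest(name: str, selector: Dict[str, str], port: int,
                     target_port: int, public: bool = False) -> dict:
    """Build a Service that forwards `port` to `target_port` on matching pods."""
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            # ClusterIP keeps the service internal; LoadBalancer exposes it.
            "type": "LoadBalancer" if public else "ClusterIP",
            # Pods whose labels match this selector receive the traffic.
            "selector": dict(selector),
            "ports": [{"port": port, "targetPort": target_port}],
        },
    }


if __name__ == "__main__":
    print(json.dumps(service_manifest("web", {"app": "web"}, 80, 8080), indent=2))
```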

Deployments and replicas

A deployment is an object, usually written in YAML, that defines a pod template and the number of identical pod instances, called replicas, that should run. The deployment manages a ReplicaSet, which keeps the number of replicas you asked for running in the cluster. So, for example, if a node running a pod dies, the ReplicaSet ensures that another pod is scheduled on another available node.
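
For illustration, here is a minimal deployment object built as a Python dictionary. The `deployment_manifest` helper and the example name and image are assumptions; the structure (`spec.replicas`, `spec.selector.matchLabels`, `spec.template`) follows the `apps/v1` Deployment layout.

```python
import json


def deployment_manifest(name: str, image: str, replicas: int) -> dict:
    """Build a Deployment that keeps `replicas` copies of one pod template running."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name},
        "spec": {
            "replicas": replicas,
            # The selector must match the labels on the pod template below.
            "selector": {"matchLabels": dict(labels)},
            "template": {
                "metadata": {"labels": dict(labels)},
                "spec": {"containers": [{"name": name, "image": image}]},
            },
        },
    }


if __name__ == "__main__":
    print(json.dumps(deployment_manifest("web", "nginx:1.25", 3), indent=2))
```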

A DaemonSet deploys and runs a specific daemon (in a pod) on nodes you specify. DaemonSets are most often used to provide services or maintenance to pods. For example, a DaemonSet is how New Relic Infrastructure gets the Infrastructure agent deployed across all nodes in a cluster.

Namespaces

Namespaces allow you to create virtual clusters on top of a physical cluster. Namespaces are intended for use in environments with many users spread across multiple teams or projects. They let you assign resource quotas and logically isolate cluster resources.
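
As a sketch of how a namespace and its quota fit together, the example below builds both objects as dictionaries. The `namespace_with_quota` helper, the team name, and the quota values are hypothetical; `requests.cpu`, `requests.memory`, and `pods` are standard ResourceQuota keys.

```python
import json
from typing import List


def namespace_with_quota(team: str, cpu: str, memory: str, max_pods: int) -> List[dict]:
    """Build a Namespace plus a ResourceQuota that caps what the team can request."""
    namespace = {
        "apiVersion": "v1",
        "kind": "Namespace",
        "metadata": {"name": team},
    }
    quota = {
        "apiVersion": "v1",
        "kind": "ResourceQuota",
        "metadata": {"name": f"{team}-quota", "namespace": team},
        "spec": {
            "hard": {
                "requests.cpu": cpu,
                "requests.memory": memory,
                "pods": str(max_pods),
            }
        },
    }
    return [namespace, quota]


if __name__ == "__main__":
    print(json.dumps(namespace_with_quota("team-a", "4", "8Gi", 20), indent=2))
```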

Labels

Labels are key/value pairs that you can assign to pods and other objects in Kubernetes. Labels allow Kubernetes operators to organize and select a subset of objects. For example, when monitoring Kubernetes objects, labels let you quickly drill down to the information you’re most interested in.
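
Label selection can be pictured as a simple filter. This sketch covers only equality-based matching, where every key in the selector must be present on the object with the same value. The function names and pod names are made up for the example.

```python
from typing import Dict, List


def matches(labels: Dict[str, str], selector: Dict[str, str]) -> bool:
    """True when every key/value pair in `selector` appears in `labels`."""
    return all(labels.get(key) == value for key, value in selector.items())


def select(objects: Dict[str, Dict[str, str]], selector: Dict[str, str]) -> List[str]:
    """Return the names of objects whose labels satisfy the selector."""
    return [name for name, labels in objects.items() if matches(labels, selector)]


if __name__ == "__main__":
    pods = {
        "web-1": {"app": "web", "env": "prod"},
        "web-2": {"app": "web", "env": "staging"},
        "db-1": {"app": "db", "env": "prod"},
    }
    print(select(pods, {"app": "web", "env": "prod"}))
```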

StatefulSets and persistent storage volumes

StatefulSets give you the ability to assign unique IDs to pods in case you need to move pods to other nodes, maintain networking between pods, or persist data between them. Similarly, persistent storage volumes provide storage resources for a cluster to which pods can request access as they’re deployed.
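
The stable identity comes from a predictable naming scheme: each pod in a StatefulSet is named after the set plus an ordinal index, and each storage claim created from a volume claim template is named after the template plus the pod. The helpers below sketch that scheme; the function names and the `web` and `www` example values are illustrative.

```python
from typing import List


def stateful_pod_names(set_name: str, replicas: int) -> List[str]:
    """Pods in a StatefulSet are named <set-name>-<ordinal>, starting at 0."""
    return [f"{set_name}-{ordinal}" for ordinal in range(replicas)]


def claim_names(template_name: str, set_name: str, replicas: int) -> List[str]:
    """Each pod gets its own claim named <template-name>-<pod-name>."""
    return [f"{template_name}-{pod}" for pod in stateful_pod_names(set_name, replicas)]


if __name__ == "__main__":
    print(stateful_pod_names("web", 3))
    print(claim_names("www", "web", 3))
```

Because the names are deterministic, a pod that is rescheduled to another node comes back with the same name and reattaches to the same claim.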

Other useful components

The following Kubernetes components are useful but not required for regular Kubernetes functionality.

Kubernetes DNS

Kubernetes provides this mechanism for DNS-based service discovery between pods. This DNS server works in addition to any other DNS servers you might use in your infrastructure.
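
In practice, this means a service can be reached by a predictable name rather than an IP address. The helper below sketches the conventional name format, assuming the default cluster domain `cluster.local`; the function name and the example service and namespace are hypothetical.

```python
def service_dns_name(service: str, namespace: str = "default",
                     cluster_domain: str = "cluster.local") -> str:
    """Build the fully qualified in-cluster DNS name for a service."""
    return f"{service}.{namespace}.svc.{cluster_domain}"


if __name__ == "__main__":
    # A pod could connect to this name instead of a hard-coded IP address.
    print(service_dns_name("payments", namespace="shop"))
```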

Cluster-level logs

If you have a logging tool, you can integrate it with Kubernetes to extract and store application and system logs from within a cluster, written to standard output and standard error. If you want to use cluster-level logs, it’s important to note that Kubernetes does not provide native log storing; you must provide your own log storage solution.

Helm: managing Kubernetes applications

Helm is a package manager for Kubernetes applications, maintained by the CNCF. Helm charts are pre-configured packages of application resources that you can download and deploy in your Kubernetes environment. According to a 2020 CNCF survey, 63% of respondents said Helm was the preferred package management tool for Kubernetes applications. Helm charts can help DevOps teams come up to speed more quickly with managing applications in Kubernetes, because teams can use existing charts that they can share, version, and deploy into their development and production environments.

Kubernetes and Istio: a popular pairing

In a microservices architecture like those that run in Kubernetes, a service mesh is an infrastructure layer that allows your service instances to communicate with one another. The service mesh also lets you configure how your service instances perform critical actions such as service discovery, load balancing, data encryption, and authentication and authorization. Istio is one such service mesh, and current thinking from tech leaders like Google and IBM suggests that Kubernetes and Istio are becoming increasingly inseparable.

The IBM Cloud team, for example, uses Istio to address the control, visibility, and security issues it has encountered while deploying Kubernetes at massive scale. More specifically, Istio helps IBM:

- Connect services together and control the flow of traffic

- Secure interactions between microservices with flexible authorization and authentication policies

- Provide a control point so IBM can manage services in production

- Observe what’s happening in its services, via an adapter that sends Istio data to New Relic, so it can monitor microservice performance data from Kubernetes alongside the application data it's already gathering

Challenges to Kubernetes adoption

The Kubernetes platform clearly has come a long way since it was first released. That kind of rapid growth, though, also involves occasional growing pains. Here are a few challenges with Kubernetes adoption:

1. The Kubernetes technology landscape can be confusing. One thing developers love about open-source technologies like Kubernetes is the potential for fast-paced innovation. But sometimes too much innovation creates confusion, especially when the central Kubernetes code base moves faster than users can keep up with it. Add a plethora of platforms and managed service providers, and it can be hard for new adopters to make sense of the landscape.

2. Forward-thinking dev and IT teams don’t always align with business priorities. When budgets are only allocated to maintain the status quo, it can be hard for teams to get funding to experiment with Kubernetes adoption initiatives, as such experiments often absorb a significant amount of time and team resources. Additionally, enterprise IT teams are often averse to risk and slow to change.

3. Teams are still acquiring the skills required to leverage Kubernetes. It was only a few years ago that developers and IT operations folks had to readjust their practices to adopt containers, and now they have to adopt container orchestration as well. Enterprises hoping to adopt Kubernetes need to hire professionals who can code and who also know how to manage operations and understand application architecture, storage, and data workflows.

4. Kubernetes can be difficult to manage. In fact, you can read any number of Kubernetes horror stories, everything from DNS outages to “a cascading failure of distributed systems,” in the Kubernetes Failure Stories GitHub repo.

Kubernetes best practices

Optimize your Kubernetes infrastructure for performance, reliability, security, and scalability by following these best practices:

- Resource requests and limits: Define resource requests and limits for containers. Requests specify the minimum resources required, while limits prevent containers from using excess resources. This ensures fair resource allocation and prevents resource contention.

- Liveness and readiness probes: Implement liveness and readiness probes in your containers. Liveness probes determine whether a running container is still healthy or needs to be restarted, and readiness probes indicate when a container is ready to serve traffic. Properly configured probes enhance application reliability.

- Secrets management: Use Kubernetes Secrets to store sensitive information such as passwords and API keys. To enhance security, avoid hardcoding secrets in configuration files or Docker images.

- Network policies: Implement network policies to control the communication between pods. Network policies help define rules for ingress and egress traffic, improving cluster security.

- RBAC (Role-based access control): Implement RBAC to restrict cluster access. To enhance security, define roles and role bindings that provide the minimum necessary privileges to users and services.

- Immutable infrastructure: Treat your infrastructure as immutable. Avoid making changes directly to running containers. Instead, redeploy with the necessary modifications. Immutable infrastructure simplifies management and reduces the risk of configuration drift.

- Backup and disaster recovery: Regularly back up critical data and configurations. Implement disaster recovery plans to restore your cluster in case of failures.

- Horizontal pod autoscaling (HPA): Use horizontal pod autoscaling to automatically scale the number of pods in a deployment based on CPU utilization or custom metrics. HPA ensures optimal resource usage and application performance.

- Monitoring and logging: Set up monitoring and logging solutions to gain insights into cluster health and application behavior.
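
Two of the practices above lend themselves to a short sketch: a container spec with resource requests, limits, and probes, and the replica calculation behind horizontal pod autoscaling. The helper names, the resource values, and the `/healthz` and `/ready` paths are assumptions for illustration. The replica formula is the basic ratio the autoscaler uses; the real controller also applies a tolerance and minimum and maximum bounds that are omitted here.

```python
import math


def container_with_guardrails(name: str, image: str, port: int) -> dict:
    """Build a container spec with requests, limits, and health probes."""
    return {
        "name": name,
        "image": image,
        "resources": {
            # Requests are what the scheduler reserves; limits are the ceiling.
            "requests": {"cpu": "250m", "memory": "128Mi"},
            "limits": {"cpu": "500m", "memory": "256Mi"},
        },
        # Hypothetical endpoints; your application must actually serve them.
        "livenessProbe": {"httpGet": {"path": "/healthz", "port": port},
                          "periodSeconds": 10},
        "readinessProbe": {"httpGet": {"path": "/ready", "port": port},
                           "periodSeconds": 5},
    }


def desired_replicas(current_replicas: int, current_metric: float,
                     target_metric: float) -> int:
    """Scale the replica count by the ratio of observed to target metric."""
    return math.ceil(current_replicas * current_metric / target_metric)


if __name__ == "__main__":
    print(container_with_guardrails("web", "nginx:1.25", 80)["resources"])
    # Four pods averaging 90% CPU against a 60% target.
    print(desired_replicas(4, 90, 60))
```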

New Relic can support your Kubernetes journey

You need to fully understand the performance of your clusters and workloads, and you need to be able to do so quickly and easily. With New Relic, you can troubleshoot faster by analyzing all your clusters in one user interface, without updating code, redeploying your apps, or enduring long standardization processes within your teams. Auto-telemetry with Pixie gives you instant visibility into your Kubernetes clusters and workloads in minutes.

The Kubernetes cluster explorer provides one place where you can analyze all your Kubernetes entities—nodes, namespaces, deployments, ReplicaSets, pods, containers, and workloads. Each slice of the “pie” represents a node, and each hexagon represents a pod. Select a pod to analyze the performance of your applications, including accessing related log files.

Analyze the performance of pods and applications

You can analyze each application’s distributed traces. By clicking on an individual span, you see related Kubernetes attributes (for example, related pods, clusters, and deployments).

And, you can also get distributed tracing data from applications running in your clusters.

Next steps

Get started today by deploying Auto-telemetry with Pixie.

This post was updated from a previous version published in July 2018.
