MySQL is an open source relational database system that, like Linux, started its history as a personal side project. Over its 25 years of history, MySQL has gained significant traction, and today a broad range of companies, such as Sony and Uber, use it to run their multi-petabyte mission-critical databases.

In a way, MySQL has been a victim of its own success. It’s easy enough to run that developers may take it for granted and assume it doesn’t need supervision. In reality, MySQL is a complex system with a delicate balance you must monitor closely. It exposes a lot of useful metrics you can’t afford to miss because they highlight where bottlenecks are, when it’s time to upgrade, and what queries you should optimize.

Like most relational databases, MySQL organizes data using databases (also called schemas) and tables. However, MySQL has a unique modular architecture that lets you choose the best low-level storage engine for the job. MySQL exposes its inner workings in all these levels through dozens of metrics.

MySQL is also extremely flexible. You can run it as a single instance, as a primary-secondary cluster, thank to replication, or in multi-master mode. And third-party solutions like Vitess allow you to create horizontally-scaled clusters.

Key MySQL Metrics to Monitor

Let's start by reviewing some of the most important metrics to monitor in any MySQL instance. To view all available metrics, check out our MySQL integration docs (which we’ll walk through setting up below).

Uptime

It may sound self-evident, but many teams set alerts for server downtime while forgetting to monitor the MySQL process itself. When the database is down, you want to get notified immediately.

Connections

MySQL sets a hard limit on the number of simultaneous connections. When you reach it, new connections are blocked until someone disconnects.

MySQL allows 151 connections by default. Changing the limit is simple:

SET GLOBAL max_connections = 200;

Bear in mind that each connection needs at least 3MB, so always try to keep the number of connections as small as possible.

To determine the optimum number of connections to set, monitor three metrics:

net.maxUsedConnections: The maximum number of connections recorded since the database started. Use this value as a reference to setmax_connections.net.threadsConnected: The current number of active connections.net.connectionErrorsMaxConnectionsPerSecond: The number of connections failed per second due to themax_connectionslimit being reached. If the value is higher than zero, you need to either raise the limit, set up connection pools for your applications, or consider installing a load balancer like ProxySQL.

Network issues and buggy clients can also affect MySQL connections. Watch net.abortedClientsPerSecond to detect applications not closing connections properly. A high rate usually indicates problems in the network.

Memory usage

Databases need a lot of memory to work well, so monitoring memory is vital to keep your MySQL in top shape.

First, set up monitoring for the server to ensure it has enough RAM:

memoryFreeByesmemoryUsedBytesmemoryTotalByes

Then, on the MySQL side, monitor these metrics:

db.innodb.bufferPoolPagesTotal: The number of pages in-memory holding data. You want this number to be as high as possible to reduce disk activity and increase performance.db.innodb.bufferPoolReadsPerSecond: The number of pages not found in-memory that need to be retrieved from disk. If the value is more than 5-15% ofdb.innodb.bufferPoolPagesTotal, the database needs more memory. In that case, check the server RAM and increase thedb.innodb_buffer_pool_sizesetting on MySQL.db.innodb.logWaitsPerSecond: If this value is consistently high, it means that the log buffer is set too low. In that case, increaseinnodb_log_buffer_sizeuntil the problem goes away.

Storage speed

After memory, disk I/O speed is the most crucial factor for database performance. Even if the system has enough RAM to allocate the complete database, it’ll still need disk I/O to ensure transaction consistency.

The main database workload shapes disk activity. For Online Transaction Processing (OLTP) systems (e.g., systems used for online purchase processing), the following metrics should be smooth and steady. Peaks indicate possible bottlenecks and latency for your users. On the other hand, for Online Analytical Processing (OLAP) systems (e.g., systems used for budgeting and forecasting), uneven activity is a lot more common and should be expected.

To keep an eye on workload, monitor the following:

db.innodb.dataReadsPerSecond: The number of reads per second.db.innodb.dataWritesPerSecond: The number of writes per second.db.innodb.osLogWrittenBytesPerSecond: The transaction log throughput. This value is proportional to how much the data changes over time.

Query speed

Watch these metrics to monitor query speed and capture a baseline for your database:

query.questionsPerSecond: The number of queries sent by clients.query.queriesPerSecond: The total number of queries per second (QPS), including administrative commands and stored procedures. It measures the raw capacity of the database.query.maxExecutionTimeExceededPerSecond: The number of SELECT statements timed out per second. For OLTP workloads, you never want this value to exceed zero. If you find that your queries are timing out, optimize them.

Query optimization metrics

Query optimization is where you can make the most significant impact on MySQL performance.

To identify long-running queries, activate the MySQL slow query log:

SET GLOBAL slow_query_log = 1; SET GLOBAL slow_query_log_file = '/var/log/mysql/mysql-slow.log';

Then set a reasonable query time to capture the worst-behaving queries on a first pass:

// 3 seconds threshold SET GLOBAL long_query_time = 3;

As performance improves, you can reduce the query time and repeat the process.

Once the feature is active, monitor query.slowQueriesPerSecond, analyze the log, and use an EXPLAIN statement to find which queries do worse. Depending on the case, you may need to rewrite them, add or modify indexes, or restructure your tables.

Here are some additional metrics to monitor while optimizing queries.

Temporary Files and Tables

MySQL creates temporary files and tables on disk for operations such as GROUP BY, ORDER BY, or UNION that don’t fit in-memory. Since these can cause excessive disk I/O, keep a close watch on these metrics:

db.createdTmpDiskTablesPerSecondmeasures tables.db.createdTmpFilesPerSecondmeasures temporary files.

While it’s not always possible to reduce these values to zero, you can minimize temporary disk activity by adjusting sort_buffer_size and join_buffer_size, and rewriting queries.

Locks

MySQL uses table- and row-level locks to ensure data consistency. Poorly written queries and some administrative tasks can lead to long-running locks that block other clients. The main metrics for lock activity are db.tablesLocksWaitedPerSecond for tables, and db.innodb.rowLockTimeAvg and db.innodb.rowLockWaitsPerSecond for rows.

Missing indexes

MySQL uses indexes for filtering, sorting, and joining tables. When queries and table structures don’t line up, MySQL is forced to scan the whole table. This situation uses up a lot of extra memory and causes heavy disk I/O.

Monitor these metrics for details:

db.selectFullJoinPerSecondanddb.selectFullJoinRangePerSecondindicate whether your tables need additional indexes.db.innodb.bufferPoolReadAheadRndPerSecondhelps detect inefficient table-level reads.

Monitoring MySQL with New Relic

Our MySQL integration uses the New Relic Infrastructure agent to collect and send performance metrics from your MySQL database to our platform. You can see your database server’s health and analyze metric data so that you can easily find the source of any problems

The integration is compatible with MySQL version 5.6 or higher.

In the following example, we’ll show you how to set up Infrastructure Monitoring for an Ubuntu server running MySQL. If you have a different host OS, check the agent documentation for alternative instructions.

Note: You can also monitor MySQL as a service running in Kubernetes or ECS.

Install the agent and integration on an Ubuntu server

- From New Relic One, navigate to your account drop-down (in the top-right corner) and select Add more data.

- Select your operating system (in this case Ubuntu), and follow the prompts to get your license key and select your Ubuntu version.

- To deploy the Infrastructure agent and the MySQL integration, run the following commands on your server:

- Import Infrastructure agent GPG Key.

curl -s https://download.newrelic.com/infrastructure_agent/gpg/newrelic-infra.gpg | sudo apt-key add -

- Add the New Relic repository (view all distributions here).

printf "deb [arch=amd64] https://download.newrelic.com/infrastructure_agent/linux/apt bionic main" | sudo tee -a /etc/apt/sources.list.d/newrelic-infra.list

- Install the infrastructure agent (

newrelic-infra) and MySQL integration (nri-mysql).

sudo apt-get update && sudo apt-get install -y newrelic-infra nri-mysql

- Import Infrastructure agent GPG Key.

Configure the MySQL integration

- Add a monitoring user in MySQL.

mysql -e "CREATE USER 'newrelic'@'localhost' IDENTIFIED BY 'MONITOR_USER_PASSWORD';" mysql -e "GRANT REPLICATION CLIENT ON *.* TO 'newrelic'@'localhost';"

- Configure the MySQL integration.

cd /etc/newrelic-infra/integrations.d sudo cp mysql-config.yml.sample mysql-config.yml sudo nano mysql-config.yml

- Fill in the password.hostname:

hostname: localhost port: 3306 username: newrelic password: MONITOR_USER_PASSWORD

- Set

remote_monitoringtotrue. - Capture

extended_metricsandextended_innodb_metrics.

extended_metrics: 1 extended_innodb_metrics: 1

- If you have MyISAM tables, also set

extended_myisam_metrics:

extended_myisam_metrics: 1

- Restart the infrastructure agent to complete the setup.systemctl restart newrelic-infra

A full list of configuration options is available in our MySQL integration documentation.

View MySQL data in New Relic

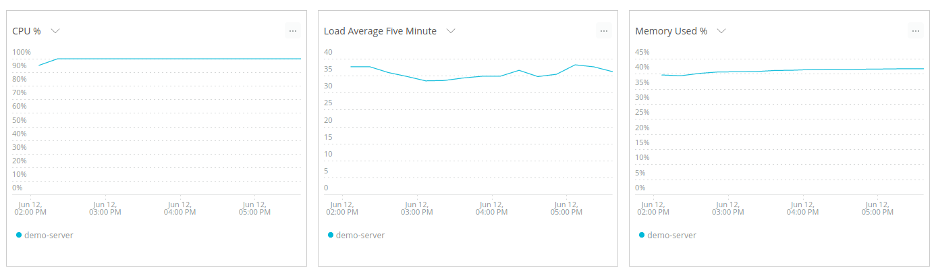

From New Relic One, navigate to Infrastructure to see the incoming data about your servers.

To start monitoring your MySQL databases, navigate to Infrastructure > Third-party Services > MySQL Dashboard.

Here are a few example charts created based on our integration’s configuration:

- A QPS graph measures the raw efficiency of your database

- The Slow Queries chart lets you know when it’s time to optimize your database

- Use the Max Connections graph to fine tune

max_connections - Use the I/O activity for the network and disk to find bottlenecks in your system

To create more advanced charts and custom dashboards, check out the data explorer.

From integration to observability

If data is the application’s lifeblood, then the database is its heart. Reliable database performance is vital in any business. By monitoring a few key metrics, you can better understand how your MySQL servers are functioning.

The MySQL integration is open source software. That means you can browse its source code and send improvements, or create your own fork and build it.

Make our MySQL integration an essential part of your observability stack.

Check out our full list of on-host integrations for more.

If you are ready to take control of your databases, sign up for 100GB of ingest per month and one Full-Stack Observability user license—free forever!

The views expressed on this blog are those of the author and do not necessarily reflect the views of New Relic. Any solutions offered by the author are environment-specific and not part of the commercial solutions or support offered by New Relic. Please join us exclusively at the Explorers Hub (discuss.newrelic.com) for questions and support related to this blog post. This blog may contain links to content on third-party sites. By providing such links, New Relic does not adopt, guarantee, approve or endorse the information, views or products available on such sites.