Conquer cloud complexity with New Relic and AWS.

Correlate the performance of your entire AWS environment in one place. Solve performance issues faster. Manage cloud resources and costs more effectively.

START FASTER

Faster AWS onboarding and quicker time to value.

- One-step onboarding for all your AWS telemetry using CloudFormation.

- Connect all your AWS accounts to New Relic with multi-account onboarding.

- Agentless EC2 monitoring provides instant insights without the need for agent installation and configuration.

TROUBLESHOOT FASTER

See data in context to resolve issues faster.

- Automatically correlate performance data across apps, infra, and services for faster troubleshooting.

- Analyze metrics, traces, logs, and security signals across multiple AWS accounts on one platform.

- Automatically view relationships and dependencies across your AWS environment in a service map.

- Know system-wide health across hosts, cloud resources, containers, logs, services, and applications.

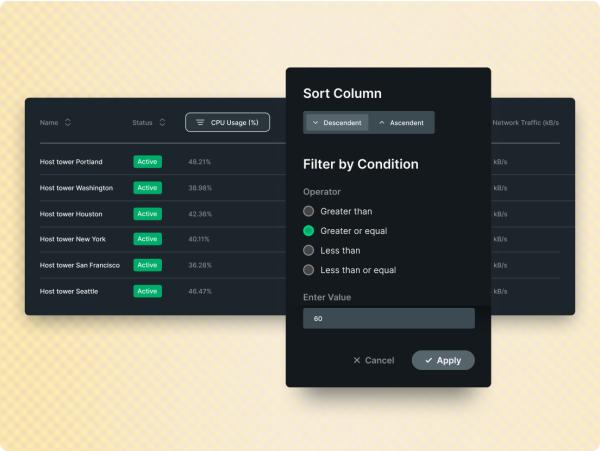

PROVISION SERVICES PRECISELY

Uncover over-provisioned services and save.

- Analyze performance metrics across apps, infra, and AWS services to optimize resource utilization.

- Track and allocate AWS resources to ensure cost-effective performance under any load.

- Easily pinpoint the best instance type based on real usage.
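To make the idea of sizing from real usage concrete, here is a minimal sketch in Python. It is not New Relic's method or API: the `recommend` function, the 20% headroom, the 95th-percentile rule, and the small hand-written catalog are all assumptions chosen for illustration. It takes observed CPU and memory samples and returns the smallest listed instance type that covers the 95th-percentile demand plus headroom.

```python
from dataclasses import dataclass
from math import ceil


@dataclass(frozen=True)
class InstanceType:
    name: str
    vcpus: int
    memory_gib: float


# Small illustrative catalog (an assumption for this sketch).
# Check current AWS documentation for authoritative specs and pricing.
CATALOG = [
    InstanceType("t3.small", 2, 2.0),
    InstanceType("t3.medium", 2, 4.0),
    InstanceType("t3.large", 2, 8.0),
    InstanceType("t3.xlarge", 4, 16.0),
]


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    ordered = sorted(samples)
    rank = max(1, ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def recommend(cpu_cores_used, memory_gib_used, headroom=0.2):
    """Return the smallest catalog entry covering p95 usage plus headroom.

    Returns None when nothing in the catalog is large enough.
    """
    need_cpu = percentile(cpu_cores_used, 95) * (1 + headroom)
    need_mem = percentile(memory_gib_used, 95) * (1 + headroom)
    # Walk the catalog from smallest to largest and stop at the first fit.
    for itype in sorted(CATALOG, key=lambda t: (t.vcpus, t.memory_gib)):
        if itype.vcpus >= need_cpu and itype.memory_gib >= need_mem:
            return itype
    return None


if __name__ == "__main__":
    # Hypothetical usage samples: CPU cores in use and memory in GiB.
    cpu = [0.4, 0.6, 0.5, 1.1, 0.7]
    mem = [2.1, 2.4, 2.2, 3.0, 2.6]
    choice = recommend(cpu, mem)
    print(choice.name if choice else "no instance type fits")
```

Sizing against a high percentile rather than the average keeps short spikes from being ignored, and the headroom parameter leaves room for growth. Both values are tunable assumptions.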

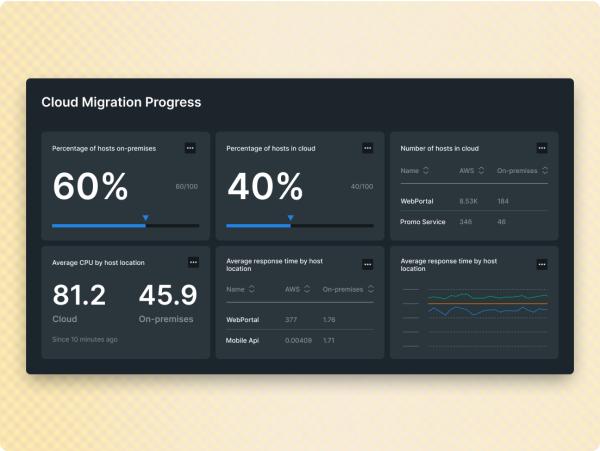

CLOUD MIGRATION

Plan, migrate, modernize. A roadmap for every stage.

- Achieve cloud adoption with fewer growing pains using the New Relic integration with AWS Application Migration Service.

- Accelerate and de-risk migration by validating progress with before, during, and after measurements.

- Scale quickly and easily while lowering costs using real-time analytics and KPIs.

The data points to higher ROI.

100+ AWS integrations. Start now for free.

Want to learn more?

Dig into these resources.

Get more from AWS with New Relic.