Operations professionals, site reliability engineers, and anyone else who lives and breathes the practice of systems monitoring take pride in knowing what to monitor, both through experience and the shared knowledge of like-minded professionals. With expertly crafted alerts, thresholds, dynamic baselines, and other elegant solutions, they deftly defy the gremlins that stalk uptime and performance goals using data collected at intervals typically ranging from 60 seconds to 15 minutes.

What if there was a better way?

Zack Mutchler is a monitoring practitioner with years of experience as both an operator and professional services consultant. After spending over 5 years as a SolarWinds Community MVP and working with hundreds of SolarWinds customers globally, Zack joined New Relic, focusing on enabling fellow Ops engineers to level up their observability.

This two-part blog series (read part 1) explores why you’d want to improve your observability with SolarWinds, and how to do it.

As an engineer, you understand that it doesn’t matter what you think you know—it matters what you can prove with data. You have tons of data at your fingertips, sourced from various cloud and on-premises technologies, as well as from various monitoring and logging tools you’ve deployed across your environment. Siloed data, while common, causes a lot of pain for teams working to improve their triage and incident response processes.

Now add in siloed teams, each with their own toolsets, and it’s no wonder precious error budgets quickly disappear when chaos strikes—as each team spins its wheels against a ticking clock.

In most cases, this is where we turn to monitoring; but where monitoring tells us WHEN problems occur, observability tells us WHY and WHERE they exist and allows us to start asking questions we didn’t know we needed to ask. This is especially helpful in modern environments, from small office remote sites, to traditional data centers, and into cloud spaces where newer software architectures and the ever-expanding landscape of cloud native technologies are creating solutions in ways we could have never imagined.

And let’s face it: There are situations in your unique environment that are...well...unique; and that’s OK. With the right observability platform in place, you can keep your environment unique and ensure your incident response workflows don’t suffer. For instance, do you need to collect data from a legacy open source monitoring tool used by the Unix team? Do you want to add visibility into your deployment pipeline events to correlate them with statistics from your Kubernetes cluster? Do you need to monitor alerts generated by your network monitoring tool? New Relic can do all that and more.

Drawing on my experience as both a New Relic customer and SolarWinds MVP, and now as a New Relic Ops/SRE Strategy Consultant, I’ll demonstrate how you can use telemetry from the SolarWinds Orion platform, such as alerts and network statistics, to increase the value of your data and the agility of your incident response workflows.

About the Orion Alerting Engine

SolarWinds Orion is the underlying platform for a suite of IT performance monitoring products. Consisting of multiple core services such as the Reporting and Alerting Engines, it can be described as the command and control center for the dozen or so products that SolarWinds provides to serve traditional operations monitoring needs.

Since I want to provide you with the skills and resources you need to create an integrated experience curated to your specific requirements, I’m going to start with the Orion Alerting Engine. This will give you a perfect foundation to get started, as Orion admins have generally put effort into pruning their alerting strategy to actionable events. Pulling data from the Alerting Engine can provide you with valuable insights within the New Relic platform, without excessive noise.

To show you how it all works, I’ll demonstrate two options for sending Orion alerts data to New Relic:

- Option A: Build an Orion alert action with a PowerShell script

- Option B: Build a custom integration with New Relic Flex

Option A: Build an Orion alert action with a PowerShell script

Using this method, you push data from the Orion Alerting Engine directly to the New Relic Events API using alert actions. This technique is preferred because you can choose the specific alerts you want to see, support both trigger and reset events, and customize the payload of each event, all of which gives you better context for the objects being alerted on. For instance, you could enrich alerts on a critical network interface with utilization statistics and metadata such as a site code. In contrast, an alert on the status of your primary VPN tunnel for remote workers would call for a different set of data.

At a high level, to set up an alert action for Orion, you’ll follow these steps:

- Identify target alert conditions in Orion.

- Create a New Relic Events API Insert Key.

- Collect your New Relic Account ID.

- Build a PowerShell script that Orion will execute to send data to New Relic. (This step was required at the time of writing, as the Orion alert action for HTTP POST didn't support authentication headers. This feature has been added as of Orion Platform 2020.2, released on June 4, 2020, and posting directly to the Events API without a script is now possible as well.)

- Build an Orion alert action of type: “Execute an external program”.

- Assign the alert action to target alert conditions.

Example PowerShell Script

To help you build your PowerShell script, I’ve provided a basic example where I’m focusing only on variables that would be common across all alert types. Check SolarWinds’ documentation for a breakdown of some of the more common variables.

Param(

[ Parameter( Mandatory = $true ) ] [ ValidateSet( "Trigger","Reset" ) ] [ string ] $ActionType,

[ Parameter( Mandatory = $true ) ] [ string ] $AccountID,

[ Parameter( Mandatory = $true ) ] [ string ] $InsertKey,

[ Parameter( Mandatory = $true ) ] [ string ] $AlertName,

[ Parameter( Mandatory = $true ) ] [ string ] $AlertMessage,

[ Parameter( Mandatory = $true ) ] [ string ] $AlertingEntity,

[ Parameter( Mandatory = $true ) ] [ string ] $EntityType

)

# Build our event payload

$payload = @"

[

{

"eventType":"solarwinds_alerts",

"swAlert.alertActionType":"$ActionType",

"swAlert.alertName":"$AlertName",

"swAlert.alertMessage":"$AlertMessage",

"swAlert.alertingEntity":"$AlertingEntity",

"swAlert.alertingEntityType":"$EntityType"

}

]

"@

# Set our target URI with account ID

$uri = "https://insights-collector.newrelic.com/v1/accounts/$AccountID/events"

# Build our HTTP POST Headers

$headers = @{}

$headers.Add("X-Insert-Key", "$InsertKey")

$headers.Add("Content-Encoding", "gzip")

# Setup encoding and compress our payload

$encoding = [System.Text.Encoding]::UTF8

$enc_data = $encoding.GetBytes($payload)

$output = [System.IO.MemoryStream]::new()

$gzipStream = New-Object System.IO.Compression.GzipStream $output, ([IO.Compression.CompressionMode]::Compress)

$gzipStream.Write($enc_data, 0, $enc_data.Length)

$gzipStream.Close()

$gzipBody = $output.ToArray()

# POST to the Events API

Invoke-WebRequest -Headers $headers -Method Post -Body $gzipBody -Uri $uri

In this script, we define our parameters in the Param() block and build our JSON payload. We then set the Events API URL for our account, build our authorization header with the Insert API Key, and finally compress the payload and send it to the Events API.
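One caveat with the example script: it interpolates Orion variables straight into a JSON here-string, so an alert message that contains a double quote or a backslash would produce invalid JSON. A minimal sketch of one way to guard against that is to build the event as a hashtable and let ConvertTo-Json handle the escaping. The function names New-SwAlertPayload and Compress-SwAlertPayload, the -Extra parameter, and the swAlert.siteCode attribute are hypothetical and not part of the original script; the other attribute names match the example above.

```powershell
# Hypothetical helper (not from the original script): builds the Events API
# body as a JSON array string, with values escaped by ConvertTo-Json.
function New-SwAlertPayload {
    param(
        [Parameter(Mandatory = $true)] [ValidateSet('Trigger', 'Reset')] [string] $ActionType,
        [Parameter(Mandatory = $true)] [string] $AlertName,
        [Parameter(Mandatory = $true)] [string] $AlertMessage,
        [Parameter(Mandatory = $true)] [string] $AlertingEntity,
        [Parameter(Mandatory = $true)] [string] $EntityType,
        # Assumption: optional per-alert attributes, e.g. @{ 'swAlert.siteCode' = 'NYC01' }
        [hashtable] $Extra = @{}
    )

    $record = [ordered]@{
        'eventType'                  = 'solarwinds_alerts'
        'swAlert.alertActionType'    = $ActionType
        'swAlert.alertName'          = $AlertName
        'swAlert.alertMessage'       = $AlertMessage
        'swAlert.alertingEntity'     = $AlertingEntity
        'swAlert.alertingEntityType' = $EntityType
    }

    # Merge any custom attributes supplied for this alert type
    foreach ($key in $Extra.Keys) {
        $record[$key] = $Extra[$key]
    }

    # -InputObject keeps the single event wrapped in a JSON array
    return ConvertTo-Json -InputObject @($record) -Compress
}

# Hypothetical helper: gzip-compresses a JSON string into a byte array,
# using the same .NET classes as the original script.
function Compress-SwAlertPayload {
    param(
        [Parameter(Mandatory = $true)] [string] $Json
    )

    $bytes  = [System.Text.Encoding]::UTF8.GetBytes($Json)
    $output = [System.IO.MemoryStream]::new()
    $gzip   = [System.IO.Compression.GZipStream]::new($output, [System.IO.Compression.CompressionMode]::Compress)
    try {
        $gzip.Write($bytes, 0, $bytes.Length)
    }
    finally {
        # Disposing flushes the remaining compressed data into $output
        $gzip.Dispose()
    }

    # The leading comma stops PowerShell from unrolling the byte array
    return , $output.ToArray()
}
```

With these in place, the body could be produced with `$gzipBody = Compress-SwAlertPayload -Json (New-SwAlertPayload ...)` and sent with the same Invoke-WebRequest call and headers as before.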

(Note: There are multiple approaches and scripting languages we could have used in place of PowerShell. The key element you’ll want to focus on is allowing your script to accept any of the variables available in the Alerting Engine so you can fully enrich your data. Feel free to experiment and send me a message on the Explorers Hub. I love seeing new ideas.)

Once you’ve finished building/customizing your own version of this script, save it locally to your Orion Primary Polling Engine. You can technically save to a shared remote location, but in my experience, chasing down the right permissions for the Alerting Engine to execute a remote script makes the centralized model a lot more painful.

Build the alert action

To build our alert action in Orion, place the following command in the “Network path to external program” field in the “Execute an external program” alert action type, and replace ACCOUNT_ID and INSERT_KEY with the data we collected earlier.

Tip: You can also hard-code these values into your script if the idea of maintaining numerous script arguments in your command sounds painful.

powershell.exe -ExecutionPolicy Unrestricted -NoProfile -File C:\Scripts\newrelic-solarwinds-alert-action.ps1 -ActionType Trigger -AccountID ACCOUNT_ID -InsertKey "INSERT_KEY" -AlertName "${N=Alerting;M=AlertName}" -AlertMessage "${N=Alerting;M=AlertMessage}" -AlertingEntity "${N=SwisEntity;M=Caption}" -EntityType "${N=Alerting;M=ObjectType}"

Here’s a breakdown of the command:

powershell.exe: Inform Windows CMD to execute this file using PowerShell

-ExecutionPolicy Unrestricted: Temporarily remove Execution Policy restrictions

-NoProfile: Load PowerShell without any Profile settings

-File C:\Scripts\newrelic-solarwinds-alert-action.ps1: The path to the script file

-ActionType Trigger: Script Argument: Treat this as a Trigger or Reset action

-AccountID ACCOUNT_ID: Script Argument: Passes your New Relic Account ID to the script

-InsertKey "INSERT_KEY": Script Argument: Passes your New Relic Events API Insert Key

-AlertName "${N=Alerting;M=AlertName}": Script Argument: Orion Alert Engine Variable for the Alert Name

-AlertMessage "${N=Alerting;M=AlertMessage}": Script Argument: Orion Alert Engine Variable for the Alert Message

-AlertingEntity "${N=SwisEntity;M=Caption}": Script Argument: Orion Alert Engine Variable for the Alerting Entity Name

-EntityType "${N=Alerting;M=ObjectType}": Script Argument: Orion Alert Engine Variable for the Entity Type (Node, Interface, etc.)

After you assign the newly created action to your target alerts, and have some (hopefully test) triggered alerts, you can query the data in New Relic using the New Relic Query Language (NRQL):

SELECT timestamp, `swAlert.alertActionType`, `swAlert.alertName`, `swAlert.alertMessage`, `swAlert.alertingEntity`, `swAlert.alertingEntityType` FROM solarwinds_alerts

Option B: Collect Orion alerts data with a Flex integration

With this method, you’ll use New Relic Flex to remotely call the Orion REST API and query the data.

(Note that New Relic Flex requires you to install the New Relic Infrastructure agent on a server in your Orion architecture. See more below.)

The primary limitation here is that you lose a lot of your customization options, but the data itself is still extremely powerful. I’ve created an example YAML configuration in the public GitHub repository for Flex that you can use as a starting point.

At a high level, to access the Orion REST API from Flex, you’ll need to:

- Create an Orion Individual Account with read-only access that you can use to access the Orion REST API.

  - This is a firm requirement, as Windows account login is not currently supported by the Orion REST API.

- Build a Base64 encoded string to use in your authentication header.

  - Check this gist for multiple examples for different languages. I’ve also provided a PowerShell snippet in the example on GitHub.

- Identify the server on which you’ll host your Flex configurations. This is ideally a server in the Orion architecture (Primary, Additional Poller, or Additional Web Server), but will work from any host with a New Relic Infrastructure Agent that has network connectivity to your Orion architecture via TCP port 17778.
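For the Base64 step in the list above, here is a minimal sketch, assuming the Orion REST API accepts the standard HTTP Basic scheme (the Base64 encoding of `username:password`). The function name New-OrionAuthHeader and the sample credentials are hypothetical, and this is not necessarily the same snippet as the one in the GitHub example.

```powershell
# Hypothetical helper: builds an HTTP Basic Authorization header from the
# Orion Individual Account credentials created in the first step.
function New-OrionAuthHeader {
    param(
        [Parameter(Mandatory = $true)] [string] $UserName,
        [Parameter(Mandatory = $true)] [string] $Password
    )

    # Basic auth encodes "username:password" as Base64
    $pair  = '{0}:{1}' -f $UserName, $Password
    $token = [System.Convert]::ToBase64String([System.Text.Encoding]::UTF8.GetBytes($pair))

    return @{ Authorization = "Basic $token" }
}

# Example with placeholder credentials (assumption, not a real account)
$header = New-OrionAuthHeader -UserName 'user' -Password 'pass'
$header.Authorization   # Basic dXNlcjpwYXNz
```

Keep in mind that Base64 is an encoding, not encryption, so treat the resulting string with the same care as the password itself.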

The events generated will be very similar to the ones generated by our example in option A, with one limitation: the example only collects events when alerts are triggered, not when they reset. It also adds fields for the “AlertHistoryID” and the “AlertTriggerTime” in UTC (converted to Unix epoch in seconds to comply with the Events API format guidelines).
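How the Flex example performs the epoch conversion is not shown in this post. As an illustration of the transformation itself, the following sketch converts a UTC timestamp string to Unix epoch seconds in PowerShell. The function name ConvertTo-UnixEpochSeconds is hypothetical, and the sketch assumes .NET Framework 4.6 or later (for ToUnixTimeSeconds) and an ISO 8601 style input.

```powershell
# Hypothetical helper: converts a UTC timestamp string to Unix epoch seconds.
function ConvertTo-UnixEpochSeconds {
    param(
        [Parameter(Mandatory = $true)] [string] $UtcTimestamp
    )

    # AssumeUniversal treats a timestamp without an explicit offset as UTC
    $styles  = [System.Globalization.DateTimeStyles]::AssumeUniversal
    $culture = [System.Globalization.CultureInfo]::InvariantCulture
    $parsed  = [System.DateTimeOffset]::Parse($UtcTimestamp, $culture, $styles)

    return $parsed.ToUnixTimeSeconds()
}

ConvertTo-UnixEpochSeconds -UtcTimestamp '2020-06-04T00:00:00Z'   # 1591228800
```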

To query data pulled with Flex, use this NRQL:

SELECT timestamp, `swAlert.alertHistoryID`, `swAlert.alertTriggerTimeUTC`, `swAlert.alertName`, `swAlert.alertMessage`, `swAlert.alertingEntity`, `swAlert.relatedNode`, `swAlert.realEntityType` FROM solarwinds_alerts

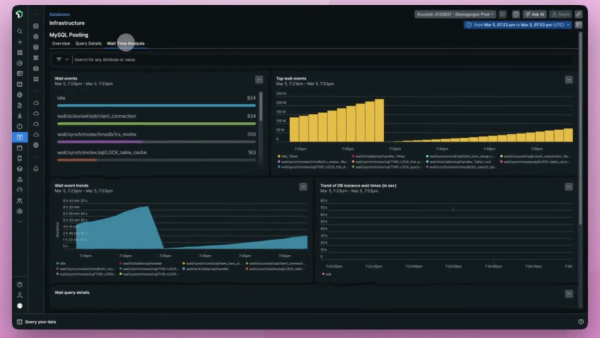

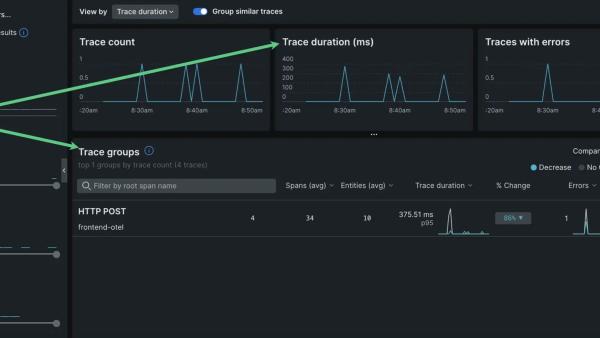

Of course, all of this data can be displayed in dashboards throughout New Relic One:

You can find the JSON payload for this dashboard in the public GitHub repository for the Flex Integration as well. You can quickly use this sample JSON, but be sure to edit OWNER_EMAIL_ADDRESS (line 13) and ACCOUNT_ID (line 122). You can apply the payload to your New Relic account using the API Explorer. Read more about “Visualizations as Code” or check out the Dashboard API documentation.

Gathering data from SolarWinds Network Performance Monitor

The other primary data point we want to collect in this post is network interface statistics sourced from SolarWinds Network Performance Monitor (NPM). Adding critical network telemetry to your digital user experience monitoring in New Relic closes the gap on the end-to-end requirement for your journey toward observability.

For this solution, I’m again going to leverage our Flex integration to query the data. I’ve also added an example YAML file to the public GitHub repository for you to use in your environments. The workflow for this integration will be identical to the one used in our alerts example above.

To query the data generated by our sample, use this query:

SELECT timestamp, `npm.observationTimestamp`, `npm.nodeID`, `npm.nodeName`, `npm.interfaceID`, `npm.interfaceName`, `npm.interfaceAlias`, `npm.interfaceStatus`, `npm.adminStatus`, `npm.trafficIntervalMins`, `npm.interfaceDataObsolete`, `npm.ifName`, `npm.ifIndex`, `npm.typeName`, `npm.typeDescription`, `npm.macAddress`, `npm.rcvBandwidthBps`, `npm.xmtBandwidthBps`, `npm.customBandwidthEnabled`, `npm.totalPercentUtil`, `npm.rcvPercentUtil`, `npm.xmtPercentUtil` FROM solarwinds_interfaces

Go forth and ~~monitor~~ OBSERVE!

Our commitment to developing the world’s first open, connected, and programmable observability platform is a testament to our ultimate desire to embrace the chaos you encounter and help you control it. That drive pushed Josh (author of part 1 of this series) and me to level up our monitoring strategies as customers and is now pushing us to evangelize the “Art of the Possible” as full-time Relics. It’s no longer enough to have a product that covers almost everything you need. You need a true platform that can consolidate all your data into a single point of truth where your teams can work together and reduce the time it takes to detect, respond, and resolve; and increase the time spent innovating the next big thing.

If you’d like to learn more, register for our upcoming webinar. Josh and I will be evangelizing the gospel of observability and maybe even sharing our favorite recipes for success.

The views expressed on this blog are those of the author and do not necessarily reflect the views of New Relic. Any solutions offered by the author are environment-specific and not part of the commercial solutions or support offered by New Relic. Please join us exclusively at the Explorers Hub (discuss.newrelic.com) for questions and support related to this blog post. This blog may contain links to content on third-party sites. By providing such links, New Relic does not adopt, guarantee, approve or endorse the information, views or products available on such sites.