Domino’s is a franchise business with more than 1,200 stores across the United Kingdom and Ireland, selling more than 114 million pizzas per year. From a technology standpoint, that scale of restaurants, customers, and pizza adds up to a complex challenge. Our customers can order pizza across three different channels: mobile app, web and online delivery platforms, like Just Eat. Franchise partners control several variables across those channels, from modifying prices to reducing their catchment area during peak times if needed.

As part of our Phoenix program of continuous improvements and in line with our modern engineering principles, we wanted to establish a site reliability engineering (SRE) capability across the technology stack. We took a unique approach to developing this capability. We embraced academic theories and used them to guide our transformation.

Adaptable architecture: Microservices

Traditional organizations typically have a standard monolithic application backed by a SQL server. Customers bring up a web browser and transact with the back end to purchase a pizza. Our microservice architecture, however, means we have a decoupled front end that interfaces via a backend-for-frontend (BFF) layer. An API facade interfaces with a monolith, and we have a set of microservices that are fronted by an API gateway.

Our shift from monolith to microservices established a foundation for testing new features in mirror modes, using real user traffic while significantly lowering the risk of errors and allowing new standards to be established for performance and reliability. Our team can test a new feature by putting an endpoint into mirror mode and observing that endpoint to ensure it’s operating as intended. We then use a canary testing pattern to bring the new feature live, beginning first by routing 10% of traffic through the updated system and then working our way up to 100%.
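
The canary step described above can be sketched in a few lines of Python. This is a minimal illustration rather than our actual gateway configuration: the function names, the hash-based bucketing, and the intermediate steps of 25% and 50% are assumptions, since the only fixed points are the 10% starting weight and the 100% end state.

```python
import hashlib


def bucket(request_id: str) -> int:
    """Map a request ID to a stable bucket in the range 0..99."""
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big") % 100


def route(request_id: str, canary_percent: int) -> str:
    """Return which backend serves the request at the given canary weight."""
    return "canary" if bucket(request_id) < canary_percent else "stable"


def next_weight(current: int, canary_healthy: bool,
                steps: tuple = (10, 25, 50, 100)) -> int:
    """Advance to the next rollout step, or roll back to 0 if unhealthy.

    The step schedule is hypothetical; only 10% and 100% come from the text.
    """
    if not canary_healthy:
        return 0
    for step in steps:
        if step > current:
            return step
    return 100
```

Hashing the request ID keeps routing deterministic, so the same request always lands on the same side of the split at a given weight, which makes canary behaviour easier to compare against the stable path.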

As we began to create a modern SRE capability, we knew our microservice architecture and API gateway would lend themselves well to being a primary focus of our SRE engineers.

‘Anatomy of a capability’ approach to SRE

When we started the Phoenix program of continuous improvements, we wanted to recreate our core capabilities for incident response, turning them into reliability engineering. As we began planning to implement an SRE function, I spent time reading research and theories from academics about the capabilities themselves: What is the anatomy of a generic capability? What’s important as you try to implement a new capability in your organization?

I began with the work of Dorothy Leonard-Barton. In her book, Wellsprings of Knowledge, she talks about the four dimensions of a capability: technical systems; skills; managerial systems; and a set of values that operate across the capability. I also read the work of David Teece, whose Dynamic Capabilities covers how a capability can be renewed and reimagined over time.

Drawing on this material, we decided to take an “anatomy of a capability” approach to SRE. We also called this the “capability pizza”—a pizza divided into six equal slices:

- Technical systems: Front-end and back-end service domains; the API gateway; New Relic; etc.

- Skills: Reliability architectures; problem analysis; distributed tracing

- Management systems: Manage and innovate the SRE system; incident management

- Values: Aware; bold; technical; collaborative and empathetic; data-driven

- Routine activities: Reliability engineering; monitoring setup

- Learning: Ability to transfer new technology and skills; ability to optimize the SRE system

In addition to the capability pizza, we also use a reconfigurable systems model to show the capability in action. Our developers use a GitOps workflow and a set of environments to move the product from left to right into production. The SRE engineers are expected to look at a low-level design document, analyze reliability patterns, and then decide how to make the endpoints as reliable as possible.

Domino's UK SLO and budgets dashboard.

Monitoring and improving the system proactively

SRE is a data-driven exercise with specific commitments that need to be monitored all the time. Our engineers establish golden signals for latency, traffic, errors, and saturation. For each golden signal, we establish service-level objectives (SLOs) and error budgets. A good example of this approach would be availability: if you decide your server should have 95% availability, you have a 5% error budget. If you have 12 hours of requests coming through, 95% of those requests need to have been served successfully; if 3% failed, you have 97% availability and are still within your error budget.
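
The availability arithmetic above can be worked through in code. This is a small sketch under stated assumptions: the function names are hypothetical, and the request count of 1,000 is an illustrative figure chosen to make the 3% failure rate concrete.

```python
def availability(total_requests: int, failed_requests: int) -> float:
    """Fraction of requests served successfully."""
    if total_requests <= 0:
        raise ValueError("total_requests must be positive")
    return 1 - failed_requests / total_requests


def budget_remaining(slo: float, total_requests: int,
                     failed_requests: int) -> float:
    """Fraction of the error budget still unspent (negative if overspent)."""
    if not 0 < slo < 1:
        raise ValueError("slo must be strictly between 0 and 1")
    if total_requests <= 0:
        raise ValueError("total_requests must be positive")
    allowed_failures = (1 - slo) * total_requests
    return 1 - failed_requests / allowed_failures


# Illustrative numbers: a 95% SLO, 1,000 requests, 30 of them failed (3%).
print(f"{availability(1000, 30):.0%}")            # prints "97%"
print(f"{budget_remaining(0.95, 1000, 30):.0%}")  # prints "40%"
```

With a 5% budget and 3% of requests failed, three fifths of the budget is spent and two fifths remains, so the endpoint is still within its error budget.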

With New Relic, we are also able to use synthetic monitoring to test our golden signals and model customer journeys across the site. One good example of this is when we brought live a modernized login process. Login is the perfect example of a customer journey: we look at the availability of the synthetic data and the duration it takes for a customer to run all the way through the process. If an endpoint is trending in the wrong direction, we can orchestrate a response that will get the system back on track. Once the system is performing well enough in the synthetic instance, we can begin canary testing to push it live into production.
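
As a rough sketch of how the two signals from a synthetic login journey (availability and duration) might be summarized, consider the Python below. It does not use the New Relic API; the `SyntheticRun` type, the `summarize` function, and the 20% slowdown tolerance are all hypothetical and exist only to illustrate what "trending in the wrong direction" could mean in practice.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class SyntheticRun:
    succeeded: bool    # did the scripted journey complete?
    duration_s: float  # end-to-end duration of the journey in seconds


def summarize(runs: list, slowdown_tolerance: float = 0.2) -> dict:
    """Summarize availability and flag a slowdown in recent runs.

    Runs are assumed to be ordered oldest first. The mean duration of the
    recent half is compared with the earlier half; the tolerance is an
    assumed threshold, not a recommended value.
    """
    if len(runs) < 2:
        raise ValueError("need at least two runs to compare")
    half = len(runs) // 2
    earlier = mean(r.duration_s for r in runs[:half])
    recent = mean(r.duration_s for r in runs[half:])
    return {
        "availability": sum(r.succeeded for r in runs) / len(runs),
        "earlier_mean_s": earlier,
        "recent_mean_s": recent,
        "slowing": recent > earlier * (1 + slowdown_tolerance),
    }
```

A summary with `slowing` set to true, or with availability below the journey's SLO, would be the cue to respond before moving on to canary testing.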

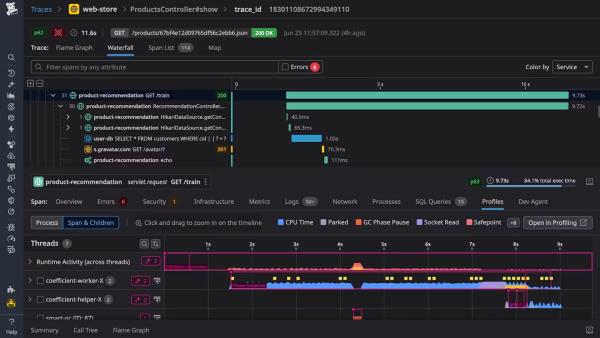

New Relic plays an essential role in allowing our SRE engineers to monitor and improve the system. We instrument applications across the architecture stack using application performance monitoring (APM), while distributed tracing gives us a clear visualization of how our components are working. Distributed tracing tracks and observes service requests as they flow through distributed systems, which makes it fundamental to how SRE engineers understand the ecosystem.

Our capability-focused approach to SRE and monitoring allows our engineers to take a proactive approach to incident response. We’ve also embraced support preparation activities in advance of specific releases. The lead developer walks through the code with the SRE engineer, who can then train the service desk lead.

Domino's dashboard to show golden signals traffic.

Golden signals and error budgets allow us to significantly reduce risk in our production releases and modernize our change management process. When we introduce a new feature, we begin with a mirror and then use canary testing to introduce it to a live environment. We use error budgets to determine whether feature releases can use a standard pre-approved change management process to accelerate engineering delivery. If they haven’t exceeded their error budget, teams can perform as many standard changes as they want.

New Relic allows us to keep track of performance and ensure the error budget isn’t being breached. If the error budget is exceeded, the feature is then put through a slower, more traditional change management process. Our SRE managers will go into weekly engineering meetings and review the live endpoints using SLIs and SLOs. If any of them are trending in the wrong direction, we can take action and put them back on track.
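
The change-management rule described above can be expressed as a simple gate. This is an illustrative sketch, not our production tooling: the `EndpointStatus` type, the `change_process` function, and the endpoint names and figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class EndpointStatus:
    name: str
    slo: float            # target success ratio, for example 0.95
    total_requests: int   # requests seen in the budget window
    failed_requests: int  # failed requests in the same window


def change_process(status: EndpointStatus) -> str:
    """Pick the change route for an endpoint based on its error budget.

    Within budget: the standard, pre-approved change process.
    Budget exceeded: the slower, more traditional process.
    """
    allowed_failures = (1 - status.slo) * status.total_requests
    if status.failed_requests <= allowed_failures:
        return "standard"
    return "traditional"


# Hypothetical endpoints for illustration only.
login = EndpointStatus("login", slo=0.95, total_requests=1000, failed_requests=30)
basket = EndpointStatus("basket", slo=0.95, total_requests=1000, failed_requests=80)
print(change_process(login))   # prints "standard"
print(change_process(basket))  # prints "traditional"
```

Tying the approval route to the budget means teams with healthy endpoints keep their delivery speed, while an endpoint that has overspent gets more scrutiny on its next change.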

Watch this presentation for a detailed walk-through on how Domino's UK created an SRE capability for their next-generation e-commerce ecosystem using an "anatomy of a capability" approach and a reconfigurable systems model.
