In 2020, New Relic began migrating its telemetry data platform to AWS to accommodate explosive growth. At AWS re:Invent, Andrew Hartnett, Senior Director of Software Engineering at New Relic, shared how New Relic used a cell-based architecture to pave the way for long-term scalability and geographic expansion. To watch the presentation, “Unlocking scalability with cells: New Relic’s journey to AWS,” go to the AWS re:Invent on-demand content. In it, you’ll learn more about the New Relic database (NRDB), how New Relic uses Kafka, and how New Relic successfully made the transition to AWS.

As Andrew discusses in the talk, “Our explosive growth led us to some serious scalability barriers. We found ourselves…with a monolithic single huge cluster in production running our entire business, over 100 engineering teams, 800 services, 1400 JVMs in the NRDB, all of this while ingesting 20 gigs per second. In 2018 and 2019, we had issues, some major outages.”

New Relic uses Kafka to feed data through a pipeline of services that aggregate, normalize, decorate, and transform it. A piece of data may pass from a Kafka topic to a service three, four, or even five times before reaching its final destination.
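To make the multi-hop flow concrete, here is a minimal in-memory Python sketch. It is not New Relic's code and uses no Kafka client: each list stands in for a Kafka topic and each function stands in for a service. The stage order, the record fields, and the owner lookup table are all hypothetical.

```python
from collections import defaultdict
from typing import Callable, Dict, List, Tuple

# In-memory stand-in for a telemetry record; not NRDB's real schema.
Record = Dict[str, object]
# Each stage consumes a batch from one "topic" and produces a batch for the next.
Stage = Callable[[List[Record]], List[Record]]


def normalize(batch: List[Record]) -> List[Record]:
    # Collapse spelling differences in metric names.
    return [{**r, "name": str(r["name"]).strip().lower()} for r in batch]


def decorate(batch: List[Record]) -> List[Record]:
    # Attach context the producer did not send (hypothetical lookup table).
    owners = {"cpu.usage": "infra-team"}
    return [{**r, "owner": owners.get(str(r["name"]), "unknown")} for r in batch]


def aggregate(batch: List[Record]) -> List[Record]:
    # Sum values per (name, owner) pair.
    totals: Dict[Tuple[str, str], float] = defaultdict(float)
    for r in batch:
        totals[(str(r["name"]), str(r["owner"]))] += float(r["value"])
    return [{"name": n, "owner": o, "value": v} for (n, o), v in sorted(totals.items())]


def transform(batch: List[Record]) -> List[Record]:
    # Reshape into the row format a final store might expect (hypothetical).
    return [{"metric": r["name"], "owner": r["owner"], "total": r["value"]} for r in batch]


def run_pipeline(batch: List[Record], stages: List[Stage]) -> Tuple[List[Record], int]:
    """Pass a batch through each stage, counting topic-to-service hops."""
    topics: List[List[Record]] = [batch]  # topics[i] feeds stages[i]
    for stage in stages:
        topics.append(stage(topics[-1]))
    return topics[-1], len(stages)


raw = [
    {"name": " CPU.Usage", "value": 0.5},
    {"name": "cpu.usage ", "value": 0.25},
    {"name": "disk.io", "value": 3.0},
]
rows, hops = run_pipeline(raw, [normalize, decorate, aggregate, transform])
print(hops)  # 4
for row in rows:
    print(row)
```

Each stage reads only the previous topic and writes only the next one, which is what allows independent services to be chained several hops deep.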

As Andrew states, “We needed to scale up quickly. We had massive growth, coupled with increased demand for customers wanting their data led to those scalability barriers... Our Kafka was already one of the largest, if not the largest Kafka clusters in the world, and we were at the physical limitations of that Kafka cluster, so things were getting pretty hairy.”

Read on for the key lessons Andrew shared from New Relic's transition to AWS.

Observability is key

“It's really important to instrument every part of your system, including the system you're migrating from, the actual migration, and then the end system as well," Andrew advised.

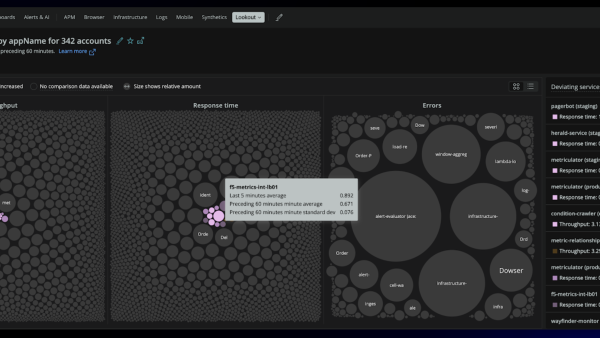

"All of our systems are fully instrumented. Observability hugely accelerated our ability to make large-scale changes and migrations. We knew exactly where and when bottlenecks appeared, and rather than looking at low-level host metrics, our teams had visibility into the topology of our systems and could look closely at anomalies."

"To manage and mitigate the risk of such large system change events, application owners and architects need to have the ability to see their systems in high cardinality and high dimensionality.”

This chart depicts the data volume flowing into NRDB broken down by environment during the transition to AWS.

Don't plan for the happy path

“Things can change. Customers can do things to you. Even the best laid plans will have surprises and discoveries. Plan for these surprises and discoveries along the way," Andrew said.

"One key was to start small and then iterate. Stick your toe into the water first. Try with your own data. Because we are actually one of our own largest customers, sending our own data first made sense.”

Continuously build new cells

“We have a goal of having an average cell life of 90 days," Andrew shared. "By doing that, you get the ability to make your changes with new cells instead of having to do it with cells that are dealing with customer load. AWS provides all the APIs and managed services we need to continuously build and decommission cells. So we do it. As a result, our platform reliability has improved significantly, and we've reclaimed engineering capacity that was previously spent on infrastructure.”

Make sure you communicate

“Overcommunicating becomes very important. Don't keep your customers in the dark," Andrew recommended.

"It's important to let them know the reasons why we're doing all of this. We may experience some bumps, but this is going to get better.”

Next steps

Watch Andrew Hartnett’s presentation, “Unlocking scalability with cells: New Relic’s journey to AWS,” at AWS re:Invent.

To learn more about the transition, read “Transitioning to the cloud: New Relic's journey to AWS.”

If you want to experience New Relic yourself, sign up for a forever free account.

The views expressed on this blog are those of the author and do not necessarily reflect the views of New Relic. Any solutions offered by the author are environment-specific and not part of the commercial solutions or support offered by New Relic. Please join us exclusively at the Explorers Hub (discuss.newrelic.com) for questions and support related to this blog post. This blog may contain links to content on third-party sites. By providing such links, New Relic does not adopt, guarantee, approve or endorse the information, views or products available on such sites.