One of the biggest challenges of application monitoring is striking a balance between gathering highly detailed data and the cost of storing that data. High cardinality data, which includes detailed attributes, can give you valuable insights into your data and help you find specific requests in your application, but that extra data comes with an added cost. In this post, you’ll learn how to manage high cardinality data with Prometheus and New Relic so you get the granular data you need while keeping your costs down.

What is cardinality?

Before we start discussing how to manage your dimensional metrics data, let’s define a bit of terminology. Cardinality is the number of unique time series a metric produces, which reflects how detailed your data is. Higher cardinality gives you more insight because it increases the granularity of your data. For example, if you are monitoring a distributed application, it is useful to attach identifying attributes like hostname and region. Each unique combination of attribute values counts as a single unit of cardinality.

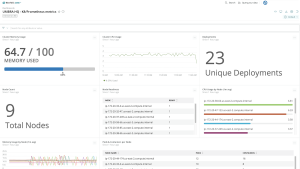

NRQL (New Relic Query Language) query showing cardinality distribution in New Relic.
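To make the definition concrete, here is a minimal Python sketch that counts cardinality as the number of distinct combinations of metric name and label values. The metric name, label names, and values are invented for illustration; this is not how Prometheus or New Relic computes the figure internally.

```python
# Each sample is identified by a metric name plus a set of label key/value pairs.
# The metric name, labels, and values below are made up for illustration.
samples = [
    ("http_requests_total", {"hostname": "web-1", "region": "us-east"}),
    ("http_requests_total", {"hostname": "web-1", "region": "us-east"}),  # same series, new data point
    ("http_requests_total", {"hostname": "web-2", "region": "us-east"}),
    ("http_requests_total", {"hostname": "web-3", "region": "eu-west"}),
]


def series_key(name, labels):
    """Return a hashable identity for a time series."""
    return (name, tuple(sorted(labels.items())))


def cardinality(samples):
    """Count the distinct time series present in a list of samples."""
    return len({series_key(name, labels) for name, labels in samples})


print(cardinality(samples))  # 3 distinct series from 4 samples
```

Repeated data points for the same label combination do not add cardinality; only a new combination does.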

However, cardinality can be a challenge to wrangle, especially when applying solutions like the Prometheus kubernetes_sd_config that require thoughtful configuration to strike a balance between useful cardinality and excess cardinality. For example, certain configurations in kubernetes_sd_config might expose a host IP address or a pod UUID, both highly unique and often not useful in day-to-day monitoring. Even though ingesting all of the metrics available allows you to quickly observe metric data in your systems, it can lead to high costs to ingest and store excess data.

When monitoring cloud-native environments, you’ll often have to deal with sudden spikes in cardinality. This happens when a metric with medium or low cardinality suddenly turns into a metric with high cardinality, often by accident. For example, let’s say someone on your team adds the label user_id. Because Prometheus creates a new time series for every unique combination of label values, a label with one value per user can result in a huge amount of excess observability data.
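A rough way to reason about such a spike is to multiply the number of distinct values each label can take. The sketch below uses hypothetical label names and counts, and it gives an upper bound only, since not every combination necessarily occurs in practice.

```python
from math import prod

# Hypothetical number of distinct values each label can take.
label_value_counts = {"hostname": 20, "region": 4, "status_code": 5}


def max_series(label_value_counts):
    """Upper bound on series for one metric: the product of distinct values per label."""
    return prod(label_value_counts.values())


before = max_series(label_value_counts)
after = max_series({**label_value_counts, "user_id": 10_000})

print(before)  # 400
print(after)   # 4000000
```

Under these assumed numbers, a single unbounded label turns a few hundred possible series into millions.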

Why is cardinality important in Prometheus monitoring?

High cardinality leads to increased resource consumption and performance issues in Prometheus. Understanding and managing cardinality is crucial for optimizing resource usage, ensuring efficient querying, and maintaining a healthy monitoring system.

How does high cardinality impact Prometheus performance?

High cardinality in Prometheus can have a significant impact on performance in several ways:

Increased memory usage:

- Each unique time series in Prometheus contributes to memory usage.

- High cardinality means a larger number of unique time series, which results in increased memory requirements for storing and managing these time series.

Storage requirements:

- High cardinality leads to more unique combinations of labels and label values, requiring additional storage space to persistently store these combinations.

- As the volume of time series data grows, storage requirements increase, potentially affecting disk space and long-term data retention.

Query latency:

- High cardinality can result in slower query performance.

- When querying for specific time series or aggregating data, Prometheus needs to process a larger number of time series, leading to longer query times.

Resource intensiveness:

- The computational load on Prometheus increases with high cardinality due to the need to manage and process a larger number of time series concurrently.

- This can strain CPU resources and impact the overall responsiveness of the Prometheus server.

Scalability challenges:

- High cardinality can pose challenges for horizontal scalability. As the number of time series and associated labels grows, it becomes more challenging to effectively scale Prometheus across multiple instances.

How to manage high cardinality data from Prometheus

The goal of this blog post is to show you how to use the Prometheus remote_write configuration to manage cardinality in your Prometheus servers. You’ll learn how to:

- Set up your scrape jobs for success.

- Check existing cardinality in a Prometheus server.

- Check how much cardinality a scrape job will have.

- Reduce cardinality from a Prometheus job.

To follow along, you’ll need to understand the basics of configuring a Prometheus server.

Use meaningful labels for your scrape jobs

When setting up your Prometheus scrape_config, you should give your jobs meaningful labels, starting with the job_name. That way, you can easily see where the majority of your monitoring load is coming from. You can also use the very flexible relabel_configs section of the configuration file, which gives you many options for changing labels to best fit your use case. You’ll see a few examples later in this post.

Reload your Prometheus configuration without restarting the server

You can also set up Prometheus to reload your configuration without a full server restart. This is especially helpful for debugging new Prometheus configurations. To do so, include the following command line option when you start the server:

--web.enable-lifecycle

The configuration can then be reloaded using the following API call:

curl -X POST localhost:9090/-/reload

If your Prometheus server is listening on a different host or port, update localhost:9090 to match.
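If you would rather trigger the reload from a script, the same call can be sketched in Python with the standard library. The function names here are illustrative, and the default base URL assumes a local server on port 9090.

```python
import urllib.request


def build_reload_request(base_url="http://localhost:9090"):
    """Build the POST request for the Prometheus /-/reload lifecycle endpoint."""
    return urllib.request.Request(f"{base_url.rstrip('/')}/-/reload", method="POST")


def reload_config(base_url="http://localhost:9090", timeout=10):
    """Send the reload request and return True on HTTP 200.

    urlopen raises urllib.error.HTTPError for error responses, which is what
    you should expect if the server was started without --web.enable-lifecycle.
    """
    with urllib.request.urlopen(build_reload_request(base_url), timeout=timeout) as resp:
        return resp.status == 200


# Usage (requires a running Prometheus server):
# reload_config("http://localhost:9090")
```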

Check existing cardinality in a Prometheus server

You can use the following PromQL query to check the cardinality based on various attributes:

topk(10, count by (job)({job=~".+"}))

When you run this query, Prometheus will display the current cardinality on the server segmented by the label job. If a job is generating significant cardinality, you can investigate the job itself with this query:

topk(10, count by (__name__)({job='my-example-job'}))

This query returns the cardinality of metric names (represented by __name__ in Prometheus) within the job my-example-job.
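These queries can also be run from a script through the Prometheus HTTP API. The sketch below builds an instant-query URL for /api/v1/query and parses the response into a dictionary. The helper names are illustrative, and the sample payload is hand-written in the shape the API returns, not real data.

```python
import json
import urllib.parse
import urllib.request


def build_query_url(promql, base_url="http://localhost:9090"):
    """Build an instant-query URL for the Prometheus HTTP API."""
    return f"{base_url}/api/v1/query?" + urllib.parse.urlencode({"query": promql})


def parse_counts(payload, label):
    """Turn an instant-vector response into {label value: series count}."""
    if payload.get("status") != "success":
        raise ValueError(f"query failed: {payload}")
    return {
        item["metric"].get(label, ""): int(float(item["value"][1]))
        for item in payload["data"]["result"]
    }


def top_jobs(base_url="http://localhost:9090"):
    """Run the per-job cardinality query against a live server."""
    url = build_query_url('topk(10, count by (job)({job=~".+"}))', base_url)
    with urllib.request.urlopen(url, timeout=30) as resp:
        return parse_counts(json.load(resp), "job")


# Hand-written sample response (not real data), in the shape the API returns.
sample = {
    "status": "success",
    "data": {
        "resultType": "vector",
        "result": [
            {"metric": {"job": "my-example-job"}, "value": [1700000000.0, "1250"]},
            {"metric": {"job": "node"}, "value": [1700000000.0, "310"]},
        ],
    },
}
print(parse_counts(sample, "job"))  # {'my-example-job': 1250, 'node': 310}
```

Passing "__name__" as the label to parse_counts handles the per-metric query in the same way.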

If you’re using Prometheus remote_write to send data to New Relic, you can see the cardinality of a job with the following New Relic Query Language (NRQL) query:

FROM Metric SELECT cardinality() FACET job, metricName SINCE today

Note that NRQL uses metricName instead of __name__.

View your most expensive jobs

To get quick insights on the most data-intensive timeseries in Prometheus, you can see the highest label counts, series counts, and more at http://localhost:9090/tsdb-status.

The most data-intensive labels and series counts in Prometheus

Using the previous image as an example, you can drill down into the label client_id by viewing its values at the URL http://localhost:9090/api/v1/label/client_id/values.
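The label values endpoint returns JSON, so a short script can tell you how many distinct values a label has without scrolling through them. This is a sketch: the helper names are illustrative, and the sample payload is hand-written in the shape the endpoint returns.

```python
import json
import urllib.parse
import urllib.request


def label_values_url(label, base_url="http://localhost:9090"):
    """Build the URL that lists every value of one label."""
    return f"{base_url}/api/v1/label/{urllib.parse.quote(label, safe='')}/values"


def summarize_label_values(payload, preview=3):
    """Return the number of distinct values and a short preview of them."""
    values = payload["data"]
    return {"count": len(values), "preview": values[:preview]}


def fetch_label_summary(label, base_url="http://localhost:9090"):
    """Query a live server for a label's values and summarize them."""
    with urllib.request.urlopen(label_values_url(label, base_url), timeout=30) as resp:
        return summarize_label_values(json.load(resp))


# Hand-written sample response (not real data).
sample = {"status": "success", "data": ["a1", "b2", "c3", "d4"]}
print(summarize_label_values(sample))  # {'count': 4, 'preview': ['a1', 'b2', 'c3']}
```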

You can then see which jobs have a specific label with the following query:

topk(10, count by (job)({<label name>=~'.+'}))

After you have identified a label or job that is generating excess cardinality, you have several options for reducing or limiting the impact of that cardinality.

Omit scrape jobs from remote write

Note: If you are new to Prometheus remote write, see Set up your Prometheus remote write integration to send Prometheus data to your New Relic account.

If you don’t want to send data from a Prometheus job to your third-party vendor, you can update your remote_write configuration to omit that job. This can prevent an unexpected spike in cardinality data from being sent to your target data store.

For example, to prevent Prometheus from sending a job’s timeseries to your data store, you can add the following write_relabel_configs to your config. This example uses New Relic as the remote_write URL.

...
remote_write:
  - url: https://metric-api.newrelic.com/prometheus/v1/write?prometheus_server=my-prom-server
    bearer_token: <your NR API key>
    write_relabel_configs:
      - action: 'drop'
        source_labels: ['job']
        regex: (job-to-omit)
...

This instructs Prometheus to drop any timeseries with a job value matching regex job-to-omit.

You can also specify which jobs to keep by using the 'keep' action.

    write_relabel_configs:
      - action: 'keep'
        source_labels: ['job']
        regex: (<other job names to keep>)
...

Notice the change in action to keep. A keep action drops any timeseries whose job label value does not match the regex. Either approach is valid, but one could be easier to configure than the other depending on how many jobs you have.
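To see how the drop and keep actions behave, here is a simplified Python model of a single relabel rule. It is a sketch, not the Prometheus implementation: Prometheus uses RE2 regular expressions while this uses Python's re module, and the series and job names are invented. It does mirror one detail that often surprises people, which is that the regex has to match the whole value.

```python
import re


def apply_rule(series, action, source_labels, regex, separator=";"):
    """Return the series that survive one 'drop' or 'keep' relabel rule.

    Simplified model: source label values are joined with the separator and
    the regex must match the entire joined string.
    """
    pattern = re.compile(regex)
    survivors = []
    for labels in series:
        value = separator.join(labels.get(name, "") for name in source_labels)
        matched = pattern.fullmatch(value) is not None
        if action == "drop":
            survives = not matched
        elif action == "keep":
            survives = matched
        else:
            raise ValueError(f"unsupported action: {action}")
        if survives:
            survivors.append(labels)
    return survivors


# Hypothetical series, one per job.
series = [
    {"__name__": "up", "job": "job-to-omit"},
    {"__name__": "up", "job": "api-service"},
    {"__name__": "up", "job": "job-to-omit-2"},
]

dropped = apply_rule(series, "drop", ["job"], "(job-to-omit)")
kept = apply_rule(series, "keep", ["job"], "(api-service)")

print([s["job"] for s in dropped])  # ['api-service', 'job-to-omit-2']
print([s["job"] for s in kept])     # ['api-service']
```

Because the match is anchored, job-to-omit-2 is not dropped by the regex (job-to-omit); a pattern such as (job-to-omit.*) would be needed to cover it.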

You can verify the difference in cardinality data by querying your Prometheus server and your third-party vendor. Here’s the PromQL query again:

topk(10, count by (job)({job=~".+"}))

If you’re using New Relic, here’s the same query with NRQL:

FROM Metric SELECT cardinality() FACET job SINCE today

By comparing the two queries, you can ensure that the scrape job data isn’t being sent to New Relic. You can evaluate the cardinality of the newly added scrape job without it affecting any of your usage in New Relic.

Reduce the cardinality of a scrape job

Finally, you can reduce the cardinality of a job by dropping high cardinality labels or dropping unnecessary data points entirely. The choice depends on the level of detail you want to monitor. If you want to keep data, cardinality limits for an account can always be raised (at least with New Relic) but sometimes the additional cost isn’t worth it.

Drop labels

You can drop specific labels with labelkeep and labeldrop. These allow you to modify the fidelity and cardinality of the data you monitor. However, you must ensure that the resulting timeseries will remain uniquely identifiable. Otherwise, unique timeseries data could be combined, which is not what you want. For example, if you were to remove labels that uniquely identified two different entities, those entities would report conflicting information on metrics like memory and CPU, making it impossible to understand the state of either entity.
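The uniqueness risk is easy to check before you change a config. The sketch below, using made-up series, drops a label and reports any label sets that are no longer unique afterwards.

```python
def drop_label(series, label):
    """Return copies of the label sets with one label removed."""
    return [{k: v for k, v in labels.items() if k != label} for labels in series]


def find_collisions(series):
    """Return each label set that appears more than once."""
    counts = {}
    for labels in series:
        key = tuple(sorted(labels.items()))
        counts[key] = counts.get(key, 0) + 1
    return [dict(key) for key, count in counts.items() if count > 1]


# Two hypothetical hosts reporting the same metric for the same service.
series = [
    {"__name__": "memory_usage_bytes", "service": "api", "instance": "host-a"},
    {"__name__": "memory_usage_bytes", "service": "api", "instance": "host-b"},
]

print(find_collisions(series))                          # []
print(find_collisions(drop_label(series, "instance")))  # [{'__name__': 'memory_usage_bytes', 'service': 'api'}]
```

An empty result after dropping the label suggests the label is safe to remove for the series you sampled.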

There are still situations where dropping labels might be useful. Here’s an example:

scrape_configs:
  - job_name: 'api-service'
    ...
    relabel_configs:
      - action: labeldrop
        regex: (ip_address)

This config drops any label whose name matches the regex ip_address. In this example, removing the ip_address label does not affect the uniqueness of the timeseries, but it might reduce cardinality if the IP address for the service is reassigned when the service is deployed or restarted.

Note that relabel_configs operates on target labels before the scrape. If ip_address is instead attached to the scraped samples themselves, the same rule belongs under metric_relabel_configs.
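Here is a small Python sketch of why this helps. It models labeldrop as removing every label whose name fully matches the regex, then compares the series count before and after. The observed label sets are hypothetical, standing in for one service whose IP changed across three deployments.

```python
import re


def labeldrop(labels, regex):
    """Remove every label whose name fully matches the regex."""
    pattern = re.compile(regex)
    return {k: v for k, v in labels.items() if not pattern.fullmatch(k)}


def count_series(series):
    """Count the distinct label sets."""
    return len({tuple(sorted(labels.items())) for labels in series})


# Label sets observed for one service across three deployments (hypothetical values).
observed = [
    {"__name__": "http_requests_total", "service": "api", "ip_address": "10.0.0.4"},
    {"__name__": "http_requests_total", "service": "api", "ip_address": "10.0.0.9"},
    {"__name__": "http_requests_total", "service": "api", "ip_address": "10.0.1.17"},
]

print(count_series(observed))                                          # 3
print(count_series([labeldrop(s, "(ip_address)") for s in observed]))  # 1
```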

Drop datapoints entirely

One solution for managing high cardinality is to limit what gets monitored by the scrape job in the first place.

Here’s an example configuration for a Prometheus server that attempts to scrape metrics from all pods in a Kubernetes cluster. It results in high cardinality because every pod name is added as a value of the label instance.

scrape_configs:
  - job_name: 'k8s'
    kubernetes_sd_configs:
      - role: pod
    relabel_configs:
      - source_labels:
          - __meta_kubernetes_pod_name
        action: replace
        target_label: instance

You can reduce the cardinality in the previous configuration by targeting the namespace. For instance, if you’re only interested in pod metrics in the namespace my-example-namespace, you can update the config to only monitor results in that namespace.

    relabel_configs:
      - source_labels:
          - __meta_kubernetes_pod_name
        action: replace
        target_label: instance
      - action: keep
        source_labels:
          - __meta_kubernetes_namespace
        regex: (my-example-namespace)

Note the additional keep action and regex added to relabel_configs.
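The effect on the set of scraped targets can be modeled in a few lines of Python. This is a simplified sketch of the two rules, not Prometheus's relabeling engine: the discovered pods are invented, and real targets carry more labels than the single instance label shown here.

```python
import re


def relabel_targets(targets, namespace_regex):
    """Model the replace and keep rules on discovered pod targets.

    Targets whose namespace does not fully match the regex are discarded and
    never scraped; the rest get their pod name copied into 'instance'.
    """
    pattern = re.compile(namespace_regex)
    scraped = []
    for meta in targets:
        if not pattern.fullmatch(meta.get("__meta_kubernetes_namespace", "")):
            continue  # keep action: non-matching targets are dropped
        scraped.append({"instance": meta["__meta_kubernetes_pod_name"]})
    return scraped


# Hypothetical pods returned by service discovery.
discovered = [
    {"__meta_kubernetes_namespace": "my-example-namespace", "__meta_kubernetes_pod_name": "api-7d9f"},
    {"__meta_kubernetes_namespace": "kube-system", "__meta_kubernetes_pod_name": "coredns-abc"},
    {"__meta_kubernetes_namespace": "my-example-namespace", "__meta_kubernetes_pod_name": "worker-1"},
]

print(relabel_targets(discovered, "(my-example-namespace)"))
# [{'instance': 'api-7d9f'}, {'instance': 'worker-1'}]
```

Since the filtering happens before the scrape, pods outside the namespace contribute no series at all.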

Reduce cardinality by storing data for shorter periods

Let’s say you want the best of both worlds—high data fidelity without the cost of long-term storage. New Relic offers several options for managing high cardinality data. Datapoints and attributes can be dropped entirely or they can be dropped from long-term aggregates only. Dropping attributes from long-term aggregates allows you to view high cardinality data in real-time without incurring the high cardinality cost of long-term data storage.

Long-term aggregates allow metric data to be queried for a long period of time. At New Relic, we call this retention. You can find exact retention times for metric data at Retention details for assorted data types. If you opt to drop attributes from long-term aggregates, those attributes will not contribute to your daily cardinality limit but they will still be queryable for 30 days. This 30-day retention time is referenced in the documentation as raw dimensional metric data points.

To use New Relic drop rules, see Drop data using NerdGraph.

Next steps

Ready to start managing high cardinality data in New Relic? Jump into the docs at Set up your Prometheus remote write integration.

If you don't have a New Relic account yet, sign up for a free account. Your account includes 100 GB/month of free data ingest, one free full-access user, and unlimited free basic users.
