Managing and monitoring logs is essential to ensuring the smooth operation of applications and services. While Azure is a powerful platform for your applications, it can be challenging to set up, and debugging using logs can be difficult. Logs in Azure can be scattered in multiple places and may come with issues: they can be expensive to store and may not provide enough visibility.

In this step-by-step guide, you'll learn how to capture logs from Azure using Azure Event Hubs and Azure Functions. You’ll send these logs to New Relic using our Logs API. By the end of this guide, you’ll have an end-to-end integration between Azure and New Relic, so you can monitor your logs easily and effectively.

Prerequisites

What do you need to successfully follow along with this example? Here is a checklist:

- An active account on Microsoft Azure. If you don’t have one, you can sign up for free.

- A New Relic ingest license key. Don’t have a New Relic account? Sign up for a free account that will give you full access to the 30+ capabilities available on our platform, and 100 GB of data per month for free.

Setting up Azure services

Let’s get started setting up our Azure services and configuring log forwarding to New Relic. You’ll be using the following three services to do this:

- App Service (for diagnostic logs)

- Azure Event Hubs

- Azure Functions

In this example, you'll forward logs for a Node application hosted on App Service. Diagnostic logs for App Service are disabled by default on Azure, so you must enable them manually for each resource.

Service Configuration

1. Create an Azure Event Hubs namespace. Follow along with the video guide to learn how to create one.

2. After the successful deployment of the namespace, create an Azure Event Hub, using the instructions in the next video.

3. Next, enable diagnostic settings for your Azure resources. Diagnostic settings allow you to specify which categories of logs you want to capture and where to send them. Select Event Hubs as the destination for your logs and provide the names of the namespace and the Event Hub you created in steps one and two. Follow along with the video instructions.

4. Create shared access keys, as shown in the following video. For our Azure Functions App to communicate with the Event Hub to listen to incoming events, we need to create a shared access key with only Listen permissions.

5. Create an Azure Function app. Set the app’s execution mode to Code and the runtime stack to Node.js, as shown in the next video. You can choose either Windows or Linux as the operating system, but the runtime stack for this example should be Node.js, as the function logic will be written in JavaScript.

6. Add the Event Hub connection key to the Function App before you create the function. Step seven expects this key to exist under the name NRLOGS_EVENTHUB_CONNECTION.

Don’t skip this step! If you instead create a “New Connection Key” during function creation, the key may not be added to the Function App automatically. An autogenerated key name can also have an invalid naming convention, which can cause the function to misbehave or error out.

7. Add a function in the Function app container. Select the Azure Event Hub trigger as the trigger and type in the name of the Event Hub (not the namespace) that you created in step two. The Event Hub Connection should auto-populate with the NRLOGS_EVENTHUB_CONNECTION key as a connection option. This is the same key you just created in the previous step.

Note: the consumer group field is case-sensitive and should be left as $Default.

Before adding code to the function, create an application setting named NR_INGEST_API_KEY that holds your New Relic license key. Add it in the Application settings of the Function App.
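A small check at load time can catch a missing or malformed application setting before the function processes its first event. The sketch below is one way to do that and is not part of the original function: the helper names parseConnectionString and findSettingProblems are hypothetical, and it assumes the connection string uses the usual semicolon-separated Endpoint=...;SharedAccessKeyName=...;SharedAccessKey=... layout.

```javascript
// Hypothetical helper: split "Key=Value;Key=Value" into an object.
// Values may themselves contain "=" (base64 keys often end with it),
// so only the first "=" in each segment is treated as the separator.
function parseConnectionString(connectionString) {
  const parts = {};
  for (const segment of String(connectionString).split(";")) {
    const separator = segment.indexOf("=");
    if (separator > 0) {
      parts[segment.slice(0, separator).trim()] = segment.slice(separator + 1).trim();
    }
  }
  return parts;
}

// Hypothetical helper: return a list of human-readable problems
// found in a settings object (normally process.env).
function findSettingProblems(env) {
  const problems = [];
  for (const name of ["NRLOGS_EVENTHUB_CONNECTION", "NR_INGEST_API_KEY"]) {
    if (!env[name]) problems.push(`${name} is not set`);
  }
  if (env.NRLOGS_EVENTHUB_CONNECTION) {
    const parts = parseConnectionString(env.NRLOGS_EVENTHUB_CONNECTION);
    for (const field of ["Endpoint", "SharedAccessKeyName", "SharedAccessKey"]) {
      if (!parts[field]) problems.push(`NRLOGS_EVENTHUB_CONNECTION has no ${field}`);
    }
  }
  return problems;
}

// Usage: print any problems once, when the module loads.
findSettingProblems(process.env).forEach((problem) => console.warn(problem));
```

An empty result means both settings are present and the connection string has the expected fields. It does not prove that the key itself is valid.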

8. Add code to the function. When the Event Hub receives events, it triggers the function and passes those events to it as a parameter. Copy the snippet below and add it to the function you created in the previous step.

    const https = require("https");

    // Configure the New Relic Log API HTTP options for POST
    const options = {
      hostname: "log-api.newrelic.com",
      port: 443,
      path: "/log/v1",
      method: "POST",
      headers: {
        "Content-Type": "application/json",
        // Add your New Relic ingest license key to the Function App's Application settings
        "Api-Key": process.env.NR_INGEST_API_KEY,
      },
    };

    // Declared async so the host waits for every POST before the invocation ends.
    // An async function must not call context.done().
    module.exports = async function (context, eventHubMessages) {
      try {
        const parsedMessages = JSON.parse(eventHubMessages);
        if (Array.isArray(parsedMessages.records)) {
          // for...of (unlike forEach) lets each await finish inside the try block
          for (const [index, message] of parsedMessages.records.entries()) {
            // Capture only the console.log() output of our app from the message,
            // ignoring all other metadata
            const logMessage = JSON.parse(message.resultDescription);

            // Set up the payload for New Relic with the decoded message from the
            // Event Hub, with "message" and "logtype" as attributes
            const logPayload = {
              message: logMessage,
              logtype: "MSAzure_AppServiceConsoleLogs",
            };

            const response = await SendToNR(logPayload);
            console.log(`Processed record ${index} with response => ${response}`);
          }
        }
      } catch (error) {
        console.error(`POST to New Relic failed: ${error}`);
      }
    };

    // POST one payload to the Log API and resolve with the response body
    function SendToNR(payload) {
      return new Promise((resolve, reject) => {
        const req = https.request(options, (res) => {
          if (res.statusCode < 200 || res.statusCode > 299) {
            res.resume(); // discard the body so the socket is released
            return reject(new Error(`HTTP status code ${res.statusCode}`));
          }
          const body = [];
          res.on("data", (chunk) => body.push(chunk));
          res.on("end", () => {
            const resString = Buffer.concat(body).toString();
            resolve(resString);
          });
        });

        req.on("error", (err) => {
          reject(err);
        });

        req.on("timeout", () => {
          req.destroy();
          reject(new Error("Request timed out"));
        });

        req.write(JSON.stringify(payload));
        req.end();
      });
    }

Notice how you can access log data through the eventHubMessages input parameter. eventHubMessages is an array of EventData objects that have properties like records. These records display the log content, like EnqueuedTimeUtc, which shows the time the log was sent to the Event Hub.

Also note that the code creates a custom payload with a custom attribute, logtype, to differentiate between sources of the logs.
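Sending one POST per record works, but the Logs API can also take several log entries in a single request. The sketch below shows one way the record-to-payload step could be written as a pure function that builds such a batch. The helper name buildLogBatch is hypothetical, the fallback for resultDescription values that are not JSON is an assumption about your log format, and the common/logs layout is our reading of the Logs API's detailed JSON body format, so verify it against the Logs API documentation before relying on it.

```javascript
// Hypothetical helper: turn one Event Hub payload into a single
// batched request body for the New Relic Logs API.
function buildLogBatch(rawEvent) {
  // The trigger may hand over a JSON string or an already-parsed object.
  const event = typeof rawEvent === "string" ? JSON.parse(rawEvent) : rawEvent;
  const records = event && Array.isArray(event.records) ? event.records : [];

  const logs = records.map((record) => {
    const raw = record.resultDescription === undefined ? "" : record.resultDescription;
    let message = raw;
    try {
      // Structured console output arrives as a JSON string.
      message = JSON.parse(raw);
    } catch (error) {
      // Assumption: plain-text log lines are forwarded unchanged.
    }
    // Each entry's message is sent as a string.
    return { message: typeof message === "string" ? message : JSON.stringify(message) };
  });

  // Attributes under "common" apply to every entry in "logs".
  return [
    {
      common: { attributes: { logtype: "MSAzure_AppServiceConsoleLogs" } },
      logs,
    },
  ];
}
```

With the SendToNR helper from the function in step eight, a single await SendToNR(buildLogBatch(eventHubMessages)) could replace the per-record loop, because SendToNR already serializes whatever payload it receives.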

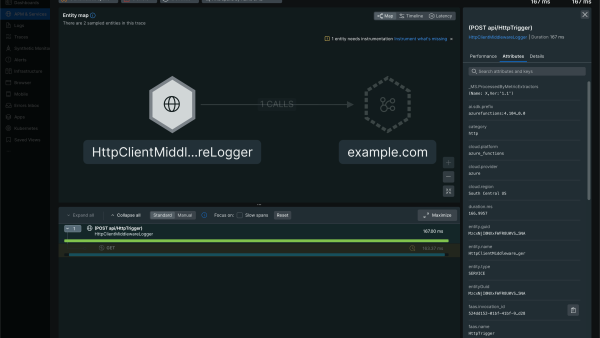

9. Save and deploy the function. After your function is deployed, wait for your application to generate some logs. To verify that logs were created and forwarded, look at the New Relic UI.

10. Verify that logs appear in New Relic UI. In New Relic, you’ll see the implementation is working and you can explore the forwarded logs. In the next image, notice how you can view the source of the logs in the logType attribute.

Troubleshooting

Some of the common issues you may run into while configuring this solution:

The function isn’t executing on Event Hub trigger. Verify that your function is configured with the Event Hub connection key and that the correct Event Hub name is provided. Check the instructions in steps six and seven of this blog. Also, ensure that your consumer group is set to $Default with the correct casing, because this name is case-sensitive. If you have configured another consumer group for your Event Hub, make sure that configuration is correct and revisit step seven.
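To rule out a binding problem quickly, you can compare the trigger’s settings with the values used in this guide. The sketch below assumes the binding lives in the function’s function.json file with the standard type, eventHubName, connection, and consumerGroup properties. The helper name checkTriggerBinding and the hub name my-event-hub are hypothetical placeholders.

```javascript
// Hypothetical helper: compare an Event Hub trigger binding
// (one entry from the "bindings" array in function.json) with this guide's values.
function checkTriggerBinding(binding, expectedHubName) {
  const issues = [];
  if (binding.type !== "eventHubTrigger") {
    issues.push(`type is "${binding.type}", expected "eventHubTrigger"`);
  }
  if (binding.eventHubName !== expectedHubName) {
    issues.push(`eventHubName is "${binding.eventHubName}", expected "${expectedHubName}"`);
  }
  if (binding.connection !== "NRLOGS_EVENTHUB_CONNECTION") {
    issues.push(`connection is "${binding.connection}", expected "NRLOGS_EVENTHUB_CONNECTION"`);
  }
  // Only checked when set. The comparison is exact, so "$default" is a mismatch.
  if (binding.consumerGroup !== undefined && binding.consumerGroup !== "$Default") {
    issues.push(`consumerGroup is "${binding.consumerGroup}", expected "$Default"`);
  }
  return issues;
}

// Example binding with a lowercase consumer group and a placeholder hub name.
const sampleBinding = {
  type: "eventHubTrigger",
  name: "eventHubMessages",
  direction: "in",
  eventHubName: "my-event-hub",
  connection: "NRLOGS_EVENTHUB_CONNECTION",
  consumerGroup: "$default",
};
console.log(checkTriggerBinding(sampleBinding, "my-event-hub"));
```

In this example only the consumer group is reported, which is the casing mistake described above.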

I’m unable to see the logs in New Relic. In some cases, it may take a few minutes before the data shows up. If you are still not seeing any data after waiting, make sure the NR_INGEST_API_KEY application setting holds the correct New Relic license key.
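One way to check the license key independently of Azure is to send a single test log line from your own machine. The sketch below reuses the endpoint and Api-Key header from the function in step eight. The helper name buildTestRequest, the test message, and the logtype value are hypothetical. The request is only sent when NR_INGEST_API_KEY is set in your shell.

```javascript
const https = require("https");

// Hypothetical helper: build the request options and body for one test log line.
function buildTestRequest(apiKey) {
  return {
    options: {
      hostname: "log-api.newrelic.com",
      port: 443,
      path: "/log/v1",
      method: "POST",
      headers: { "Content-Type": "application/json", "Api-Key": apiKey },
    },
    body: JSON.stringify({
      message: "Test log line from the Azure forwarding guide",
      logtype: "MSAzure_ConnectivityTest", // hypothetical value, chosen for easy filtering
    }),
  };
}

// Only send when a key is present in the environment.
if (process.env.NR_INGEST_API_KEY) {
  const { options, body } = buildTestRequest(process.env.NR_INGEST_API_KEY);
  const req = https.request(options, (res) => {
    // A 2xx status means the request was accepted; a 4xx status suggests
    // a problem with the key or the request itself.
    console.log(`Logs API responded with HTTP ${res.statusCode}`);
    res.resume(); // discard the response body
  });
  req.on("error", (err) => console.error(`Request failed: ${err.message}`));
  req.end(body);
}
```

Run the file with node after exporting NR_INGEST_API_KEY, then search for the test message in the New Relic Logs UI. If it appears there but your Azure logs do not, the problem is more likely on the Azure side.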

Summary

Let’s review what you’ve learned in this blog, now that you’ve successfully made it through the example.

First, you enabled debugging in Azure by using the Diagnostic Logs feature. This gave you access to the detailed logs of your application.

Second, you set up Logs Streaming using Azure's native services. Streaming logs to a central location makes it easier to access and analyze logs across multiple Azure resources. This feature is handy for large-scale applications because it helps you identify trends and patterns in the data.

Finally, you established log forwarding to New Relic through the same native services. This helps you analyze logs in real time, giving you a faster turnaround for detecting and fixing issues in your application.

Azure Diagnostic Logs, Logs Streaming, and log forwarding to New Relic can be used for all your Azure applications, making them a cost-effective and efficient way to manage your logs.

Next steps

- For more about the New Relic Log UI, read our documentation.

- Haven’t signed up for New Relic yet? Enjoy the benefits of enhanced log monitoring, and explore other capabilities of New Relic for free when you sign up.

- If you do not want to perform a manual setup, explore the Azure Native New Relic Service.

The views expressed on this blog are those of the author and do not necessarily reflect the views of New Relic. Any solutions offered by the author are environment-specific and not part of the commercial solutions or support offered by New Relic. Please join us exclusively at the Explorers Hub (discuss.newrelic.com) for questions and support related to this blog post. This blog may contain links to content on third-party sites. By providing such links, New Relic does not adopt, guarantee, approve or endorse the information, views or products available on such sites.