Most organizations struggle to explain how they measure MTTR, let alone whether their recent improvements actually moved the needle. Usually, it's because they're focused on the wrong part of the clock. Detection and resolution get the attention, but research popularized in The Visible Ops Handbook found that roughly 80% of MTTR is spent just identifying which change or component caused the outage in the first place.

Without the telemetry to pinpoint what changed and where, teams stay stuck in reactive firefighting. This guide gives technical leaders a blueprint for standardizing their metrics, mapping their incident stages, and using unified observability to cut the identification bottleneck that keeps systems down.

Key takeaways

- To improve MTTR, establish a consistent definition for “repair,” standardize timestamps, and keep that definition stable over time.

- Most MTTR is lost in a few stages of your incident lifecycle, so use data to find those stages instead of guessing where to optimize.

- Clear severity levels, ownership, and escalation paths cut decision time and confusion when everything is on fire.

- Runbook automation, solid on-call practices, and AI-powered anomaly detection reduce detection, diagnosis, and remediation time.

- Unified observability and incident management tools help you correlate telemetry, reduce context-switching, and support continuous MTTR improvement.

What is MTTR, and why does it matter for reliability?

Mean Time to Repair (MTTR) is the industry-standard metric for how long it takes to recover from an incident. In practice, what teams (and customers) actually care about is how long service is degraded, which is why the definition of "repair" varies across organizations.

Improving MTTR isn’t just about fixing incidents faster. It’s about reducing the time your systems and business operate in a degraded state. Uptime Institute’s 2025 analysis shows most outages cost at least $100,000, and the most severe incidents regularly exceed $1 million.

What does improving MTTR involve?

Reducing MTTR is about improving every stage of your incident response system.

MTTR spans multiple phases: detection, acknowledgment, diagnosis, mitigation, and validation. Most teams don’t have clear visibility into all these stages, making it difficult to identify where time is actually lost.

Improving MTTR means identifying friction across this lifecycle and systematically removing it, using data rather than assumptions.

How to calculate MTTR

Before you can improve MTTR, you need to define exactly what you’re measuring and make sure everyone calculates it the same way.

Step 1. Align on a definition

Historically, MTTR has stood for a variety of terms, all with slightly different implications:

- Mean Time to Repair: Time from failure start to when the underlying defect is fixed

- Mean Time to Recovery: Time from impact start to when service is back to an acceptable state (even if the root cause isn’t fully fixed)

- Mean Time to Resolution: Time from incident creation to when all follow-up tasks are completed and the ticket is closed

In practice, most teams—and this guide—use MTTR to mean "time from impact start to restored service," which technically aligns with Mean Time to Recovery. That's what customers feel, and it's the most actionable version of the metric. Whichever definition you pick, the critical thing is that every team in your organization calculates it the same way.

Step 2. Calculate it consistently

To make MTTR meaningful and comparable over time:

- Write down your MTTR definition in a runbook or reliability standards doc. Include start and end events (for example, “first user-impacting alert fired” to “error rate back under SLO for 15 minutes”).

- Standardize timestamps across tools. Ideally, your observability platform or incident management system becomes the source of truth for incident start, acknowledgment, mitigation, and repair times.

- Exclude noisy outliers deliberately. Decide how you’ll handle one-off incidents that skew your average times (for example, a test incident that wasn't properly closed).

- Track MTTR by key dimensions, such as service, severity, and time of day or week. A single overall MTTR isn’t enough to drive targeted improvement.
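The rules above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the `Incident` fields, the `exclude` flag, and the sample data are all assumptions made for the example, and MTTR here means minutes from impact start to restored service.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime
from statistics import mean


@dataclass
class Incident:
    # Hypothetical record shape; adapt the fields to your own incident data.
    service: str
    severity: str
    impact_start: datetime  # e.g. first user-impacting alert fired
    restored: datetime      # e.g. error rate back under SLO for 15 minutes
    exclude: bool = False   # deliberately excluded outlier (test incident, etc.)


def mttr_minutes(incidents):
    """Mean minutes from impact start to restored service, skipping excluded incidents."""
    durations = [
        (i.restored - i.impact_start).total_seconds() / 60
        for i in incidents
        if not i.exclude
    ]
    return mean(durations) if durations else None


def mttr_by(incidents, key):
    """Group incidents by a dimension and compute MTTR for each group."""
    groups = defaultdict(list)
    for incident in incidents:
        groups[key(incident)].append(incident)
    return {name: mttr_minutes(group) for name, group in groups.items()}


incidents = [
    Incident("checkout", "sev1", datetime(2025, 3, 1, 10, 0), datetime(2025, 3, 1, 10, 40)),
    Incident("checkout", "sev2", datetime(2025, 3, 4, 14, 0), datetime(2025, 3, 4, 15, 0)),
    Incident("search", "sev2", datetime(2025, 3, 7, 9, 0), datetime(2025, 3, 7, 9, 20)),
    # A test incident left open for three days, excluded on purpose.
    Incident("search", "sev3", datetime(2025, 3, 8, 9, 0), datetime(2025, 3, 11, 9, 0), exclude=True),
]

overall = mttr_minutes(incidents)                           # 40.0 minutes
by_service = mttr_by(incidents, key=lambda i: i.service)    # checkout: 50.0, search: 20.0
by_severity = mttr_by(incidents, key=lambda i: i.severity)  # sev3 has no counted incidents
```

Keeping the exclusion as an explicit flag on the record, rather than silently dropping rows, leaves an audit trail of which incidents were left out.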

Unified observability platforms can streamline MTTR tracking by providing consistent incident markers based on telemetry and alerts—then you aren’t manually stitching together timestamps from three tools. When your alerts, logs, and traces live in one place, it’s much easier to define “start” and “end” in a way that’s repeatable.
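One way to make the end event repeatable is to derive it from telemetry instead of from when someone remembered to update the ticket. The sketch below assumes per-minute error-rate samples and a hypothetical 1% SLO threshold; the function name and data shape are illustrative only.

```python
def restored_at(samples, slo=0.01, stable_minutes=15):
    """Return the minute at which the error rate has stayed under `slo`
    for `stable_minutes` consecutive samples, or None if that never happens.

    `samples` is an ordered list of (minute, error_rate) pairs, one per minute.
    """
    streak = 0
    for minute, error_rate in samples:
        # Any sample at or above the SLO resets the stability streak.
        streak = streak + 1 if error_rate < slo else 0
        if streak >= stable_minutes:
            return minute
    return None


# Ten bad minutes, a brief recovery, one relapse at minute 13, then stable.
rates = [0.05] * 10 + [0.005] * 3 + [0.02] + [0.005] * 16
samples = list(enumerate(rates))

end_minute = restored_at(samples)  # 28: the streak starts at minute 14
```

Note that the relapse at minute 13 resets the clock, so a short dip under the SLO does not count as recovery.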

How to identify MTTR bottlenecks in your incident lifecycle

Once you’ve defined MTTR consistently, the next step is understanding where that time actually goes. Response teams often assume they’re slow at diagnosis, only to discover the real problem is delayed detection or unclear ownership.

To see where you’re losing time, map your incident lifecycle end to end. A typical lifecycle looks something like this:

- Service degrades or fails

- Monitoring detects the issue and creates an alert

- On-call receives and acknowledges the alert

- Responders triage, gather context, and form a hypothesis

- Responders implement a mitigation or fix

- Service is restored and confirmed stable

- Post-incident review and follow-ups are completed

With separate systems for metrics, logs, paging, and ticketing, you often end up with gaps in this timeline. For example, you can see when the alert fired and when the incident ticket closed, but not how long responders spent bouncing between dashboards or when the first mitigation was applied.

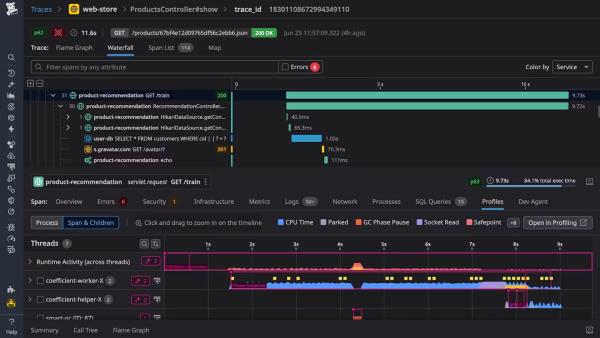

Unified telemetry platforms reduce those blind spots by correlating alerts with metrics, traces, and logs in one place, making it easier to reconstruct an incident timeline with real data instead of guesswork.

Baseline each stage of the incident lifecycle

To improve MTTR, measure each lifecycle stage independently. Start by tracking five key timestamps per incident:

- Impact start: When users or SLOs were affected

- Alert acknowledged: When someone took ownership

- First mitigation applied: Rollback, feature flag, or scale-up

- Service restored: When health indicators stabilized

- Postmortem completed: When follow-ups were documented

Use your incident management and observability tooling to consistently capture these points, then calculate the durations for:

- Detection

- Acknowledgment

- Diagnosis

- Mitigation

- Stabilization

- Learning time

Build dashboards that show median and percentile values for each stage, broken down by severity and service. Patterns will emerge quickly, such as Sev1 incidents with fast acknowledgment but slow mitigation or a specific service consistently experiencing long detection times. Once you've baselined these stages, you know exactly where to focus.
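As a rough sketch of the per-stage baseline, the Python below turns timestamps into stage durations and summarizes them. It rests on two assumptions: an extra `alert_fired` timestamp (beyond the five listed above) so detection can be separated from acknowledgment, and a particular mapping of timestamp pairs to stages. All field names and sample numbers are hypothetical, and the breakdown by severity and service is left out for brevity.

```python
import math
from datetime import datetime, timedelta
from statistics import median

# Assumed mapping of stages to (start, end) timestamps; adjust to your definitions.
STAGES = [
    ("detection", "impact_start", "alert_fired"),
    ("acknowledgment", "alert_fired", "acknowledged"),
    ("diagnosis", "acknowledged", "first_mitigation"),
    ("mitigation", "first_mitigation", "restored"),
    ("learning", "restored", "postmortem_done"),
]
FIELDS = ["impact_start", "alert_fired", "acknowledged",
          "first_mitigation", "restored", "postmortem_done"]


def stage_minutes(timestamps):
    """Duration of each stage in minutes for one incident."""
    return {
        stage: (timestamps[end] - timestamps[start]).total_seconds() / 60
        for stage, start, end in STAGES
    }


def percentile(values, pct):
    """Nearest-rank percentile: smallest value with at least pct% of data at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def summarize(incidents):
    """Median and 90th percentile per stage across incidents."""
    per_stage = {stage: [] for stage, _, _ in STAGES}
    for timestamps in incidents:
        for stage, minutes in stage_minutes(timestamps).items():
            per_stage[stage].append(minutes)
    return {
        stage: {"median": median(values), "p90": percentile(values, 90)}
        for stage, values in per_stage.items()
    }


def incident(start, offsets):
    """Build one incident from minute offsets relative to impact start."""
    return {field: start + timedelta(minutes=m) for field, m in zip(FIELDS, offsets)}


start = datetime(2025, 3, 1, 10, 0)
incidents = [
    incident(start, [0, 4, 6, 30, 45, 2880]),
    incident(start, [0, 12, 15, 25, 40, 1440]),
    incident(start, [0, 2, 3, 50, 60, 4320]),
]
summary = summarize(incidents)
```

In this made-up sample, acknowledgment is consistently quick while diagnosis has a long tail, which is the kind of pattern the dashboards are meant to expose.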

6 incident management strategies to improve MTTR fast

With a baseline in place, you can focus on changes that address your real bottlenecks instead of following generic best practices.

1. Define clear severity levels and escalation paths

When something breaks, you need to know how bad it is and who needs to be involved. You can answer these in advance with a simple severity model that includes business impact and response expectations:

- Sev1: Broad customer impact or critical revenue path broken; target: 5-minute acknowledgment, 30-minute mitigation

- Sev2: Significant degradation with workarounds; target: 15-minute acknowledgment, 2-hour mitigation

- Sev3/4: Limited or internal impact, handled during business hours

Pair each severity level with predefined escalation paths: who serves as the Incident Commander, which team members are paged immediately, and when to involve leadership. Your incident management system should encode these rules so the right people are notified automatically, shaving minutes off acknowledgment and triage time.
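To illustrate what encoding these rules might look like, here is a small Python sketch. The acknowledgment and mitigation targets come from the severity model above; the role names, the `notify_leadership` flag, and the structure itself are assumptions for the example, not the configuration format of any particular incident management tool.

```python
from dataclasses import dataclass
from datetime import timedelta
from typing import Optional, Tuple


@dataclass(frozen=True)
class SeverityPolicy:
    ack_target: Optional[timedelta]         # None means no paging target
    mitigation_target: Optional[timedelta]
    page_immediately: Tuple[str, ...]       # hypothetical role names
    notify_leadership: bool


POLICIES = {
    "sev1": SeverityPolicy(
        timedelta(minutes=5), timedelta(minutes=30),
        ("incident_commander", "primary_oncall", "secondary_oncall"), True,
    ),
    "sev2": SeverityPolicy(
        timedelta(minutes=15), timedelta(hours=2), ("primary_oncall",), False,
    ),
    # Sev3/4: handled during business hours, so nobody is paged.
    "sev3": SeverityPolicy(None, None, (), False),
}


def breached_targets(severity, time_to_ack, time_to_mitigate):
    """Return which response targets an incident missed for its severity."""
    policy = POLICIES[severity]
    breaches = []
    if policy.ack_target is not None and time_to_ack > policy.ack_target:
        breaches.append("acknowledgment")
    if policy.mitigation_target is not None and time_to_mitigate > policy.mitigation_target:
        breaches.append("mitigation")
    return breaches


# A Sev1 acknowledged in 7 minutes and mitigated in 20 misses only the ack target.
missed = breached_targets("sev1", timedelta(minutes=7), timedelta(minutes=20))
```

Keeping the policy as data makes it easy to review in one place and to report on target breaches alongside MTTR.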

2. Implement runbook automation for common incidents

Manual playbooks for recurring issues, like cache problems, disk alerts, and stuck deployments, burn time and increase variability.

Start by identifying your most frequent incident types, then document diagnostics, mitigation options, and common commands.

Next, automate the repetitive pieces: turn commands into scripts, integrate runbooks with ChatOps tools, and use approvals for higher-risk actions. Over time, this becomes runbook automation that can restart services, roll back deployments, or toggle feature flags with a single action—directly reducing diagnosis and mitigation time.
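A minimal sketch of that idea in Python: a registry of named actions where higher-risk ones are blocked until someone approves them. The action functions are placeholders that only return a message, and every name here is hypothetical; a real setup would call your orchestration or deployment tooling instead.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class RunbookAction:
    description: str
    run: Callable[[str], str]
    needs_approval: bool = False  # gate higher-risk actions


def restart_service(target: str) -> str:
    # Placeholder: a real action would call your orchestration tooling.
    return f"restarted {target}"


def rollback_deployment(target: str) -> str:
    # Placeholder: a real action would trigger your CI/CD rollback.
    return f"rolled back {target}"


RUNBOOK = {
    "restart": RunbookAction("Restart a stuck service", restart_service),
    "rollback": RunbookAction(
        "Roll back the latest deployment", rollback_deployment, needs_approval=True
    ),
}


def execute(action_name: str, target: str, approved_by: Optional[str] = None) -> str:
    """Run a runbook action, refusing gated actions that lack an approver."""
    action = RUNBOOK[action_name]
    if action.needs_approval and approved_by is None:
        return f"blocked: '{action_name}' on {target} needs approval"
    result = action.run(target)
    suffix = f" (approved by {approved_by})" if approved_by else ""
    return result + suffix


print(execute("restart", "cache-01"))
print(execute("rollback", "checkout"))
print(execute("rollback", "checkout", approved_by="incident-commander"))
```

The same `execute` function could sit behind a chat command, so that the approval and the action both land in the incident channel's history.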

3. Establish clear incident ownership and communication protocols

Incidents drag on when nobody's clearly in charge or communication gets chaotic. Define a lightweight incident command structure with, at minimum, an Incident Commander (decision-making and coordination), primary responders (technical investigation), and a communications lead for larger disruptions.

Combine that with simple protocols:

- Dedicated incident channels for Sev2+

- Consistent status update templates

- Logging decisions directly in the incident record

Integrated incident management and observability tools reduce context-switching and shorten decision cycles.

4. Optimize on-call readiness and handoff procedures

Perfect dashboards and runbooks don't help if the person on call can't use them effectively. Improve on-call effectiveness by training for incidents through regular game days, standardizing handoffs with written summaries of active risks, right-sizing rotations to prevent burnout, and documenting clear expectations around response time and escalation.

Integrating on-call schedules with your observability and incident tooling ensures alerts reach the right person every time, significantly reducing acknowledgment and triage times.

5. Leverage AI-powered anomaly detection to reduce detection time

Static alert thresholds tend to be either too noisy or too quiet. AI-powered anomaly detection learns normal behavior and surfaces meaningful deviations. Modern observability platforms like New Relic offer AI capabilities that:

- Detect unusual patterns in latency, error rate, and throughput

- Correlate anomalies across services, infrastructure, logs, and traces

- Group related alerts to reduce noise

When anomalies surface with context like linked dashboards, relevant logs, and recent deployments, you reduce both detection and diagnosis time.
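To make the contrast with static thresholds concrete, here is a deliberately simple statistical stand-in: a rolling z-score that flags values far from the recent baseline. It is not how New Relic or any other vendor implements anomaly detection; the window size, threshold, and sample latencies are assumptions chosen for illustration.

```python
from collections import deque
from statistics import mean, pstdev


def detect_anomalies(values, window=10, z_threshold=3.0):
    """Return indexes of values that deviate from the trailing window's mean
    by more than `z_threshold` standard deviations."""
    history = deque(maxlen=window)
    anomalies = []
    for index, value in enumerate(values):
        # Only score a point once a full window of history is available.
        if len(history) == window:
            baseline = mean(history)
            spread = pstdev(history)
            if spread > 0 and abs(value - baseline) / spread > z_threshold:
                anomalies.append(index)
        # Flagged values still enter the history, which widens the baseline afterward.
        history.append(value)
    return anomalies


latency_ms = [100, 102, 98, 101, 99, 103, 97, 100, 102, 98, 101, 250, 99, 100]
flagged = detect_anomalies(latency_ms)  # [11], the 250 ms spike
```

Because the baseline moves with the data, the same code works for a service that normally runs at 100 ms and one that normally runs at 900 ms, which a single static threshold cannot do.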

6. Conduct blameless postmortems with actionable follow-through

Every incident you don't learn from is a missed chance to lower MTTR. Effective postmortems should include:

- A factual timeline

- Analysis of what slowed detection or remediation

- Identification of missing guardrails or observability signals

- Specific action items with owners and due dates

Keep the focus on systems and processes rather than individuals, then track whether follow-up actions get completed and whether they actually improved MTTR.

Observability and monitoring tools that automatically capture timelines, link to telemetry, and provide templates make it easier to run consistent postmortems and embed learning into your workflow.

Which tools should you use to improve MTTR?

Processes and culture matter, but your tools determine how effectively your team can execute under pressure. You don’t need a specific vendor to improve MTTR—you need the right platform and capabilities for your team. Use the following evaluation checklist when assessing your current stack or considering new platforms.

Observability platforms

The right observability platform replaces tool-hopping with a single, unified view. That view lets you pinpoint which dependencies are misbehaving, diagnose faster, and restore service without losing time to context-switching.

Key features include:

- Unified telemetry: Consolidate metrics, logs, and traces in a single view to diagnose issues without switching between tools or losing critical context.

- Contextual navigation: Jump directly from an alert to the specific service, dashboard, or trace that's causing the problem, eliminating manual hunting and reducing diagnosis time.

- Service maps: See how services connect and which downstream dependencies are affected, so you can quickly assess impact scope and prioritize remediation efforts.

- AI-powered anomaly detection: Reduce alert noise by clustering correlated signals into a single incident, helping you focus on the root cause instead of triaging dozens of redundant notifications.

Platforms like New Relic consolidate these capabilities to reduce context-switching during high-pressure incidents.

Incident management systems

Incident management tools coordinate the human response by ensuring the right people know about the right problems—fast.

Key features include:

- On-call scheduling with escalation policies: Ensure alerts reach the right person at the right time, eliminating delays caused by manual routing or missed notifications.

- Alert deduplication and grouping: Reduce noise by consolidating related alerts into single incidents, so responders can focus on resolving the incident instead of triaging duplicates.

- Chat and collaboration integrations: Centralize incident communication in the tools your team already uses, reducing context switching and keeping everyone aligned.

- Automated post-incident reporting: Capture timelines and key data automatically, making it easier to run consistent postmortems and track follow-through without manual effort.

Strong integration between your observability and incident management systems means alerts become incidents with rich context, so responders can start diagnosing immediately instead of hunting for data.
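As a sketch of what deduplication and grouping can mean in practice, the Python below folds alerts for the same service into one incident when they fire close together, and counts repeated checks instead of listing them again. The grouping rule, the 10-minute window, and the alert fields are assumptions for the example; real tools typically use richer signals.

```python
from datetime import datetime, timedelta


def group_alerts(alerts, window=timedelta(minutes=10)):
    """Group alerts into incidents by service.

    An alert joins the service's open incident if it fires within `window`
    of that incident's latest alert; otherwise it opens a new incident.
    Repeated checks are counted rather than stored as separate entries.
    """
    incidents = []
    open_by_service = {}
    for alert in sorted(alerts, key=lambda a: a["fired_at"]):
        incident = open_by_service.get(alert["service"])
        if incident is None or alert["fired_at"] - incident["last_seen"] > window:
            incident = {
                "service": alert["service"],
                "first_seen": alert["fired_at"],
                "last_seen": alert["fired_at"],
                "checks": {},
            }
            incidents.append(incident)
            open_by_service[alert["service"]] = incident
        incident["last_seen"] = alert["fired_at"]
        check = alert["check"]
        incident["checks"][check] = incident["checks"].get(check, 0) + 1
    return incidents


t0 = datetime(2025, 3, 1, 10, 0)
alerts = [
    {"service": "checkout", "check": "error_rate", "fired_at": t0},
    {"service": "checkout", "check": "error_rate", "fired_at": t0 + timedelta(minutes=1)},
    {"service": "checkout", "check": "latency", "fired_at": t0 + timedelta(minutes=3)},
    {"service": "search", "check": "latency", "fired_at": t0 + timedelta(minutes=4)},
    {"service": "checkout", "check": "error_rate", "fired_at": t0 + timedelta(minutes=45)},
]
incidents = group_alerts(alerts)  # five alerts collapse into three incidents
```

Here the first three checkout alerts become one incident with two distinct checks, while the alert 45 minutes later opens a new one.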

Automation and remediation tools

The right automation tools eliminate manual toil during incidents, reduce human error under pressure, and let your team focus on complex decisions instead of repetitive tasks.

Key features include:

- Runbook automation and workflow engines: Execute common remediation tasks automatically with built-in safety checks, reducing manual toil and human error during high-pressure incidents.

- Safe rollback mechanisms: Integrate with your CI/CD pipelines to quickly revert problematic deployments, minimizing the time between detection and restoration.

- Feature flag systems: Disable problematic functionality instantly without requiring a full deployment, giving you a fast mitigation path when new features cause issues.

- ChatOps integrations: Expose common operations as simple commands in your team's chat tools, reducing context switching and making remediation actions accessible to all responders.

When wired into your observability and incident management stack, these tools reduce handoffs and manual work, directly lowering MTTR.

Start improving MTTR with data-driven incident response

Improving MTTR means systematically tightening every stage of your incident lifecycle. Start by defining MTTR clearly, baselining each stage with real data, and focusing on the changes that remove the most friction.

From there, use tooling that supports data-driven incident response. A unified observability platform like New Relic gives teams consistent visibility, correlated telemetry, and AI-powered detection—so you can understand incidents faster and resolve them with confidence.

Request a demo to explore how unified observability can support your MTTR and system reliability goals.

FAQs about how to improve MTTR

What is a good MTTR for my team or service?

A “good” MTTR is one that aligns with your business impact and reliability goals. Start by setting MTTR targets per severity level and critical service. For customer-facing, revenue-critical paths, you may aim for minutes, while internal tools might tolerate longer recovery times with less operational overhead.

What's the difference between MTTR, MTTD, and MTBF?

MTTR measures how long it takes you to recover from an incident once it starts. MTTD (Mean Time to Detect) measures how long it takes to notice the problem. MTBF (Mean Time Between Failures) measures the average time between failures, which tells you how often they occur. Together, they describe reliability, detection, and response performance.

Should we track MTTR by incident severity or by service?

You should track MTTR by both severity and service because each view answers different questions. By severity, you see how quickly you recover from high-impact events. By service, you find specific systems that are slow to restore. Combining both views gives you clearer, more actionable improvement targets.
