Snowflake to Apache Iceberg: How we migrated 1,000+ datasets and cut our data costs at New Relic

At New Relic, we run one of the largest observability platforms in the world. That means our internal data platform isn't a side concern; it's infrastructure we depend on to operate at scale, every day. For a long time, Snowflake was a core part of that platform. Then the economics stopped working. As our data platform grew, two things became impossible to ignore: the cost curve was moving in the wrong direction, and we were deeply locked into a single vendor's storage, compute, and query model. So we decided to migrate to an open, cloud-native architecture and decouple every layer. But migrating a production data stack is a high-stakes operation. No engineering team can afford to "fly blind" when moving 1,000+ critical datasets.

A migration of this scale only works if you have complete visibility across every pipeline and cluster, from your infrastructure health to the row-level integrity of your data. We turned to our own observability tools to ensure that visibility.

By instrumenting the whole process end-to-end, we were able to move fast without compromising reliability.

The result: we migrated over 1,000 datasets, both batch and streaming, without disrupting product delivery, and achieved a 35-52% reduction in our annual data platform spend. More importantly, we built a platform that can now scale efficiently without costs ballooning. This blog is the story of how we achieved those results.

Why we moved on from Snowflake

Snowflake had served us well early on. It let us move fast, iterate quickly, and skip a lot of infrastructure complexity in our earlier days. But as our platform scaled, two structural problems became impossible to ignore.

The first was the cost curve. Managed data warehouses bundle storage, compute, and query into a single model, which means you pay for the package whether you need all of it or not. As our data volumes grew, costs grew out of proportion: every new product launch or increase in event volume triggered a step-function cost jump. There's a reason engineers have started calling it the Snowflake Tax: the more your data grows, the more you pay, with no way to decouple the cost from the volume.

The second was lock-in. With storage, compute, and query tightly coupled to one vendor, we had no clean way to adopt a different compute engine, integrate open ML tooling, or ensure our data was portable. Any architectural change meant going through Snowflake. That constraint was manageable early on. At scale, it started limiting what we could build.

Our goals going into the migration were explicit: decouple storage from compute, lower both steady-state and marginal costs, maintain performance and reliability, and gain deep observability across the full data lifecycle.

The architecture we built

As mentioned earlier, the guiding principle behind our new stack was clean separation of storage, compute, and query so that each layer can scale independently. Here's how we structured it:

Storage & catalog

We chose Apache Iceberg as our open table format because it gave us everything we relied on Snowflake to provide, including ACID transactions, schema evolution, and time travel, but without the vendor dependency. Our data is stored in an open format that any compatible engine can read.

For the underlying storage and catalog, we use Amazon S3 with AWS Glue. This brought our storage costs down to raw object storage economics, with a catalog we fully control.
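To make the storage and catalog layer concrete, here is a minimal sketch of the Spark settings that typically register an Iceberg catalog backed by AWS Glue and S3. The catalog name `lake` and the bucket path are placeholders of ours, not values from our production setup, and the exact settings you need depend on your Iceberg and Spark versions.

```python
def iceberg_glue_spark_conf(catalog: str, warehouse: str) -> dict[str, str]:
    """Return Spark settings that register an Iceberg catalog backed by AWS Glue."""
    prefix = f"spark.sql.catalog.{catalog}"
    return {
        # Enables Iceberg's SQL extensions in the Spark session.
        "spark.sql.extensions":
            "org.apache.iceberg.spark.extensions.IcebergSparkSessionExtensions",
        # Registers `catalog` as an Iceberg catalog in Spark.
        prefix: "org.apache.iceberg.spark.SparkCatalog",
        # Uses AWS Glue as the catalog implementation.
        f"{prefix}.catalog-impl": "org.apache.iceberg.aws.glue.GlueCatalog",
        # Reads and writes data files through Iceberg's S3 file IO.
        f"{prefix}.io-impl": "org.apache.iceberg.aws.s3.S3FileIO",
        # Root S3 location for table data and metadata (placeholder value).
        f"{prefix}.warehouse": warehouse,
    }


# Hypothetical catalog name and bucket, for illustration only.
conf = iceberg_glue_spark_conf("lake", "s3://example-bucket/warehouse")
for key, value in sorted(conf.items()):
    print(f"{key}={value}")
```

Each pair can be passed to `SparkSession.builder.config(key, value)` or to `spark-submit` as `--conf key=value`. The Iceberg Spark runtime and AWS dependencies also need to be on the classpath.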

Compute

We run Apache Spark on Kubernetes for both batch and streaming workloads. Running on Kubernetes means compute scales with actual demand, and costs are driven by real job execution, not pre-purchased warehouse credits.

Orchestration

We orchestrate pipelines with Apache Airflow on Kubernetes. This gives us full ownership of the orchestration layer and complete visibility into every DAG run, retry, and execution time.

Streaming

We use Apache Kafka and Kafka Connect to write streaming data directly to Iceberg. This eliminated the intermediary translation step we previously needed, reducing both latency and cost in our streaming pipelines.
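As an illustration of what a direct Kafka-to-Iceberg write can look like, the sketch below builds a Kafka Connect REST payload for an Iceberg sink. It assumes the Apache Iceberg project's sink connector with a Glue catalog; this post does not specify which connector or settings we run, so treat the class name, property names, topic, and table as assumptions to verify against the connector version you install.

```python
import json


def iceberg_sink_connector(name: str, topic: str, table: str, warehouse: str) -> dict:
    """Build a Kafka Connect REST payload for an Iceberg sink (illustrative values)."""
    return {
        "name": name,
        "config": {
            # Assumed connector: the Apache Iceberg project's Kafka Connect sink.
            "connector.class": "org.apache.iceberg.connect.IcebergSinkConnector",
            # Kafka topic to read from.
            "topics": topic,
            # Destination Iceberg table, as namespace.table.
            "iceberg.tables": table,
            # Catalog settings, assuming an AWS Glue catalog over S3.
            "iceberg.catalog.catalog-impl": "org.apache.iceberg.aws.glue.GlueCatalog",
            "iceberg.catalog.io-impl": "org.apache.iceberg.aws.s3.S3FileIO",
            "iceberg.catalog.warehouse": warehouse,
        },
    }


# Hypothetical names, for illustration only.
payload = iceberg_sink_connector(
    name="product-events-iceberg-sink",
    topic="product-events",
    table="analytics.product_events",
    warehouse="s3://example-bucket/warehouse",
)
print(json.dumps(payload, indent=2))
```

A payload of this shape is what you would send to the Kafka Connect REST API's `POST /connectors` endpoint to create the connector.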

Observability

We instrumented the entire stack with New Relic from day one. We treat observability as a foundational layer, not an afterthought.

The economics of this architecture are fundamentally different from a managed warehouse. Storage runs at raw S3 rates. Compute is driven by actual Spark execution. But an architecture is only as good as your ability to operate it, and migrating to it safely at our scale is where the real work began.

How we migrated 1,000+ datasets without breaking anything

The scope was significant: over 1,000 datasets spanning batch and streaming pipelines, high-volume product telemetry, downstream analytics, and critical business datasets with strict SLAs. A big-bang cutover wasn't a strategy we were willing to consider.

Instead, we ran Snowflake and Iceberg in parallel through the transition, following three steps:

- Parallel operation: We stood up Iceberg pipelines alongside existing Snowflake pipelines, writing to both simultaneously.

- Row-level parity validation: Before shifting any consumers to Iceberg, we validated that data matched at the row level. This wasn't sampling. It was a hard requirement before any pipeline was considered ready for cutover.

- Incremental consumer migration: Once parity was confirmed for a dataset, consumers moved incrementally rather than all at once. This kept the blast radius small and preserved clean rollback paths throughout.
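The row-level parity check in the second step can be sketched as a multiset comparison of row fingerprints. This is a simplified, in-memory illustration with made-up rows, not our production implementation; at real volumes the same idea would run inside the compute engine rather than in a single Python process.

```python
import hashlib
from collections import Counter


def row_fingerprint(row: dict) -> str:
    """Hash a row with its columns in sorted order, so column order is irrelevant."""
    canonical = "\x1f".join(f"{key}={row[key]!r}" for key in sorted(row))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def parity_report(source_rows, target_rows) -> dict:
    """Compare two row sets as multisets, so duplicate rows are counted too."""
    source = Counter(row_fingerprint(r) for r in source_rows)
    target = Counter(row_fingerprint(r) for r in target_rows)
    missing = source - target  # rows the target lacks, including lost duplicates
    extra = target - source    # rows the target has but the source does not
    return {
        "source_rows": sum(source.values()),
        "target_rows": sum(target.values()),
        "missing_in_target": sum(missing.values()),
        "unexpected_in_target": sum(extra.values()),
        "in_parity": not missing and not extra,
    }


# Made-up sample rows: the target is missing one duplicate of id=2.
snowflake_rows = [{"id": 1, "v": "a"}, {"id": 2, "v": "b"}, {"id": 2, "v": "b"}]
iceberg_rows = [{"v": "a", "id": 1}, {"id": 2, "v": "b"}]

report = parity_report(snowflake_rows, iceberg_rows)
print(report)
```

Comparing multisets rather than sets matters here: a pipeline that silently drops or duplicates rows would pass a set comparison but fail this one.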

Running two systems in parallel creates its own operational challenge: you now have twice the infrastructure to watch, twice as many pipelines that can fail, and twice the surface area where a problem can hide. At New Relic, we understand better than anyone that this is exactly the kind of complexity observability exists to tame, so we made sure we had full visibility across both systems every step of the way.

Observability as a migration strategy

At New Relic, full-stack observability isn't something we bolt on; it's how we operate. So when we took on a migration of this size, instrumenting every layer of the stack with New Relic was never a question.

We instrumented across three layers:

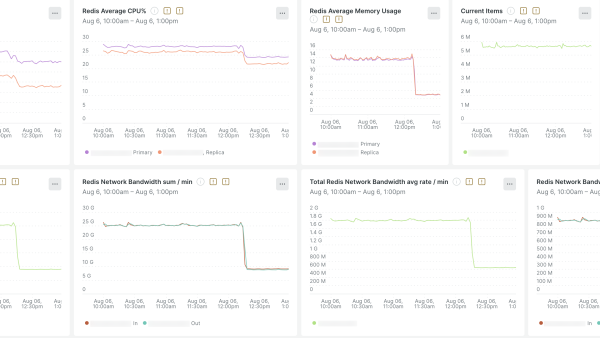

- Infrastructure visibility: We monitored Kubernetes cluster health, node and pod resource usage, and Spark executor and driver performance throughout. Spark jobs under memory pressure or with shuffle bottlenecks usually degrade silently rather than failing loudly. Having executor metrics in New Relic meant we caught those conditions early and tuned jobs before they became incidents.

- Data pipeline visibility: Airflow DAG execution, retries, and latency gave us a live view of which pipelines were healthy during each cutover window. Kafka throughput, consumer lag, and connector health told us whether streaming pipelines were keeping up. The teams watched these metrics actively during every migration window.

- Data reliability visibility: This was the layer that gave us the confidence to actually decommission Snowflake pipelines. We tracked SLAs and data freshness for every Iceberg dataset, with failure detection tied directly to on-call alerts. When something went wrong, we could trace it from the symptom back to the root cause quickly, whether that was a Spark memory spike, a Kafka consumer lag event, or a node eviction.
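The freshness tracking described in the last layer boils down to comparing each dataset's latest update against its SLA. Here is a minimal sketch of that check; the dataset names, SLA values, and timestamps are invented for illustration, and in practice the result would feed an alerting system rather than a print statement.

```python
from datetime import datetime, timedelta, timezone


def stale_datasets(last_updated: dict, sla: dict, now: datetime) -> dict:
    """Return {dataset: age} for datasets whose latest data is older than their SLA."""
    breaches = {}
    for name, updated_at in last_updated.items():
        age = now - updated_at
        if age > sla[name]:
            breaches[name] = age
    return breaches


# Hypothetical datasets and SLAs, with a fixed clock so the example is repeatable.
now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
last_updated = {
    "events_hourly": now - timedelta(minutes=45),
    "billing_daily": now - timedelta(hours=30),
}
sla = {
    "events_hourly": timedelta(hours=1),
    "billing_daily": timedelta(hours=24),
}

breaches = stale_datasets(last_updated, sla, now)
for name, age in breaches.items():
    print(f"{name} has breached its freshness SLA by {age - sla[name]}")
```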

The result was a single unified view from infrastructure health to data correctness. Engineers didn't need to context-switch between cloud consoles, Kubernetes dashboards, and pipeline tools to understand what was happening. And that clarity is what let us move at the pace we did without compromising reliability.

What we achieved

The most immediate outcome was cost. We reduced our annual Snowflake spend by 35-52% by shifting from proprietary pricing to utility-based cloud infrastructure. Storage now runs at raw object storage economics. Compute scales with actual job execution. New products and increased data volume no longer trigger step-function cost increases, and the marginal cost of growth dropped materially.

Beyond cost, two outcomes stood out. First, our data is now fully open and portable. It lives in a format any compatible engine can read, on infrastructure we control. We can adopt new compute engines, ML tooling, or any Iceberg-compatible query layer without re-platforming. We're not tied to anyone's roadmap or pricing decisions. Second, and most importantly, we now have a platform that scales in our favor. Every new dataset, every new product, every increase in event volume costs less than the one before it. That's the real test of whether a data platform is working for you or against you, and it's the one we're now confident we're passing.

Top takeaways

Migrating off a managed data warehouse at this scale isn't a small undertaking, but the patterns we followed apply broadly. Here's what we'd want anyone considering a similar move to keep in mind:

- The cost curve matters more than the current bill. When your data platform costs scale faster than your product growth, the problem compounds quietly. The right time to act is before it becomes a crisis.

- Open standards are an effective cost strategy. Decoupling storage, compute, and query gives you the ability to optimize each layer independently. That flexibility has a direct dollar value at scale.

- When migrating, run both stacks in parallel and migrate incrementally. A big-bang cutover is a gamble at best. Parallel operation with row-level parity validation and incremental consumer migration is the only approach that keeps your options open at every step.

- Observability makes a complex migration safe. Full visibility across infrastructure, pipelines, and data correctness is more than just a nice-to-have during a migration of this scale. It's what keeps the migration on track.

Next steps

Whether you're managing a growing data platform, planning a migration off a managed warehouse, or simply trying to understand what's happening across a complex data stack, observability is what gives you the confidence to move fast without breaking things. Explore New Relic's Infrastructure Monitoring and Kubernetes Monitoring to build similar visibility into your own data platform.

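The views expressed in this blog are those of the author and do not necessarily reflect the official views of New Relic K.K. This blog may also contain links to external sites. New Relic makes no guarantees regarding the content of those linked sites.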