One of the best parts of my job is the opportunity to work with talented engineering and data science teams that are at the forefront of AI innovation. With IDC forecasting that enterprises will collectively run more than one billion AI agents by 2029, building and deploying these agents at scale with intelligent observability matters more than ever. This is the story of one internal team that faced this scaling challenge head-on, transitioning from manual debugging to integrated AI observability for their production agents.

As our Customer 0, New Relic’s internal engineering and data science team responsible for our SRE agent and our agentic platform has been using New Relic AI Monitoring (AIM) and AI Agent Monitoring to help them understand and optimize both the cost and performance of AI agents within their environment.

The Before: Manual Telemetry and Open-Source Fragmentation

Before adopting AIM, the team tracked their agents’ health manually. Engineers had to write custom code to bake telemetry directly into every service just to capture token counts and model identifiers, which was inefficient and time-consuming. As a result, debugging was a long, manual process.

The After: Integrated Observability and Rapid Onboarding

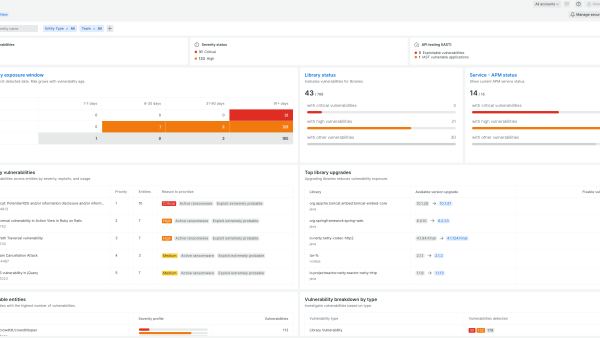

By adopting AIM, the New Relic engineering team simplified their operations with a smooth and quick onboarding process that required only a minor configuration change to our APM Python agent. They use AIM extensively, particularly when updating the SRE agent and observing its performance. Below are a few AIM use cases the team found especially useful in their day-to-day work:

- Model usage and performance. When moving from one model to another (e.g., GPT-4 to Claude), the team uses the model inventory to test the change in staging, comparing the impact on response times and token usage before pushing to production.

- Cost optimization. The team monitors average and P95 token usage to ensure they stay within context limits and budget, especially when deploying high-cost models.

- Debugging. The team uses AIM and AI Agent Monitoring to verify that the agents are performing as expected and making the correct tool calls. They also look at agent-to-agent communication to ensure interactions occur without errors.
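The cost optimization check above boils down to simple arithmetic over per-request token counts. The following is a minimal sketch of that arithmetic, not AIM's implementation or API: the sample numbers, the model names, the `context_limit` value, and the `p95` and `summarize` helpers are all hypothetical, and in practice the figures would come from AIM rather than hand-written lists.

```python
from statistics import mean


def p95(values):
    """Return the nearest-rank 95th percentile of a non-empty list."""
    ordered = sorted(values)
    # Nearest-rank method: rank = ceil(0.95 * n), computed with integers
    # to avoid floating-point rounding surprises.
    rank = (95 * len(ordered) + 99) // 100
    return ordered[rank - 1]


def summarize(tokens_per_request, context_limit):
    """Summarize token usage and flag whether P95 fits the context limit."""
    p95_tokens = p95(tokens_per_request)
    return {
        "avg_tokens": mean(tokens_per_request),
        "p95_tokens": p95_tokens,
        "within_limit": p95_tokens <= context_limit,
    }


# Hypothetical per-request token counts collected in staging for two models.
staging_samples = {
    "model-a": [1200, 1350, 1100, 4800, 1250, 1300, 1400, 1150, 1500, 1280],
    "model-b": [900, 950, 1000, 1020, 980, 940, 1010, 990, 970, 960],
}

# Hypothetical context limit, in tokens, used only for illustration.
CONTEXT_LIMIT = 4000

for model, samples in staging_samples.items():
    print(model, summarize(samples, CONTEXT_LIMIT))
```

Looking at P95 alongside the average matters because a handful of very large requests can exceed a context limit even when the average looks comfortable, as the hypothetical `model-a` samples illustrate.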

Building and Deploying AI Agents with Intelligent Observability

The adoption of AIM allowed the engineering team to deliver better agentic AI solutions faster.

Beyond speed, AIM helps the team optimize their agents’ performance and deliver cost-efficient solutions for our customers. Here are the key benefits for New Relic’s engineering team:

- Faster development with out-of-the-box LLM metrics. Automated tracking replaced hand-written telemetry code, providing data on token usage, response times, and error rates without extra instrumentation work.

- Optimized cost. Automated visibility into token usage allows the team and engineering leadership to manage and justify the spend associated with different LLM providers.

- Continuous observability. AIM runs continuously across staging and production environments. It provides a historical perspective rather than just point-in-time snapshots, which helps with debugging, code optimization, and cost optimization.

With the New Relic platform and our AI observability, the team is moving away from building custom tools and back to what they do best: building the next generation of AI agents. To try New Relic and our AI observability solution, sign up today.

IDC Directions: The AI Supercycle: Where the Next Trillion in Tech Value Will Be Created, April 2026

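The views expressed in this blog are those of the author and do not necessarily reflect the official views of New Relic K.K. This blog may also contain links to external sites. New Relic makes no guarantees regarding the content of those linked sites.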