Your cloud environment produces more telemetry than any person (or team) can reasonably hold in their head. Metrics from managed services, logs from microservices, traces across regions, events from CI/CD and feature flags… it’s a lot. Without a clear set of cloud monitoring best practices, you end up chasing symptoms, flipping between tools, and arguing about whose dashboard is “right” instead of understanding what’s actually happening in your systems.

Effective cloud monitoring isn’t about collecting more data or building prettier dashboards. It’s about unifying telemetry, cutting noise, and giving every engineer the context to make fast, confident decisions—especially when failures span services, regions, and teams.

Key takeaways

- Design monitoring around how you debug and operate systems, not around static dashboards.

- Prioritize a small set of actionable signals tied to user impact and business outcomes.

- Correlate metrics, logs, traces, and events in one place so you can follow a problem end to end.

- Use dynamic, context-aware alerting to reduce noise and focus attention where it matters.

- Standardize telemetry and practices so teams can collaborate effectively during incidents.

6 cloud monitoring best practices for reliable, unified observability

In cloud-native, distributed systems, issues rarely stay neatly contained in one service or region. A slow dependency in one account can surface as a spike in frontend errors in another. To handle that reality, you need cloud monitoring best practices that support cross-service, cross-team collaboration—not just more graphs.

The practices below are focused on reducing alert fatigue, eliminating data silos, and turning reactive firefighting into faster, more confident root cause analysis.

1. Design monitoring around engineer workflows, not dashboards

If you start with dashboards, you’ll end up with monitoring that looks impressive but doesn’t help you under pressure. Start instead with the workflows engineers follow when something is wrong.

Work backward from real scenarios:

- During an incident: What’s the first question you ask? “Is this user-impacting?” “Which services are involved?” “Did we deploy recently?” Make sure you can answer those questions in one or two clicks.

- During performance tuning: How do you identify the slowest service or endpoint? Where do you see its dependencies and their health?

- During change management: How do you compare system behavior before and after a deploy or feature flag change?

Then design your observability views around those flows:

- Service maps that let you jump from an affected endpoint to its dependencies and their telemetry

- Incident views that combine related alerts, recent changes, and a shared timeline

- Built-in “from alert to trace to logs” paths so on-call engineers don’t waste minutes finding the right tool
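The change-management question above (how does behavior differ before and after a deploy?) can be sketched in a few lines. This is a minimal illustration, not a New Relic API: the record shape (`ts`, `error`), the function names, and the 10-minute window are all assumptions made for the example.

```python
def error_rate(requests):
    """Fraction of requests that failed; 0.0 for an empty window."""
    if not requests:
        return 0.0
    return sum(1 for r in requests if r["error"]) / len(requests)


def compare_around_deploy(requests, deploy_ts, window_s=600):
    """Compare error rates in equal-sized windows before and after a deploy."""
    before = [r for r in requests if deploy_ts - window_s <= r["ts"] < deploy_ts]
    after = [r for r in requests if deploy_ts <= r["ts"] < deploy_ts + window_s]
    return error_rate(before), error_rate(after)


# Hypothetical data: one request per second, deploy at t=1000.
# Before the deploy every 100th request fails; afterwards every 10th does.
requests = [
    {"ts": t, "error": t % (100 if t < 1000 else 10) == 0}
    for t in range(400, 1600)
]
before, after = compare_around_deploy(requests, deploy_ts=1000)
print(f"error rate before={before:.2%} after={after:.2%}")
```

Using equal windows on both sides keeps the comparison fair; in practice you would also want enough traffic in each window for the difference to be meaningful.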

When Kmart modernized its digital platforms, for example, it aligned New Relic dashboards and service maps with how its teams actually debugged checkout issues. That reduced the friction between “an alert fired” and “the right engineer sees the right context.”

2. Prioritize actionable signals over raw data volume

Just because you can collect everything doesn’t mean you should. In cloud environments, oversampling and over-instrumenting without a plan lead to high costs and a low signal-to-noise ratio.

Focus first on a concise set of signals tied to user impact and reliability objectives:

- Service-level objectives (SLOs): Define availability and latency targets for your most critical user journeys (e.g., “checkout completes within 2s, 99.5% of the time”).

- Golden signals: Latency, traffic, errors, and saturation for each key service and edge boundary.

- Business KPIs: Conversion rate, successful API calls, or orders per minute to keep technical metrics anchored to actual outcomes.

Once these foundations are in place, expand collection where it helps explain or predict issues—such as detailed application metrics, queue depths, or cache hit rates. Use sampling and retention policies to keep cost and complexity under control.
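To make the SLO idea concrete, here is a small sketch that evaluates a latency objective like the checkout example above and reports how much error budget is left. The function and class names, and the sample data, are hypothetical; real platforms usually compute this for you from stored telemetry.

```python
from dataclasses import dataclass


@dataclass
class SloReport:
    compliance: float        # fraction of requests that met the latency target
    budget_remaining: float  # fraction of the error budget still unspent


def evaluate_latency_slo(latencies_s, threshold_s=2.0, target=0.995):
    """Evaluate an SLO such as "completes within 2s, 99.5% of the time"."""
    if not latencies_s:
        raise ValueError("need at least one request to evaluate the SLO")
    total = len(latencies_s)
    bad = sum(1 for latency in latencies_s if latency > threshold_s)
    allowed_bad = (1.0 - target) * total  # error budget, expressed in requests
    compliance = (total - bad) / total
    budget_remaining = 1.0 - bad / allowed_bad if allowed_bad else 0.0
    return SloReport(compliance, budget_remaining)


# Hypothetical sample: 1,000 checkout requests, 3 of them slower than 2s.
sample = [0.4] * 997 + [2.5] * 3
report = evaluate_latency_slo(sample)
print(f"compliance={report.compliance:.4f} "
      f"budget_remaining={report.budget_remaining:.2f}")
```

With a 99.5% target, 1,000 requests allow 5 slow ones, so 3 slow requests leave roughly 40% of the budget. A negative `budget_remaining` means the objective has been missed for the window.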

Teams at Skyscanner, for instance, started by focusing on a limited set of service health and business metrics in New Relic to understand what “healthy” looked like across hundreds of services. Only after that baseline was solid did they expand to more detailed telemetry for deeper optimization.

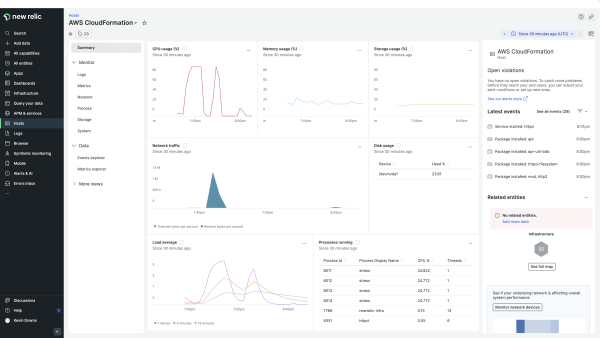

3. Correlate metrics, logs, traces, and events in shared context

You don’t troubleshoot systems in separate silos, so your telemetry shouldn’t live there either. To make sense of distributed failures, you need to correlate metrics, logs, traces, and events in a shared context.

That starts with consistent attributes, such as:

- Service and team ownership (e.g., service.name, team).

- Deployment details (e.g., version, commit_sha, deployment_id).

- Environment and region (e.g., env, region, cluster).

- User or tenant identifiers where appropriate (e.g., customer_tier, account_id).

With that in place, you can:

- Start from a latency spike, pivot into the corresponding trace, then open the exact log lines for the failing span.

- Filter everything (metrics, logs, traces) to “production, EU region, premium customers” when you see a localized incident.

- Overlay deployment events on your dashboards to see whether issues correlate with recent changes.
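The pivot from a slow span to its log lines only works when both record types carry the same identifiers. The sketch below shows the idea on in-memory data; the record layout, the `trace_id` field, and the region values are assumptions for illustration, not a specific platform's schema.

```python
from collections import defaultdict

# Hypothetical telemetry records that share a common set of attributes.
spans = [
    {"trace_id": "t1", "service.name": "checkout", "env": "production",
     "region": "eu-west-1", "customer_tier": "premium", "duration_ms": 2300},
    {"trace_id": "t2", "service.name": "checkout", "env": "production",
     "region": "us-east-1", "customer_tier": "free", "duration_ms": 120},
]
logs = [
    {"trace_id": "t1", "level": "ERROR", "message": "payment gateway timeout"},
    {"trace_id": "t2", "level": "INFO", "message": "order placed"},
]


def matches(record, **filters):
    """True when the record carries every requested attribute value."""
    return all(record.get(key) == value for key, value in filters.items())


def logs_for_slow_spans(spans, logs, min_duration_ms, **filters):
    """Pivot from slow spans to their log lines via the shared trace_id."""
    logs_by_trace = defaultdict(list)
    for line in logs:
        logs_by_trace[line["trace_id"]].append(line["message"])
    return {
        span["trace_id"]: logs_by_trace[span["trace_id"]]
        for span in spans
        if span["duration_ms"] >= min_duration_ms and matches(span, **filters)
    }


slow = logs_for_slow_spans(
    spans, logs, min_duration_ms=1000,
    env="production", region="eu-west-1", customer_tier="premium",
)
print(slow)
```

If any team omitted `trace_id` from its logs or used a different key for the region, the join would silently return nothing, which is why consistent attributes come first.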

This is where a unified observability platform like New Relic is useful: you ingest all telemetry types into one data platform, apply a consistent schema, and query across them using a single language. You spend less time stitching evidence together and more time understanding the underlying behavior.

4. Use dynamic alerting to reduce noise and fatigue

Static thresholds baked into dashboards might be enough for simple systems, but they don’t hold up in dynamic cloud environments with daily traffic patterns, autoscaling, and frequent releases. If every blip becomes an alert, on-call engineers quickly learn to ignore them.

To keep alerts meaningful:

- Alert on symptoms that matter, not every deviation. Focus on user-impacting conditions (error rate, high latency on key paths, SLO burn) instead of low-level resource metrics alone.

- Use baselines and anomalies. Let your monitoring system learn “normal” for a metric and alert when behavior deviates significantly, not when it crosses an arbitrary static line.

- Combine conditions. Alert only when multiple related signals fire together, e.g., a high error rate and increased latency while traffic is above a minimum threshold.

- Route alerts intelligently. Use ownership metadata and routing rules so the right team gets notified, with clear context and runbooks attached.
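As a rough sketch of the baseline and combined-condition ideas, the code below flags a value only when it sits several standard deviations above recent history, and pages only when error rate and latency are both anomalous under meaningful traffic. The z-score approach, the thresholds, and all names here are illustrative assumptions; production baselining is typically more sophisticated (seasonality, trend, and so on).

```python
from statistics import mean, stdev


def is_anomalous(history, current, z_threshold=3.0):
    """Flag a value that sits far above the learned baseline."""
    if len(history) < 2:
        return False  # not enough data to form a baseline
    baseline, spread = mean(history), stdev(history)
    if spread == 0:
        return current > baseline
    return (current - baseline) / spread > z_threshold


def should_page(error_history, latency_history, current, min_rps=50):
    """Page only when errors AND latency are anomalous under real traffic."""
    return (
        current["rps"] >= min_rps
        and is_anomalous(error_history, current["error_rate"])
        and is_anomalous(latency_history, current["p95_ms"])
    )


# Hypothetical recent history for one service.
error_history = [0.010, 0.012, 0.009, 0.011, 0.010, 0.013]
latency_history = [210, 190, 205, 220, 200, 215]

quiet_blip = {"rps": 5, "error_rate": 0.20, "p95_ms": 900}
real_incident = {"rps": 400, "error_rate": 0.20, "p95_ms": 900}

print(should_page(error_history, latency_history, quiet_blip))
print(should_page(error_history, latency_history, real_incident))
```

The traffic floor is what keeps a handful of failed requests at 3 a.m. from waking anyone up, while the same error rate at 400 requests per second still pages.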

Platforms like New Relic support dynamic baselining, multi-condition policies, and event correlation so you see a smaller number of richer, more contextualized incidents instead of dozens of disconnected alerts.

5. Monitor end-to-end service paths, not isolated components

Users don’t care which service is slow. They care that “search is broken” or “checkout is timing out.” If you only monitor individual components, you’ll miss how issues propagate across the full path.

Map and monitor the key flows that matter to your business:

- User journeys: Sign-up, login, search, checkout, payment, content load, etc.

- Critical API flows: Partner integrations, payment providers, mobile app backends.

- Background jobs: Billing runs, data pipelines, async processing that affect user experience indirectly.

For each of these, you want:

- End-to-end latency and error rate, not just per-service metrics.

- Distributed traces that show every hop and where time is spent.

- Synthetic tests that exercise these paths from key regions and networks.
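To illustrate why end-to-end traces matter more than per-service averages, this sketch takes the spans of one request and works out the total latency plus the hop where time is actually spent. The span format and service names are hypothetical, and the self-time calculation assumes the children of a span do not overlap one another.

```python
# Hypothetical spans for one checkout request; times are millisecond offsets.
trace = [
    {"name": "frontend", "parent": None, "start": 0, "end": 950},
    {"name": "cart-service", "parent": "frontend", "start": 20, "end": 140},
    {"name": "payment-service", "parent": "frontend", "start": 150, "end": 900},
    {"name": "card-provider", "parent": "payment-service", "start": 170, "end": 880},
]


def end_to_end_ms(spans):
    """Total request latency, taken from the root span."""
    root = next(s for s in spans if s["parent"] is None)
    return root["end"] - root["start"]


def self_time_ms(spans):
    """Time spent in each span, excluding time spent in its direct children."""
    result = {}
    for span in spans:
        children = [c for c in spans if c["parent"] == span["name"]]
        child_time = sum(c["end"] - c["start"] for c in children)
        result[span["name"]] = (span["end"] - span["start"]) - child_time
    return result


total = end_to_end_ms(trace)
self_times = self_time_ms(trace)
slowest_hop = max(self_times, key=self_times.get)
print(f"end-to-end: {total} ms, slowest hop: {slowest_hop}")
```

Here payment-service looks slow when viewed alone (750 ms), but almost all of that time is spent waiting on the external provider, which is the kind of detail a per-component dashboard hides.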

New Relic’s distributed tracing and synthetic monitoring are built for exactly this: you can trace a single request across microservices, queues, and external dependencies, then back that up with scheduled, scripted checks that run the same flows users do. That combination makes it much easier to reason about cascading failures and fragile links in your architecture.

6. Standardize telemetry to support collaboration across teams

In a growing organization, each team inventing its own way of naming metrics, tagging services, and structuring logs is a recipe for confusion. During an incident, that chaos becomes a tax on every engineer involved.

Standardization doesn’t need to be heavy-handed, but you do need shared conventions.

For example:

- A common tagging strategy across all telemetry (service, team, environment, region, application tier).

- Consistent metric and attribute naming (e.g., http.server.duration, db.query.duration, queue.depth).

- Log formats that always include correlation IDs and key identifiers.

- Service catalogs that map services to owning teams, SLOs, and runbooks.
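Conventions hold up better when they are checked automatically, for example in CI or at the edge of a telemetry pipeline. The following sketch validates a metric name and its tags against a shared convention; the required tag set and the naming pattern are assumptions modeled on the examples above, not an official schema.

```python
import re

REQUIRED_TAGS = ("service", "team", "environment", "region")
# Assumed convention: lowercase dot-separated names such as http.server.duration.
METRIC_NAME = re.compile(r"^[a-z][a-z0-9_]*(\.[a-z][a-z0-9_]*)+$")


def validate_metric(name, tags):
    """Return a list of human-readable convention violations."""
    problems = []
    if not METRIC_NAME.match(name):
        problems.append(f"metric name {name!r} is not lowercase dot-separated")
    for tag in REQUIRED_TAGS:
        if not tags.get(tag):
            problems.append(f"missing required tag {tag!r}")
    return problems


good = validate_metric(
    "http.server.duration",
    {"service": "checkout", "team": "payments",
     "environment": "production", "region": "eu"},
)
bad = validate_metric("CheckoutLatency", {"service": "checkout"})
print(good)
print(bad)
```

Returning a list of problems rather than raising on the first one lets a team fix every violation in a single pass.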

Using open standards like OpenTelemetry helps here, because you can define schema and attributes once and apply them consistently across languages and frameworks. New Relic supports ingesting OpenTelemetry data directly into its platform, so your investment in standardization carries across tools and stacks.

The payoff is obvious during incidents: when everyone speaks the same observability “language,” you waste less time translating and more time fixing.

The real cost of poor cloud monitoring

When monitoring is fragmented, the impact goes far beyond a few extra minutes of debugging. You pay for it in slower response, misdiagnosed incidents, and higher operational overhead.

Common failure modes include:

- Delayed detection: You find out about issues from customers or support tickets because telemetry is scattered across tools and no one has a clear view of user impact.

- Tool hopping during incidents: Engineers bounce between logs, metrics, tracing UIs, and CI/CD systems, manually correlating timestamps and IDs instead of seeing a unified timeline.

- Misdiagnosis and rework: Without reliable, correlated data, teams chase red herrings—restarting healthy services or rolling back unrelated changes—before discovering the real root cause.

- Overprovisioning and waste: When you lack visibility into capacity, cache effectiveness, or queue behavior, you tend to solve performance issues by throwing infrastructure at the problem.

- Engineer burnout: Constant low-value alerts, midnight tool-hunting, and unclear ownership eventually push your best people away from on-call or, worse, out of the organization.

All of this shows up as higher downtime, degraded user experience, and slower delivery. Clear, unified observability flips that script: you spot issues earlier, involve the right people faster, and rely on data instead of guesswork.

Which metrics and data sources should you monitor in cloud environments?

When you think about cloud monitoring best practices, it’s useful to start from the four pillars of observability: metrics, logs, traces, and events. Each plays a different role in understanding failure modes in cloud-native architectures.

Here’s how to think about what to collect at each layer.

Metrics: high-level health and trends

- Infrastructure: CPU, memory, disk I/O, network throughput, and saturation for compute (VMs, containers, serverless functions). Cloud provider managed services (databases, load balancers, caches) also expose critical metrics.

- Applications: Request rate, latency, error rate, queue depths, retry counts, cache hit ratios, thread pool usage.

- User experience: Page load time, Core Web Vitals, mobile app start time, API latency from client perspective.

Logs: detailed context and state

- Infrastructure logs: System logs from nodes, orchestration logs from Kubernetes, audit logs from cloud providers.

- Application logs: Structured logs with context (request ID, user ID, feature flags) to explain why a particular request failed or behaved unusually.

- Security and compliance logs: Authentication failures, permission changes, configuration drifts.
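One way to get structured application logs with request context is a JSON formatter on top of Python's standard `logging` module. This is a minimal sketch: the `JsonFormatter` class and the chosen context fields are illustrative, and the `StringIO` stream simply stands in for stdout or a log shipper.

```python
import io
import json
import logging


class JsonFormatter(logging.Formatter):
    """Render each record as one JSON object with correlation fields."""

    CONTEXT_FIELDS = ("request_id", "user_id", "feature_flags")

    def format(self, record):
        payload = {
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        # Fields passed via `extra=` become attributes on the record.
        for field in self.CONTEXT_FIELDS:
            if hasattr(record, field):
                payload[field] = getattr(record, field)
        return json.dumps(payload)


stream = io.StringIO()  # stands in for stdout or a log shipper
handler = logging.StreamHandler(stream)
handler.setFormatter(JsonFormatter())
logger = logging.getLogger("checkout")
logger.addHandler(handler)
logger.setLevel(logging.INFO)
logger.propagate = False

logger.error(
    "payment declined",
    extra={"request_id": "req-123", "user_id": "u-42",
           "feature_flags": ["new_checkout"]},
)
entry = json.loads(stream.getvalue())
print(entry)
```

Because every line is a self-describing JSON object, a log backend can index `request_id` directly instead of relying on regular expressions over free text.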

Traces: end-to-end request paths

- Distributed traces: Spans across services, queues, and external dependencies that show where time is spent and where errors originate.

- Sampling strategy: Smart sampling to capture representative traces, plus guaranteed capture of traces for error or high-latency requests.
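The sampling strategy described above can be sketched as a decision made once a trace has finished: always keep errors and slow requests, and keep a fixed fraction of everything else. The function name, thresholds, and hash-based bucketing are assumptions for illustration rather than any particular collector's implementation.

```python
import hashlib


def keep_trace(trace_id, had_error, duration_ms,
               slow_threshold_ms=1000, sample_rate=0.1):
    """Decide, once a trace has finished, whether to retain it."""
    if had_error or duration_ms >= slow_threshold_ms:
        return True  # always keep the traces you are most likely to need
    # Hash the trace ID so every collector makes the same decision for a trace.
    digest = hashlib.sha256(trace_id.encode()).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64  # uniform in [0, 1)
    return bucket < sample_rate


# Hypothetical traffic: 10,000 healthy, fast traces.
kept = sum(keep_trace(f"trace-{i}", False, 50) for i in range(10_000))
print(f"kept {kept} of 10000 healthy traces")
print(keep_trace("trace-err", True, 50), keep_trace("trace-slow", False, 5000))
```

Hashing the trace ID instead of calling a random number generator keeps the decision deterministic, so a trace is never half-retained when several collectors see different spans from it.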

Events: changes and lifecycle triggers

- Deployment events: Releases, rollbacks, config changes, infrastructure changes (e.g., autoscaling events, node rotations).

- Feature flag events: Flag toggles that might correlate with behavior changes for specific user segments.

- Business events: Campaign launches, partner cutovers, large data imports.

Distributed systems add specific challenges like cascading failures and hidden dependencies:

- A slow or failing downstream service can cause retries and queue buildup upstream, increasing load and compounding the problem.

- Regional failovers can overload other regions if capacity assumptions are wrong.

- Network partitions and intermittent timeouts can cause inconsistent state or partial failures that are hard to see from a single layer.
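A short calculation shows how quickly retries can compound. If each layer in a call chain retries a failing call independently, the worst-case number of attempts reaching the deepest service grows exponentially with depth. The function below is a simplified model under that assumption (no backoff, no retry budgets, every attempt fails).

```python
def worst_case_attempts(retries_per_layer, layers):
    """Attempts hitting the deepest service when every layer retries on failure.

    Each layer makes 1 original call plus `retries_per_layer` retries, and
    each of those calls triggers the full retry behavior of the layer below.
    """
    return (1 + retries_per_layer) ** layers


for depth in (1, 2, 3):
    print(depth, worst_case_attempts(retries_per_layer=3, layers=depth))
```

With three retries at each of three layers, one user request can turn into 64 calls against an already struggling dependency, which is why retry counts and queue depths are worth monitoring alongside latency.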

This is where a platform like New Relic is helpful: you can ingest metrics, logs, traces, and events from infrastructure, applications, and user devices into a single data platform, then explore them with one query language. That unified view is what lets you reason about complex interactions instead of staring at siloed graphs.

How do you choose tools to support cloud monitoring best practices?

Choosing tools for cloud monitoring should start with your architecture and operational maturity, not with a features checklist. You want a stack that grows with you as you add services, regions, and teams, without exploding complexity.

When comparing tools, focus on your desired outcomes. A practical evaluation framework might include:

- Data unification: Does the platform actually unify metrics, logs, traces, and events into one datastore, or are they separate products behind the scenes?

- Integration ecosystem: How well does it integrate with your cloud providers (AWS, Azure, GCP), Kubernetes, serverless runtimes, and managed services? Are there first-class integrations for your critical components?

- Open standards support: Can you send OpenTelemetry data without extensive customization? How easy is it to standardize on a single telemetry pipeline?

- AI-assisted analysis: Are there practical features that help correlate related alerts, surface likely root causes, or summarize incident timelines, without trying to “automate away” engineers?

- Usability for engineers: Can on-call engineers quickly move from an alert to related traces, logs, and deployment events? Is the UI fast and predictable under pressure?

- Cost transparency: Is the pricing model clear? Can you control ingestion and retention without constant manual policing?

New Relic is built around a single data platform and query language, with broad integration coverage and features such as AIOps-assisted incident correlation. That combination helps teams reduce tool sprawl while improving the quality and speed of their operational decisions.

Implement cloud monitoring best practices with unified observability

Cloud monitoring best practices help you stay in flow and make decisions based on evidence, not assumptions. Tools matter, but the value comes from how you use them.

When you combine best practices with a unified platform, you can shorten incident investigations, align teams around shared views of system health, and support faster iteration without losing reliability.

To see how this looks on real data, request a New Relic demo and walk through your own environment with guidance from observability specialists. They’ll help you apply these practices to cut alert noise, troubleshoot faster, and operate more reliably at scale.

FAQs about cloud monitoring best practices

How long does it take to see value from cloud monitoring best practices?

You can usually see value within days if you focus on a few critical services and user journeys first. Connect your cloud accounts and Kubernetes clusters, instrument one or two key applications, and set up basic SLO-based alerts. As you expand coverage and standardize telemetry across more teams, you’ll see compounding benefits in faster incident resolution and more confident releases.

Can cloud monitoring best practices scale as environments grow?

Yes—if you design them with scale in mind. Practices like standard tagging, OpenTelemetry-based instrumentation, and unified data platforms are explicitly meant to support growing numbers of services, regions, and teams. As your environment expands, you rely more on conventions, automation, and service ownership models, not on manually maintained dashboards and ad hoc scripts.

What are the most common mistakes teams make when adopting cloud monitoring best practices?

Common mistakes include treating monitoring as a one-time tooling project, over-collecting low-value metrics, creating too many noisy alerts, and skipping standardization. Another pitfall is focusing only on component health without tying telemetry to user journeys and business impact. You avoid these by starting from real workflows and SLOs, unifying data, and evolving practices as part of your regular engineering work rather than as an afterthought following a major outage.

The views expressed on this blog are those of the author and do not necessarily reflect the views of New Relic. Any solutions offered by the author are environment-specific and not part of the commercial solutions or support offered by New Relic. Please join us exclusively at the Explorers Hub (discuss.newrelic.com) for questions and support related to this blog post. This blog may contain links to content on third-party sites. By providing such links, New Relic does not adopt, guarantee, approve, or endorse the information, views, or products available on such sites.