Quickstart

Integration Features

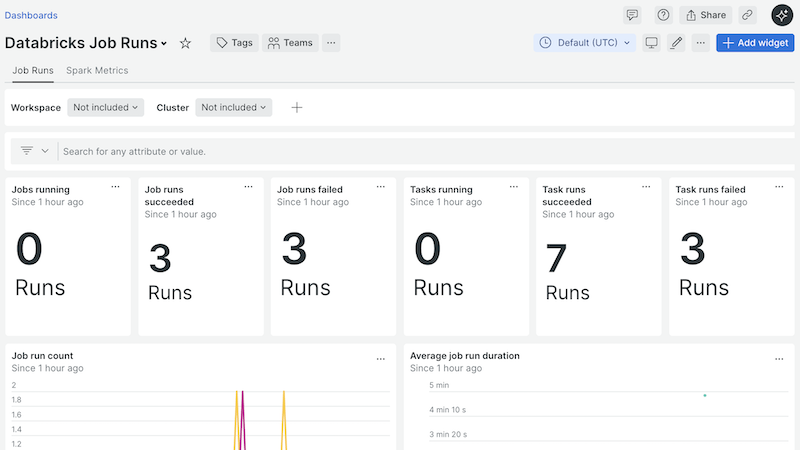

Dashboards

The Databricks Integration quickstart contains 6 dashboards. These interactive visualizations let you explore your data, understand context, and resolve problems faster.
